Introduction

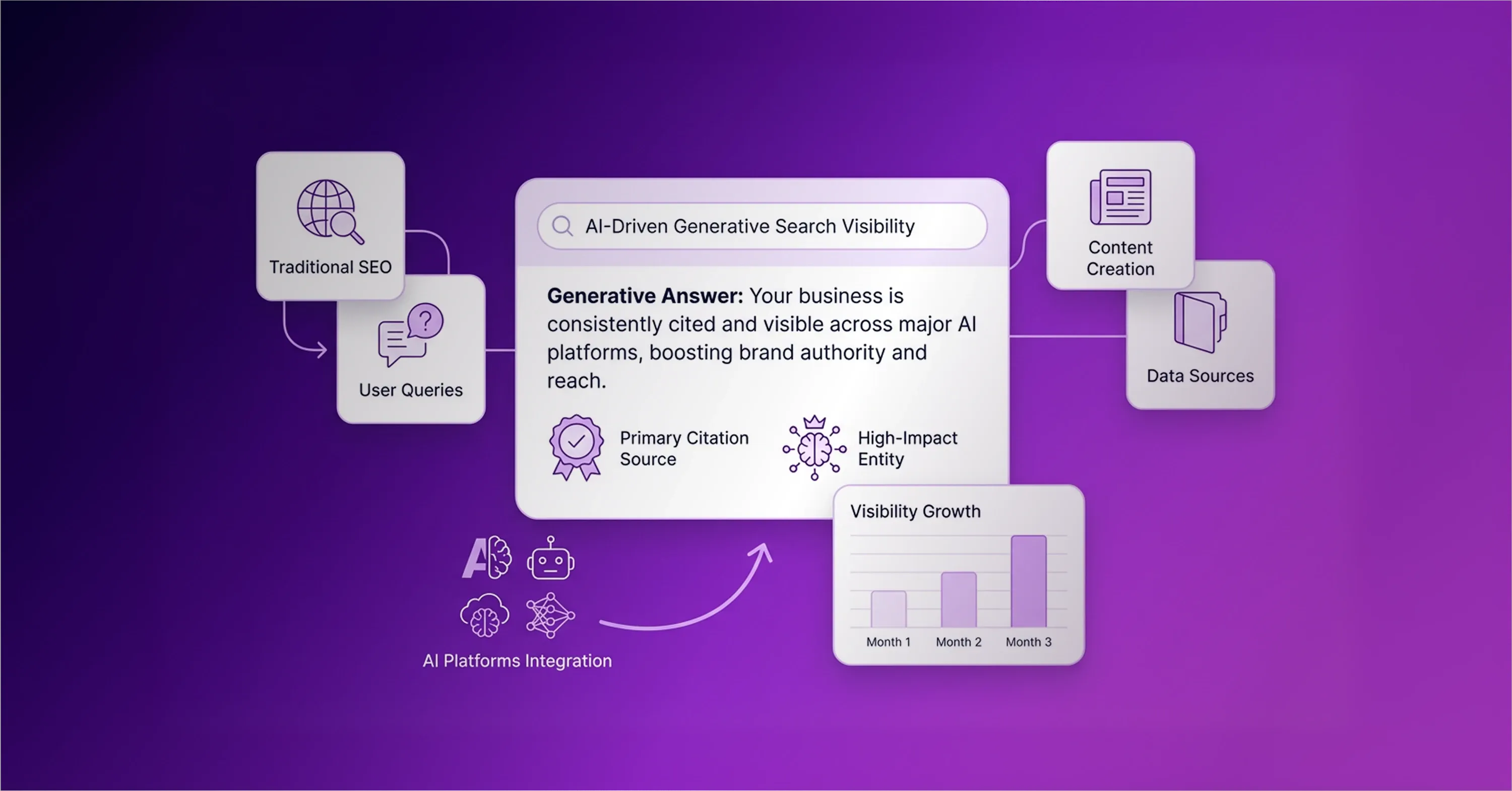

Businesses assemble an AI marketing toolkit to generate content, but often ignore the technology that helps AI search engines find that content. Generative search platforms synthesize data and answer user queries directly. Over the past year, AI search traffic grew 7x, and this shift reshaped buyer research behavior. However, traditional search engine optimization tools evaluate keyword density and blue-link rankings rather than brand citations in large language models. Companies face a significant problem when they rely on legacy software because these tools cannot accurately measure how often platforms like ChatGPT or Perplexity recommend their products. Measuring brand visibility across generative platforms requires a dedicated stack focused specifically on large language models. An updated technology stack monitors brand mentions and identifies semantic gaps across multiple platforms. This article explores how to build a lean visibility workflow that translates data into targeted public relations strategies.

Creation Versus Visibility Illusion

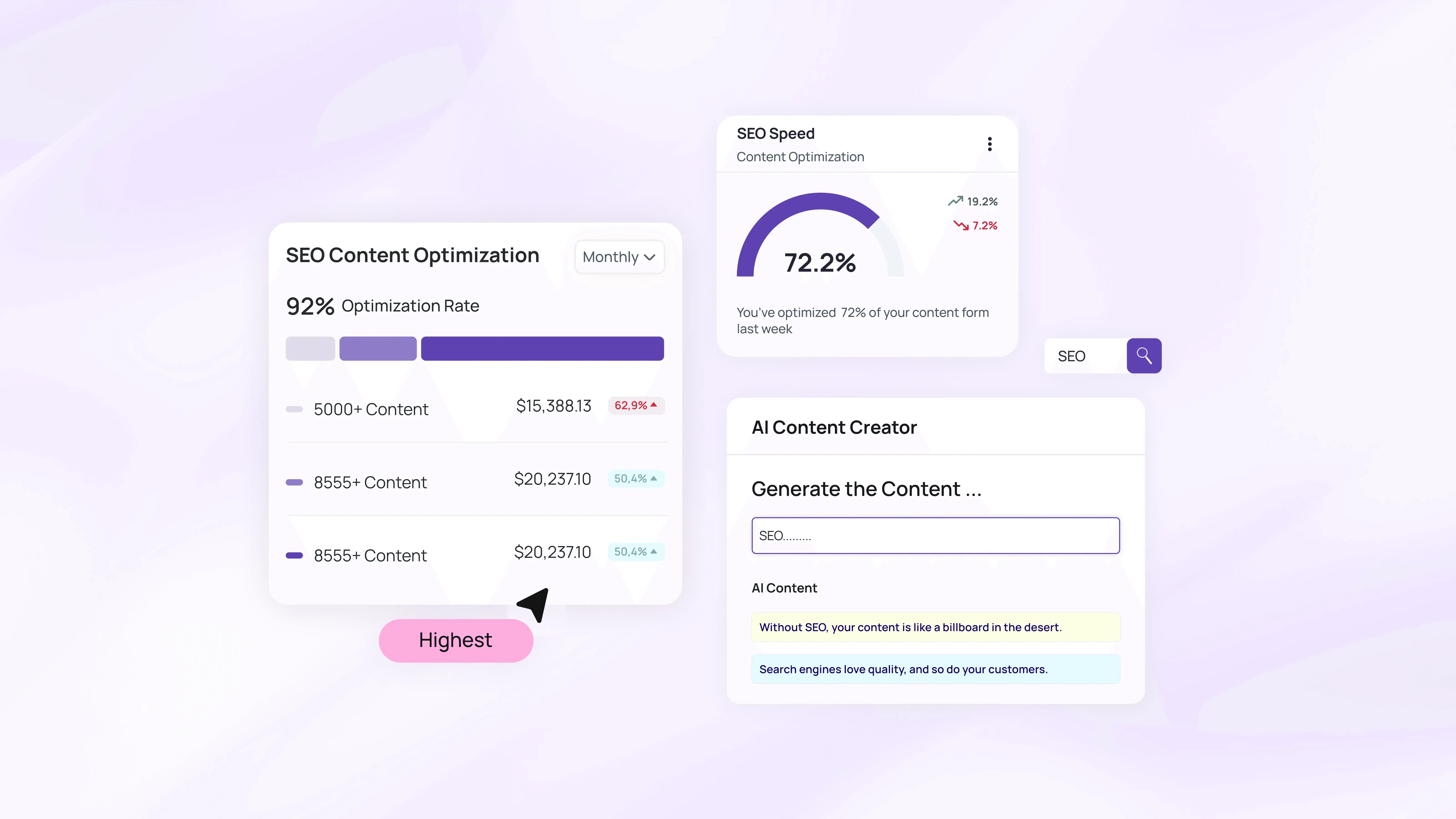

Many companies try to build this visibility workflow through large article volumes, believing this production guarantees brand citations from artificial intelligence platforms. These organizations add content generators to their AI marketing toolkit and expect immediate results. Content generation software produces text rapidly. However, this software cannot track how language models process information and evaluate underlying concepts before they deliver an answer to the end user. Organizations need certainty when they invest in digital campaigns. Creating more blog posts does not force a generative engine to recommend a specific product. Traditional search engine optimization (SEO) strategies rely on keyword density and backlinks to rank web pages. These legacy tactics fail in generative search environments because language models synthesize answers directly. The lack of visibility tracking software leaves companies with no assurance that their generative strategies actually work. According to a Yext report, fewer than 26% of organizations plan content that captures artificial intelligence citations. Companies focus heavily on production speed and ignore the metrics required to measure performance. Businesses require specialized optimization tools to monitor how language models perceive and reference their brands. Organizations maintain their market presence when they shift their focus from raw output to measurable visibility.

Software Platform Overwhelm

Businesses often drain their budgets when they experiment with dozens of disconnected platforms to track measurable visibility. A recent survey from Stacked Marketer found that 58% of respondents feel overwhelmed when they manage daily channels and digital instruments. The same data indicates that the average corporate team currently uses 11 tools. This number will likely reach 16 tools within two years. When companies add more applications to a bloated marketing toolkit, they do not improve search performance. Instead, these organizations lose trust in their analytics because they have to piece together fragmented data from multiple dashboards. Companies avoid this platform overwhelm when they adopt a lean software stack that focuses on measurable outcomes. A simplified workflow requires only a few core applications. These systems monitor artificial intelligence platforms and identify missing semantic information. Businesses approach their campaigns with conviction when they rely on precise visibility data rather than vanity metrics. When companies drop useless subscriptions, they free up their budgets to invest in targeted search engine visibility strategies. A lean technology stack translates raw citation numbers into actionable public relations plans, helping companies secure brand mentions faster.

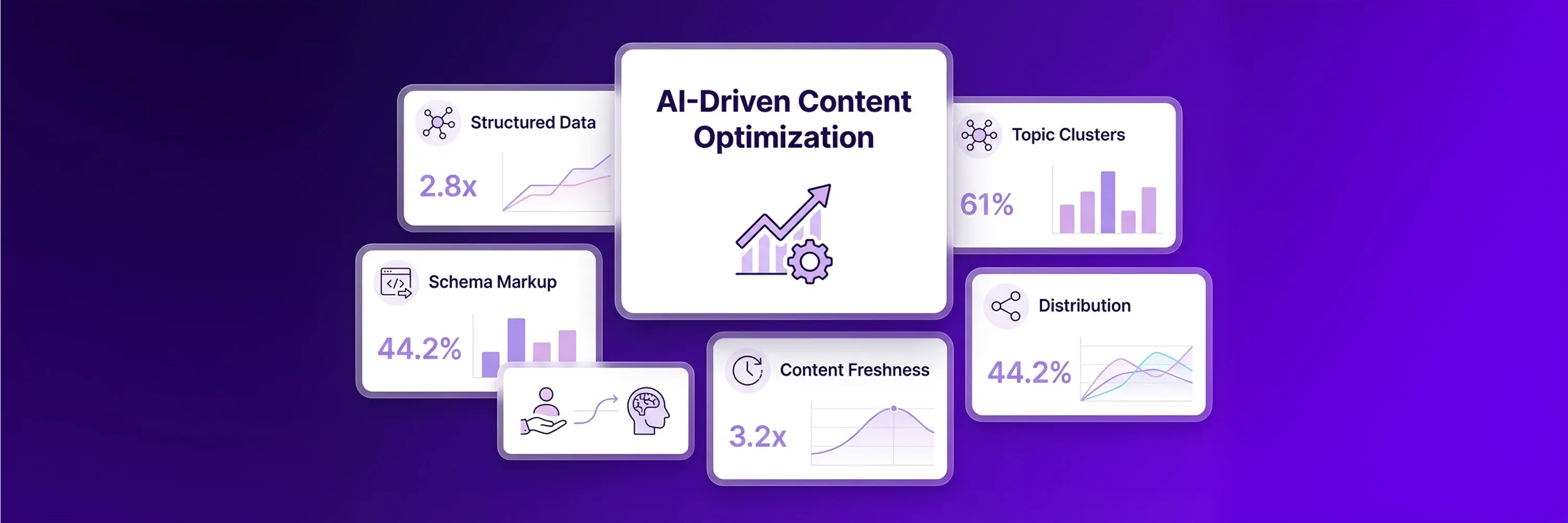

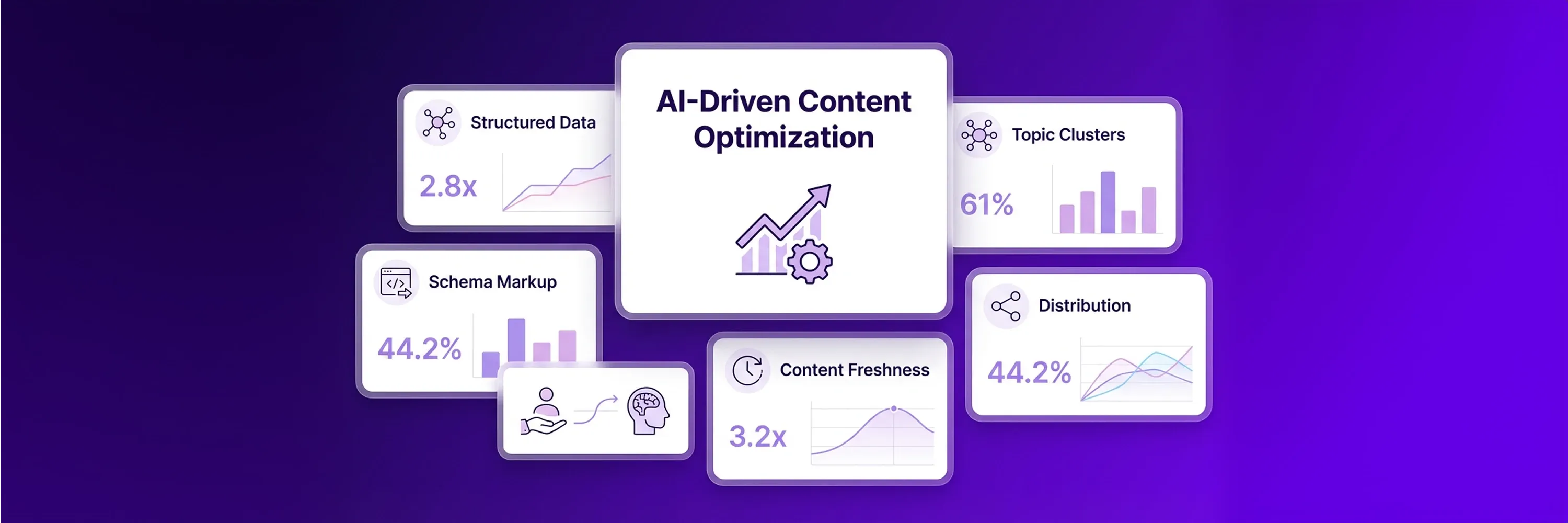

AI Marketing Toolkit For Visibility

A lean technology stack for Generative Engine Optimization (GEO) requires entirely different software infrastructure than traditional organic search. Companies build their AI marketing toolkit around specialized platforms that interact directly with language models. Industry analysts from RankWeave confirm that traditional SEO tools fail to track artificial intelligence search engines. Legacy analytics evaluate static rankings on search engine results pages, but they cannot measure conversational responses. Organizations establish a foundation for accurate measurement when they implement a dedicated AI stack that captures dynamic citations. A visibility-focused infrastructure includes brand mention trackers, semantic gap analyzers, and entity resolution platforms. These specialized tools evaluate the strength of a brand's digital presence across multiple generative platforms. Companies achieve greater certainty in their reporting because they track actual answer nugget density instead of estimated search volume. This modernized software architecture helps businesses locate the specific queries that trigger their brand names in machine learning models. Organizations secure these digital recommendations when they monitor their performance with a generative optimization checker that interprets semantic relationships.

Mention Trackers Across Generative Engines With AI Stack

A generative optimization checker works within a dedicated AI stack that monitors brand mentions across several artificial intelligence platforms at once. These trackers query language models daily to document exactly when and how a brand appears in synthesized answers. Companies use this data to calculate their AI share of voice, meaning the proportion of mentions in generated answers that they earn relative to their direct competitors. Measuring citation frequency shows organizations their current market position and provides assurance that their public relations campaigns generate results. Visibility trackers capture three critical data points during this process:

- The specific platform that recommended the brand
- The exact user prompt that triggered the citation
- The sentiment that accompanies the brand mention

This automated tracking replaces manual searches and gives businesses a precise benchmark for their optimization efforts.
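The three data points and the share-of-voice calculation can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the `Mention` record, the sentiment scale, and the brand names are all hypothetical, and a real tracker would fill the list from its daily queries.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Mention:
    platform: str     # which generative engine produced the answer
    prompt: str       # the user prompt that triggered the citation
    brand: str        # the brand named in the answer
    sentiment: float  # hypothetical scale: -1.0 (negative) to 1.0 (positive)


def share_of_voice(mentions: List[Mention]) -> Dict[str, float]:
    """Return each brand's fraction of all recorded mentions."""
    counts = Counter(m.brand for m in mentions)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: n / total for brand, n in counts.items()}


# Hypothetical sample data for illustration only.
mentions = [
    Mention("ChatGPT", "best crm for startups", "Acme", 0.8),
    Mention("Perplexity", "best crm for startups", "Acme", 0.4),
    Mention("ChatGPT", "best crm for startups", "Globex", 0.1),
    Mention("Perplexity", "crm with invoicing", "Initech", -0.2),
]

sov = share_of_voice(mentions)
print(sov)
```

In this sample, Acme holds half of all recorded mentions, which is the kind of benchmark a team can compare week over week.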

Semantic Gap Identification

Semantic gap analyzers evaluate a company's digital footprint and identify missing information that prevents language models from citing a brand. These diagnostic tools compare a company's web content against the semantic knowledge base of major generative search engines. The analyzers highlight specific topics and facts that the brand has failed to publish online. Companies add these gap identification platforms to their marketing toolkit to uncover blind spots in their content strategies. When organizations publish the missing information, they bridge semantic gaps and improve their overall content relevance. Language models establish trust in a brand when they find detailed and factually accurate information across multiple digital channels. Companies use these insights to write targeted articles that answer the exact questions generative engines struggle to resolve.
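At its simplest, gap identification is a set difference between the topics buyers ask about and the topics a brand has published. The sketch below assumes exact matching on normalized topic labels, which is a deliberate simplification; commercial analyzers likely rely on richer semantic matching. The function names and the topic lists are hypothetical.

```python
from typing import Iterable, List


def normalize(topic: str) -> str:
    """Lowercase a topic label and collapse repeated whitespace."""
    return " ".join(topic.lower().split())


def find_semantic_gaps(asked_topics: Iterable[str],
                       published_topics: Iterable[str]) -> List[str]:
    """Return topics that appear in buyer questions but not in published content."""
    published = {normalize(t) for t in published_topics}
    asked = {normalize(t) for t in asked_topics}
    return sorted(asked - published)


# Hypothetical inputs: topics seen in tracked prompts vs. topics the brand covers.
asked = ["Pricing tiers", "SOC 2 compliance", "API rate limits", "Data residency"]
published = ["pricing tiers", "API rate limits"]

gaps = find_semantic_gaps(asked, published)
print(gaps)
```

The resulting list doubles as a backlog of articles to write.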

Entity Relationship Resolution

Entity resolution platforms establish clear connections between corporate brands and specific industry topics. Language models process the internet as a web of distinct entities rather than a collection of keywords. Organizations use entity resolution software to provide structured data that artificial intelligence engines can easily interpret. This software maps relationships between a company, its products, its key executives, and its operational categories. A modernized technology stack relies on this mapping process to feed machine-readable information directly to generative platforms. Businesses write their technical documentation with conviction because they know entity resolution tools translate their content into the correct semantic format. When language models understand how a brand relates to a specific subject, they recommend that brand more frequently in conversational responses.
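One common way to express such a mapping is schema.org markup serialized as JSON-LD. The article does not name a format, so treat that choice as an assumption. The sketch below builds a small graph linking an organization, an executive, a product, and a category. The company, person, and URLs are placeholders.

```python
import json

# Hypothetical identifier used to link the product back to the organization.
ORG_ID = "https://example.com/#organization"

entity_graph = {
    "@context": "https://schema.org",
    "@graph": [
        {
            "@type": "Organization",
            "@id": ORG_ID,
            "name": "Example Co",
            "url": "https://example.com",
            # Topics the company wants to be associated with.
            "knowsAbout": ["customer relationship management"],
            # A key executive, modeled as an employee with a job title.
            "employee": {
                "@type": "Person",
                "name": "Jane Doe",
                "jobTitle": "Chief Executive Officer",
            },
        },
        {
            "@type": "Product",
            "name": "Example CRM",
            "category": "CRM software",
            # Reference the organization node instead of repeating it.
            "manufacturer": {"@id": ORG_ID},
        },
    ],
}

json_ld = json.dumps(entity_graph, indent=2)
print(json_ld)
```

Referencing the organization by `@id` keeps the relationship explicit without duplicating the company's details in every product entry.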

Measurement Workflow Architecture

Companies capture frequent brand recommendations when they integrate visibility tracking tools into daily operations and eliminate data silos. These companies build an AI stack to monitor how different language models process specific brand information. This monitoring evaluates the strength of ongoing digital public relations campaigns, and the data reveals inconsistencies. A Superlines analysis shows that AI citation counts for the same brand vary by a factor of 615 between platforms. Because citations vary this widely across language models, companies need a centralized marketing toolkit to track these discrepancies accurately.
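A centralized view makes this cross-platform variation easy to quantify. As a rough sketch, the spread can be expressed as the ratio between the highest and lowest citation counts for one brand. The function name, the platform list, and the counts below are hypothetical, and platforms with zero citations are excluded so the ratio stays defined.

```python
from typing import Dict, Optional


def citation_spread(counts_by_platform: Dict[str, int]) -> Optional[float]:
    """Ratio of the highest to the lowest nonzero citation count.

    Returns None when fewer than two platforms cite the brand,
    because a ratio is not meaningful in that case.
    """
    nonzero = [c for c in counts_by_platform.values() if c > 0]
    if len(nonzero) < 2:
        return None
    return max(nonzero) / min(nonzero)


# Hypothetical monthly citation counts for a single brand.
counts = {"ChatGPT": 120, "Perplexity": 8, "Gemini": 30}

spread = citation_spread(counts)
print(spread)
```

A large ratio signals that the brand is well represented on one platform and nearly invisible on another, which helps decide where to focus next.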

Retailers use these tracking insights to improve their AI visibility for e-commerce recommendations across generative search systems. These businesses determine which topics matter most to language models. Companies follow a clear operational process to translate raw tracking data into publishing strategies:

- Extract query data from the semantic analyzer daily.
- Compare competitor mentions across different language models.
- Publish targeted articles that address identified knowledge gaps.
- Update technical documentation to clarify product specifications.

This structured process provides certainty about daily publishing priorities and shapes the upcoming public relations strategy.

Return On Artificial Intelligence Mentions

Organizations track specific campaign metrics to measure the return on investment from artificial intelligence mentions. Traditional ranking positions do not provide accurate performance data for generative search platforms, so companies rely on an updated AI marketing toolkit to evaluate share of voice and assess citation sentiment. A brand earns trust when language models reference it positively and consistently across multiple conversational responses. These positive citations eventually drive sales.

An Exposure Ninja study found that AI search traffic converts at 14.2%, while traditional organic search converts at 2.8%. This conversion gap creates an advantage for optimized brands. A Semrush study confirmed this trend and found that AI search visitors are worth 4.4 times more than traditional organic visitors. Organizations capture this converting traffic when they measure their visibility performance accurately. Companies perform specific actions to quantify digital visibility:

- Calculate the brand's share of voice against three primary competitors.
- Score the citation sentiment across all generated answers.
- Track the exact conversational prompts that drive website visits.
- Connect traffic data to specific revenue outcomes.

These performance metrics show how the visibility strategy directly impacts financial growth.
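The conversion figures cited above translate into a simple calculation. The sketch below uses the two rates from the Exposure Ninja study. The visit volume is a hypothetical input chosen only to make the comparison concrete.

```python
# Conversion rates cited from the Exposure Ninja study.
AI_SEARCH_RATE = 0.142
ORGANIC_RATE = 0.028


def expected_conversions(visits: int, rate: float) -> float:
    """Expected number of conversions for a given visit count and rate."""
    return visits * rate


# How many times more likely an AI search visit is to convert.
conversion_lift = AI_SEARCH_RATE / ORGANIC_RATE

visits = 1_000  # hypothetical visit volume per channel
ai_conversions = expected_conversions(visits, AI_SEARCH_RATE)
organic_conversions = expected_conversions(visits, ORGANIC_RATE)

print(f"{conversion_lift:.1f}x lift; "
      f"{ai_conversions:.0f} vs {organic_conversions:.0f} conversions "
      f"per {visits} visits")
```

At these rates, an AI search visit converts roughly five times as often as an organic visit, so even modest AI referral volumes can matter to revenue.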

Conclusion

Companies move past traditional ranking metrics when they adopt a specialized AI marketing toolkit that measures AI search citations. Legacy SEO software cannot track conversational mentions or evaluate how language models perceive brand entities, so these companies need accurate data to understand their actual market presence across different generative platforms. Organizations that have this data will maintain an advantage as search engines continue to prioritize synthesized answers over static links, and they will rely on precise citation figures to secure brand recommendations and capture high-intent buyers. As a first step, audit the current AI share of voice and establish a baseline for the upcoming strategy with an SEO ranking checker that analyzes generative platforms.