Introduction

Generative artificial intelligence search systems have fundamentally altered how users discover information online. Traditional search engines indexed content and gave users lists of blue links to evaluate. Today, platforms synthesize data and generate direct answers that satisfy user queries immediately. This shift forces organizations to adapt their digital presence: across one portfolio of 64 sites, Google AI Overviews reduced organic clicks by 42%. Brands must structure their content for machine readability and secure external validation to maintain traffic. Keyword stuffing fails because large language models evaluate the credibility and context of the information they extract. Strong AI marketing strategies prioritize topical authority and proper formatting over legacy algorithmic manipulation. This guide details the specific tactics necessary to build citation authority and maintain brand visibility within modern generative search platforms.

Shift From Algorithmic Rankings to Citation Authority

Organizations must understand how search mechanisms have evolved to build this citation authority. Traditional search engines ranked web pages based on keyword density and backlink profiles. Artificial intelligence retrieval systems work differently: keyword metrics carry less weight because language models evaluate the context and credibility of the source material. Organizations therefore need to adjust their AI marketing strategies toward building citation authority. When search engines generate answers, they look for verifiable facts from established entities. Brand visibility now depends on how often a language model extracts and references specific company data.

Trust signals shape these machine-generated recommendations. Systems evaluate domain expertise and external validation to determine which sources provide the most accurate answers. Organizations build this authority when they publish proprietary research and earn mentions from respected publications. This validation gives artificial intelligence platforms greater confidence in citing a specific brand. Organizations that establish this authority capture highly qualified audiences.

According to a Microsoft Clarity study of publisher sites, artificial intelligence traffic converts at three times the rate of traditional channels combined. These high conversion rates occur because users receive direct answers that address their specific intent. Consequently, an effective AI growth strategy focuses on supplying language models with verifiable data. Companies that master this transition gain more predictable organic search performance.

AI Marketing Strategies for Data Generation

Companies secure this organic search certainty when they supply language models with new information. A services-first approach helps companies build campaigns that generate valuable proprietary data. Organizations must publish original findings rather than summarize existing information. This approach establishes credibility within artificial intelligence search systems. Language models do not just repeat information. They synthesize facts from primary sources. When companies conduct surveys or analyze industry metrics, they create unique data points that conversational interfaces readily extract. If a brand publishes exclusive industry statistics, extraction engines will cite that brand as the definitive source.

Organizations that implement generative engine optimization tactics layer this proprietary data throughout their digital properties. They structure their content by placing high-level summaries at the top and detailed methodology below. This information architecture helps algorithms verify the context of the data. Unique data points strengthen brand positioning because they force generative systems to reference the original creator. A recent Yext analysis shows that original research generates 4.31 times more citations from artificial intelligence platforms per URL than competitor listings.

Original content production requires efficient internal processes. Companies use marketing automation to gather customer insights and transform raw numbers into publishable reports. These automated systems streamline the research phase. The steady publication of original data builds genuine user trust. Readers and search systems learn to rely on the brand for accurate industry knowledge.

Platform-Specific Citation Behaviors

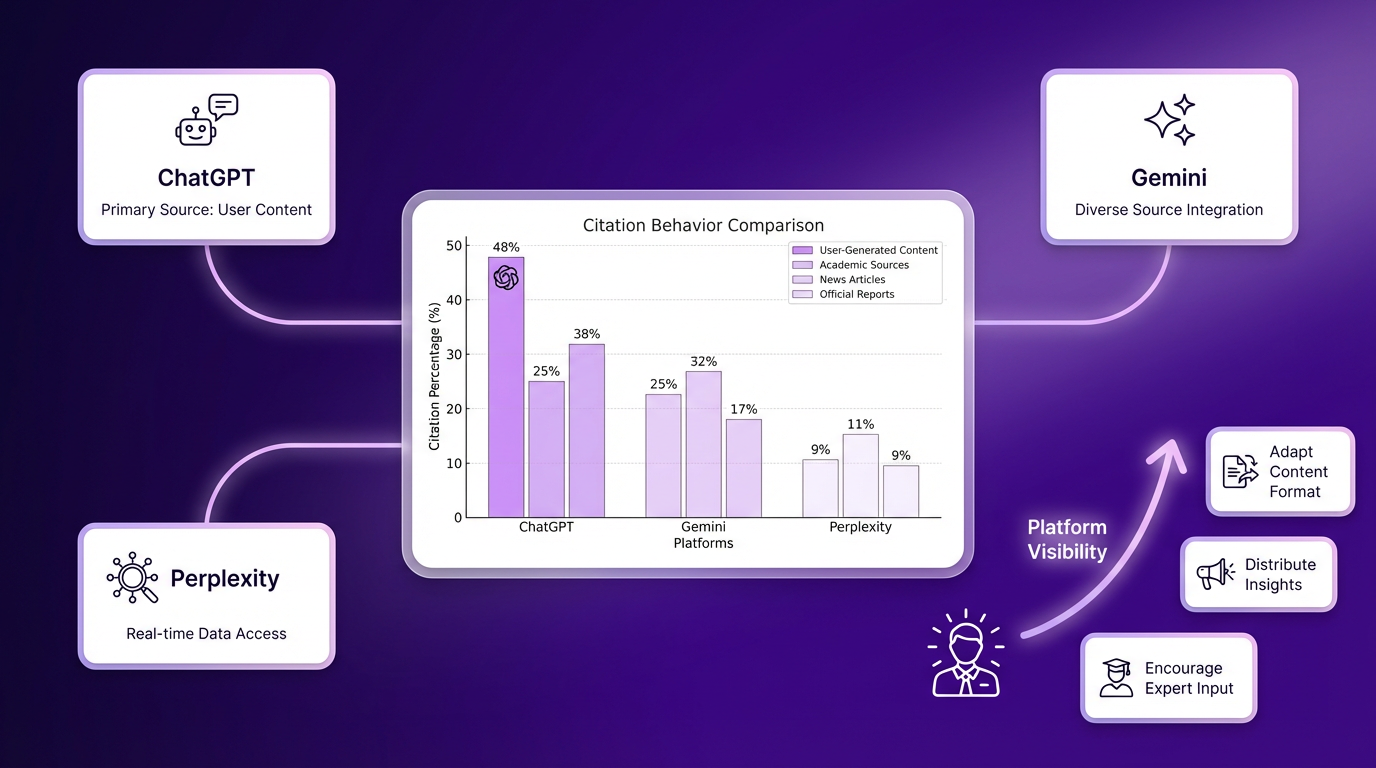

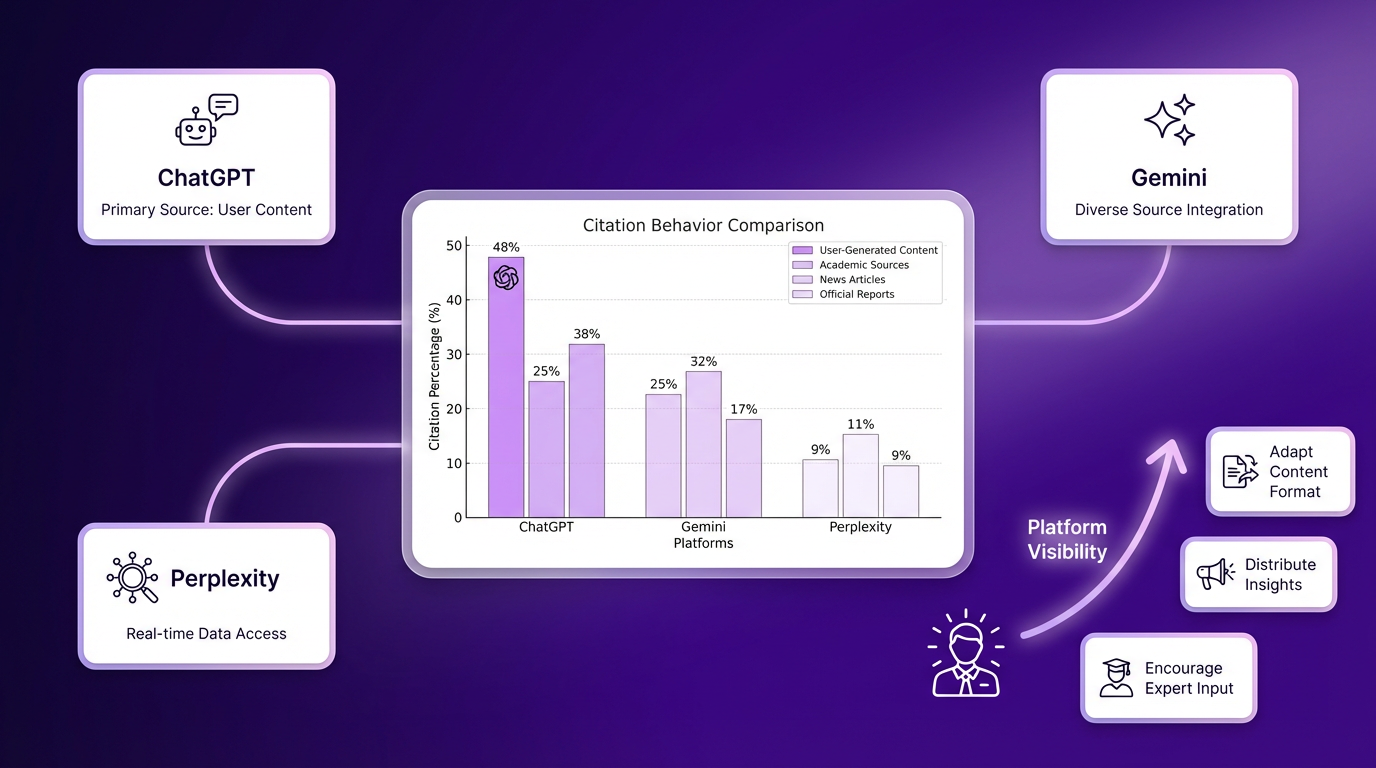

This reliance on brand accuracy varies across platforms. Different generative engines apply distinct logic when selecting their sources. An effective AI growth strategy accounts for these variations and matches content distribution to specific platform behaviors. ChatGPT frequently favors established media outlets and recognized institutional publishers. This platform prioritizes formal publications and rigorous editorial standards. In contrast, Perplexity relies on community validation to formulate its responses. It extracts answers from platforms where real people discuss their hands-on experiences.

A recent study of multiple generative systems revealed that Reddit ranks as the most-cited domain across ChatGPT, Gemini, and Perplexity. Furthermore, community and user-generated content platforms represent 48% of all citations in artificial intelligence search engines. Organizations achieve greater platform visibility when they distribute their insights across multiple channels. They adapt their content formats to satisfy these diverse retrieval systems. This adaptation requires companies to execute several distribution methods:

- Publish formal reports on corporate domains to satisfy authoritative engines.
- Share raw data points in relevant community forum discussions.
- Encourage subject matter experts to post insights on professional networks.

Organizations must execute these steps with precision to ensure their data appears regardless of which engine a user consults.

Content Structure for Machine Readability

Data visibility across these different engines relies on proper technical formatting. Language models require specific technical structures to process and extract digital content efficiently. Without clear formatting, even the most valuable insights remain invisible to generative systems. Proper formatting directly influences the frequency of machine citations. Algorithms parse web pages and identify distinct content blocks and semantic relationships. If a website lacks a logical hierarchy, the extraction engine moves on to a better-structured source.

Companies that implement modern search optimization strategies understand that machine readability dictates online visibility. Publishers must organize their information so language models can identify facts instantly. This technical approach gives organizations greater control over how artificial intelligence platforms interpret their messaging. Clear headings, concise paragraphs, and semantic markup tags guide algorithms through the text. When systems encounter well-organized data, they extract it with greater confidence. The language model does not have to guess the context or meaning of the information. Instead, it pulls the exact answer the user requested.

To make information architecture accessible to language models, companies must adopt specific formatting practices. The following subsections detail three essential tactics for optimizing digital content for machine extraction and improving overall visibility. These methods ensure that generative engines recognize, process, and cite organizational data consistently.

Deep Topical Clusters

Interconnected content clusters demonstrate substantial topical depth to language models. When a website publishes multiple related articles that link to one another, it signals thorough subject knowledge. This broad subject coverage consistently outperforms isolated keyword optimization. In the past, companies ranked individual pages for single search terms. Today, generative engines look for domains that cover a discipline thoroughly.

Because language models assess the relationships between different concepts, deep topical clusters provide the exact context these systems need. According to recent industry analysis, topical authority explains 17% of citation variance in artificial intelligence engines, compared with only 4% for traditional domain authority. These detailed content networks give brands a decisive advantage. The interconnected architecture proves that the organization possesses genuine expertise.
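One way to audit the interlinking described above is to check that a central article and its supporting articles link to each other. The sketch below is only an illustration: the function name `cluster_link_gaps`, the URL paths, and the idea of a single "pillar" page per cluster are assumptions, not something the cited analysis prescribes.

```python
def cluster_link_gaps(pillar, cluster_pages, links):
    """Return (source, target) pairs for internal links that are missing.

    `links` maps each URL to the set of internal URLs it links to.
    A cluster is treated as complete when the pillar links to every
    supporting page and every supporting page links back to the pillar.
    """
    gaps = []
    for page in cluster_pages:
        if page not in links.get(pillar, set()):
            gaps.append((pillar, page))
        if pillar not in links.get(page, set()):
            gaps.append((page, pillar))
    return gaps


# Hypothetical site structure for illustration only.
site_links = {
    "/guide": {"/guide/schema", "/guide/freshness"},
    "/guide/schema": {"/guide"},
    "/guide/freshness": set(),  # does not link back to the pillar
    "/guide/analytics": {"/guide"},  # pillar does not link to it
}
cluster = ["/guide/schema", "/guide/freshness", "/guide/analytics"]
missing = cluster_link_gaps("/guide", cluster, site_links)
for source, target in missing:
    print(f"{source} should link to {target}")
```

A report like this only covers link structure; it says nothing about whether the articles actually cover the subject in depth.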

Valid Schema Markup

Structured data translates human-readable text into machine-readable formats. Valid schema markup provides language models with an explicit map of a page’s contents. These schema elements categorize specific entities and verify relationships for extraction engines. When developers apply proper markup, the algorithm does not have to parse complex paragraphs to find the core facts.

The code delivers the exact data directly to the system. This technical formatting secures citations faster than an approach focused on traditional search rankings. Simple structural elements also play a major role in data extraction. Research on data formatting found that pages with data tables receive 2.5 times more citations than pages with only plain text. Structured information allows generative models to process comparisons and statistics without ambiguity.
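As a minimal sketch of what such markup can look like, the snippet below builds a JSON-LD block using the schema.org `Article` type. The helper name `build_article_jsonld`, the headline, the organization name, and the dates are all hypothetical placeholders; the right type and properties depend on the page.

```python
import json
from datetime import date


def build_article_jsonld(headline, org_name, published, modified):
    """Return a JSON-LD string describing an article with schema.org terms."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "author": {"@type": "Organization", "name": org_name},
    }
    return json.dumps(data, indent=2)


# Hypothetical values for illustration only.
markup = build_article_jsonld(
    "2025 Industry Benchmark Report",
    "Example Corp",
    date(2025, 1, 15),
    date(2025, 4, 1),
)
# JSON-LD is usually embedded in the page inside a script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from the same data that drives the page keeps the structured data and the visible text from drifting apart. It is still worth running the output through a schema validator before publishing.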

Standardized Output Formats

Concise answer blocks facilitate smooth extraction by generative models. Large blocks of unbroken text confuse algorithms and reduce the likelihood of citation. Writers must format their insights into short, distinct paragraphs that address specific questions. Marketing automation tools help content teams standardize these section lengths across large digital libraries.

These platforms identify excessively long paragraphs and prompt editors to condense them. Text blocks within specific limits satisfy algorithmic processing constraints and deliver immediate value to the end user. Industry testing indicates that 40- to 60-word paragraphs serve as the optimal extraction length for artificial intelligence systems. This targeted length provides enough detail to be definitive while remaining concise enough for direct quotation. Standardized formatting helps language models capture the idea cleanly.
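The length check described above is simple to approximate. The following sketch flags paragraphs that fall outside the 40- to 60-word range; the function name `flag_paragraphs` and the blank-line paragraph delimiter are assumptions, and real tooling would likely need to handle headings, lists, and markup.

```python
def flag_paragraphs(text, min_words=40, max_words=60):
    """Return (index, word_count) for paragraphs outside the target range."""
    # Paragraphs are assumed to be separated by blank lines.
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        count = len(paragraph.split())
        if count < min_words or count > max_words:
            flagged.append((index, count))
    return flagged


# Synthetic sample: one paragraph in range, one too long, one too short.
sample = "\n\n".join([
    " ".join(["word"] * 50),
    " ".join(["word"] * 120),
    " ".join(["word"] * 12),
])
print(flag_paragraphs(sample))  # → [(1, 120), (2, 12)]
```

The thresholds are parameters because the 40- to 60-word figure comes from industry testing and may not suit every content type.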

Thirty-Day Playbook Execution

Proper content formatting requires a systematic implementation plan. Organizations should follow a structured thirty-day timeline to implement generative engine optimization tactics. A systematic approach helps content teams transition toward machine-readable formats without disrupting current publishing schedules. Companies should select niche topics in which the brand possesses proprietary data and strong expertise. Because language models favor specialized knowledge over broad observations, organizations need to identify the specific questions their audiences ask.

Once organizations identify these topics, content creators can publish structured anchor articles. These detailed guides act as the foundation for the organization’s AI growth strategy. Writers should organize information logically because sequential heading structures correlate with a 2.8 times higher citation likelihood. Proper formatting gives artificial intelligence engines confidence in the material.
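A sequential heading structure can be checked mechanically. The sketch below assumes the article is published as HTML and treats "sequential" as meaning that no heading skips a level on the way down (for example, an h2 followed directly by an h4); both are assumptions rather than a definition from the cited figure. The names `HeadingCollector` and `skipped_levels` are hypothetical.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect the numeric level of each h1-h6 tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_levels(html):
    """Return (position, previous_level, level) where a level is skipped."""
    collector = HeadingCollector()
    collector.feed(html)
    problems = []
    previous = 0  # so a page that does not start at h1 is also reported
    for position, level in enumerate(collector.levels):
        if level > previous + 1:
            problems.append((position, previous, level))
        previous = level
    return problems


page = "<h1>Guide</h1><h2>Setup</h2><h4>Detail</h4><h2>Usage</h2>"
print(skipped_levels(page))  # → [(2, 2, 4)]
```

Moving back up the hierarchy (h4 to h2) is not reported, since closing a subsection is normal.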

Third-party validation reinforces content credibility and solidifies the brand’s position as a trusted source. Search platforms weigh external signals when evaluating data for accuracy. To build this authority, companies should follow a strict monthly schedule:

- Select three specific niche topics that align with proprietary company data.
- Publish detailed anchor articles and use clear semantic formatting and hierarchical headings.
- Distribute raw data points from the articles to industry forums and professional networks.
- Pitch the original research to relevant media outlets to earn external mentions.

These AI marketing strategies ensure that language models discover, verify, and cite the brand consistently.

Content Freshness for Visibility

Consistent citations require continuous updates after the initial execution phase. Data recency plays a critical role in securing generative citations from modern search platforms. Language models favor recently updated information over static legacy pages because users want current answers. If a platform provides outdated statistics, it loses user trust. Because artificial intelligence systems strive to deliver the most accurate responses, they actively seek fresh data sources.

Organizations build a reliable AI growth strategy by establishing consistent content refresh cycles. Companies cannot publish an article and abandon it. Algorithms recognize when a page remains dormant for years. Organizations should schedule quarterly reviews for all anchor articles to ensure that statistics and industry trends remain accurate. They should update data points, revise outdated claims, and add new insights to signal active maintenance.
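The quarterly review cycle can be supported by a small audit script. This sketch assumes a mapping of URL to last-updated date is available (for example, exported from a CMS) and treats a quarter as 90 days; the function name `pages_due_for_review`, the URLs, and the dates are hypothetical.

```python
from datetime import date, timedelta


def pages_due_for_review(last_updated, today, max_age_days=90):
    """Return (url, updated) pairs older than the cutoff, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [
        (url, updated)
        for url, updated in last_updated.items()
        if updated < cutoff
    ]
    return sorted(stale, key=lambda item: item[1])


# Hypothetical anchor articles and their last-updated dates.
anchor_articles = {
    "/guide-a": date(2025, 5, 10),
    "/guide-b": date(2024, 11, 2),
    "/guide-c": date(2025, 3, 1),
}
due = pages_due_for_review(anchor_articles, today=date(2025, 6, 30))
for url, updated in due:
    print(f"{url} was last updated on {updated.isoformat()}")
```

Passing `today` explicitly keeps the function deterministic and easy to test. A scheduled job would pass `date.today()`.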

This continuous updating process helps brands secure visibility across conversational interfaces. When companies maintain fresh content, they give search engines the confidence they need to cite their domains. Research shows that 83% of commercial query citations come from pages updated within a year. Outdated content simply disappears from machine-generated responses. Regular audits require dedicated resources, but the investment protects digital visibility. Organizations that treat content as a living asset capture more traffic than competitors that rely on aging libraries.

Analytics Infrastructure Transition

Organizations must measure how this living content captures traffic. Companies should transition their measurement frameworks from click-based metrics to citation-centric tracking. Traditional search reporting focused on organic clicks and keyword rankings. However, generative interfaces summarize information directly, reducing the absolute number of visitors who click through to websites. If organizations rely solely on legacy performance marketing indicators, they will not understand their true digital reach.

Companies use tools to evaluate generative visibility and track brand mentions within machine-generated responses. Analysts monitor how often language models reference the company and which specific data points they extract. Artificial intelligence citations correlate strongly with traditional visibility. Recent industry analysis showed that ChatGPT citations dropped 27.8%, paralleling a 26.7% organic traffic decline for affected sites. Analysts track these mentions to get a clear picture of brand authority in the current search environment.

Companies track referral revenue from conversational interfaces to measure the financial impact of their AI marketing strategies. Marketing automation platforms help teams attribute conversions to specific generative engines. Teams adapt their reporting dashboards to display verified leads generated by artificial intelligence citations alongside traditional channels. This updated infrastructure allows leadership to see the value of structuring content for machine readability. Organizations that adapt their analytics understand exactly how language models drive business growth.
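As a rough sketch of the attribution step, the snippet below groups conversions by the generative engine that referred the visit. The hostnames in `AI_REFERRERS` are assumptions about what each engine sends as a referrer and should be confirmed against real logs; the function names and event format are hypothetical as well.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against your own analytics data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer_url):
    """Map a referrer URL to an engine label, or 'other' if unrecognized."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host, "other")


def conversions_by_source(events):
    """Count converted visits per source from (referrer_url, converted) pairs."""
    totals = Counter()
    for referrer_url, converted in events:
        if converted:
            totals[classify_referrer(referrer_url)] += 1
    return dict(totals)


# Hypothetical visit log for illustration only.
visits = [
    ("https://chatgpt.com/", True),
    ("https://www.perplexity.ai/search?q=example", True),
    ("https://www.google.com/", True),
    ("https://chatgpt.com/c/1", False),
    ("", True),
]
report = conversions_by_source(visits)
print(report)
```

Referrer-based attribution undercounts, because some visits arrive without a referrer at all. Treat the totals as a lower bound.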

Conclusion

In summary, brand visibility in machine-generated responses requires clean content structure and demonstrable topical authority. Organizations must prioritize clear data formatting and external validation because traditional algorithmic manipulation no longer yields results. Thorough AI marketing strategies help language models recognize and extract company content accurately. Going forward, businesses that restructure their digital assets will improve visibility in conversational interfaces. The next step is to audit current content libraries with AI visibility tracking tools to verify machine readability. Shifting measurement frameworks toward citation tracking secures an advantage as search continues to change.