Introduction

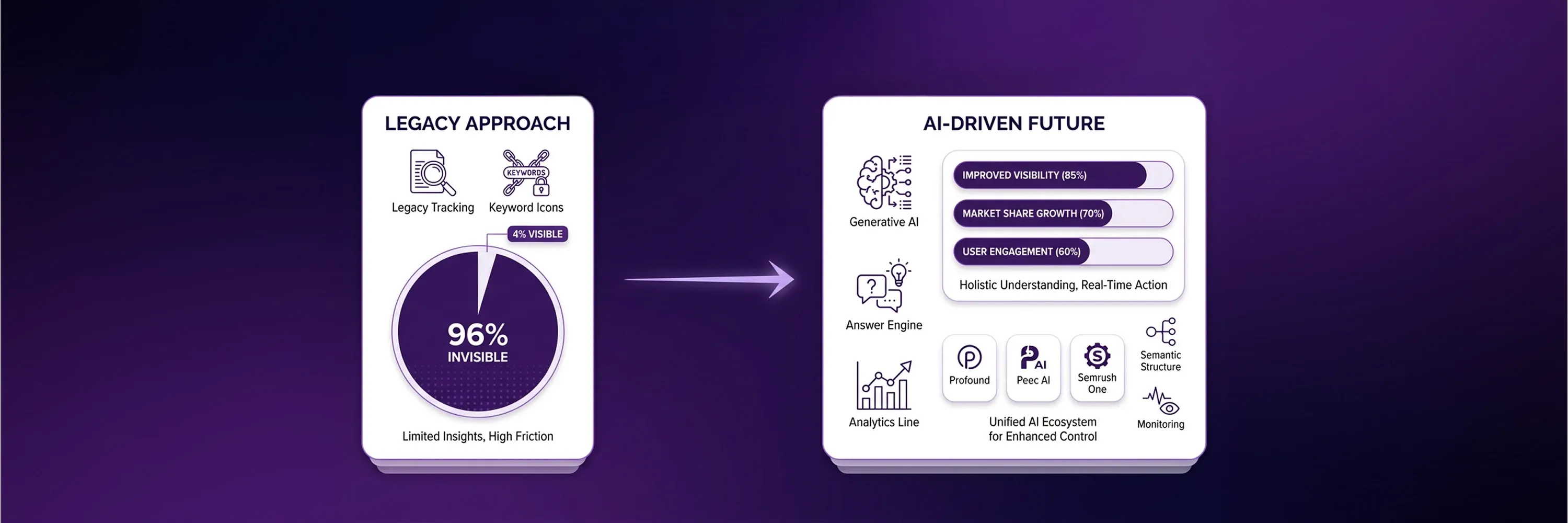

Generative artificial intelligence engines have fundamentally altered how people access information online. Historically, search engines functioned as directories and required users to evaluate multiple blue links to find answers. Today, platforms like ChatGPT and Gemini synthesize data across the web and provide direct, conversational responses. Because of this structural change, traditional ranking metrics no longer guarantee visibility. The digital landscape now presents an environment where large language models dictate brand discovery.

Legacy software configurations can leave brands invisible in these AI-generated answers. A recent analysis of major artificial intelligence systems found that AI referral traffic currently represents 1.08% of total website traffic, yet this channel shows substantial growth potential. Businesses that fail to adapt their measurement and optimization approaches forfeit the opportunity to capture high-intent users who prefer direct answers over traditional search results.

This new reality requires reevaluating existing SEO tools and understanding how machine learning models process and extract website data.

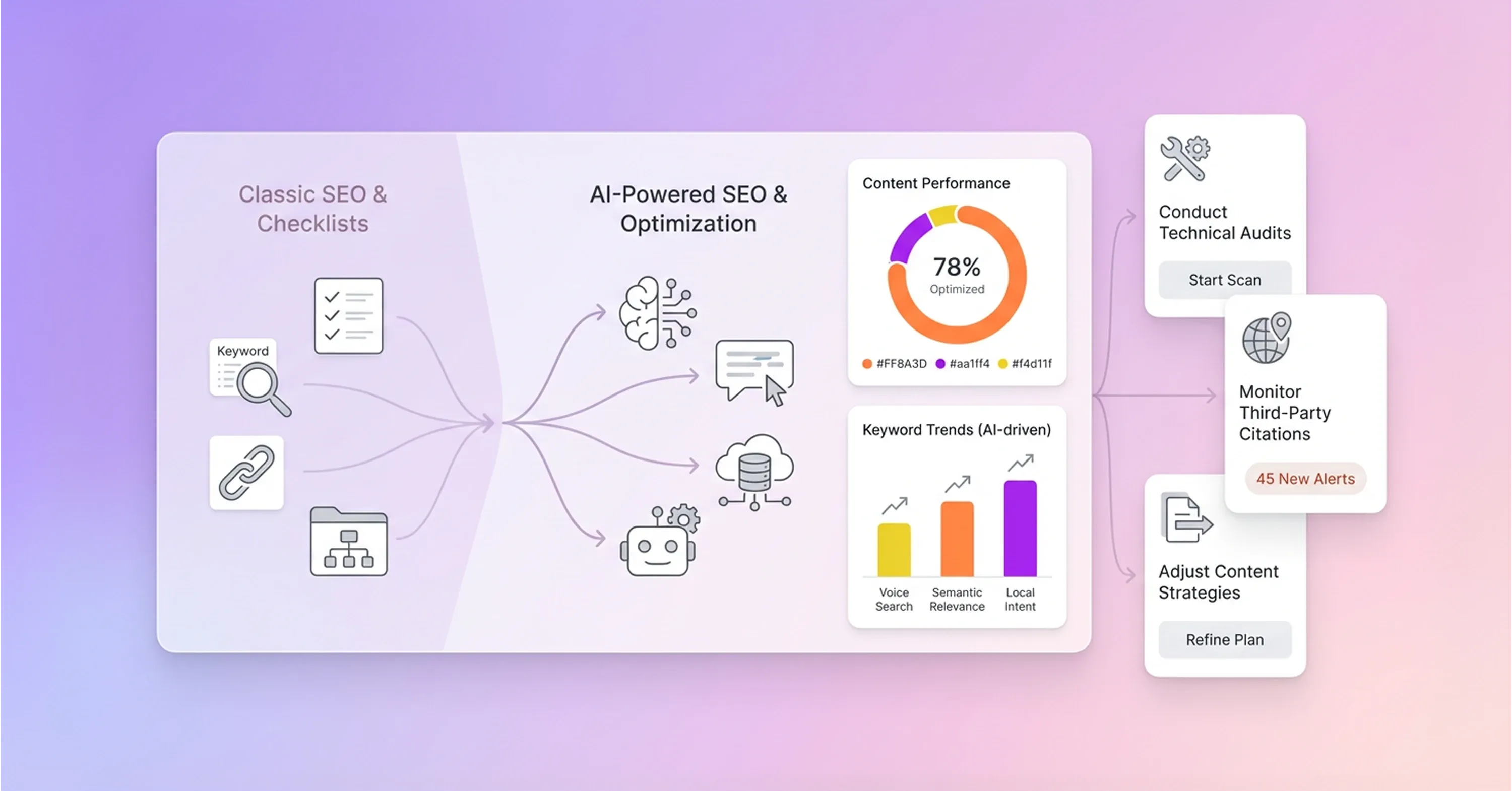

Shift to AI Tool Workflows

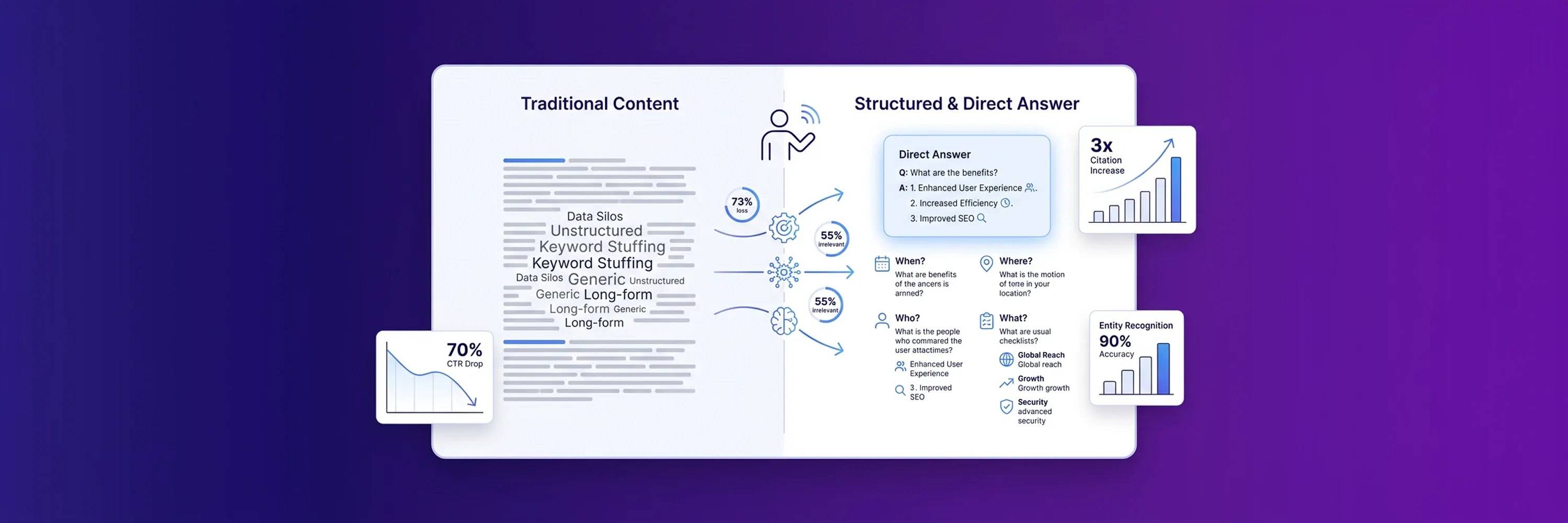

Large language models compile answers from multiple sources instead of sending users through a list of blue links. This fundamental difference creates a gap for websites that still rely on legacy metrics. Old measurement tactics do not capture accurate data in the new environment, so different methods are required to measure digital visibility. According to Conductor, AI Overviews appear in 25.11% of Google searches across its analyzed dataset. This figure suggests that users increasingly expect direct answers rather than comparing multiple search results. Consequently, websites that rely solely on legacy metrics lose traffic.

Existing SEO tools need to be adapted for generative platforms and AI-driven search environments. Modern metrics move beyond keyword positions and measure how often models extract and cite brand entities. Reconfigured software stacks help maintain market share in this new environment. Websites often experience traffic drops when they ignore how conversational interfaces process data. Modern SEO best practices focus on LLM readability rather than exact-match phrases. Auditing platforms can evaluate content structure and entity relationships. This strategic shift helps artificial intelligence engines process and cite the information more reliably.

Technical Audits for LLM Extractability

Large language models rely on clear structure to interpret and reuse website content accurately. If a website lacks proper structure, models must work harder to decode the text. Distinct and digestible paragraphs allow algorithms to capture context without confusion. Foundational auditing software helps validate schema markup and improves machine readability. For example, the Screaming Frog SEO Spider identifies broken links and duplicate content. Such SEO tools can also crawl for the specific data points that large language models prioritize.

Because generative models rely on organized data, proper heading structures matter more than traditional keyword density. Clear headings give models the signposts they need to navigate complex topics. Several technical elements, which on-page SEO tools can verify, help websites remain extractable:

- Nested heading structures create logical content hierarchies.
- Valid schema markup categorizes entities and their relationships.
- Clean code removes unnecessary scripts and improves load times.
- Semantic HTML tags define the purpose of different page sections.
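The first item in the list, nested heading structure, lends itself to a simple automated check. The following is a minimal sketch using only Python's standard library; the function name `skipped_levels` and the rule it applies (flag any heading that jumps more than one level deeper than the previous one) are assumptions for illustration, not the behavior of any specific auditing tool.

```python
from html.parser import HTMLParser


class HeadingAudit(HTMLParser):
    """Collects h1-h6 levels in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # Heading tags are exactly "h1" through "h6".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_levels(html):
    """Return (previous, current) pairs where a heading skips a level."""
    parser = HeadingAudit()
    parser.feed(html)
    return [
        (prev, cur)
        for prev, cur in zip(parser.levels, parser.levels[1:])
        if cur > prev + 1
    ]


# Hypothetical page fragment: the h4 follows an h2 with no h3 in between.
sample = "<h1>Guide</h1><h2>Setup</h2><h4>Details</h4><h2>Usage</h2>"
print(skipped_levels(sample))  # [(2, 4)]
```

An empty result means every heading nests at most one level below its predecessor, which is one reasonable reading of a "logical content hierarchy."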

Structured data directly influences how often models cite a specific brand. Research shows that pages with thorough schema markup are 36% more likely to appear in artificial intelligence citations.
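As an illustration of what schema markup looks like in practice, the sketch below builds a schema.org `Organization` object and wraps it in a JSON-LD script tag. The brand name, URLs, and the helper name `organization_jsonld` are hypothetical; a real page would likely need more properties than the three shown here.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs links the entity to its profiles on other sites.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


# Hypothetical brand and profile URL, for illustration only.
markup = organization_jsonld(
    "Example Co",
    "https://www.example.com",
    ["https://www.linkedin.com/company/example-co"],
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The `sameAs` property is what ties the on-site entity to its external profiles, which is the kind of explicit relationship the paragraph above describes.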

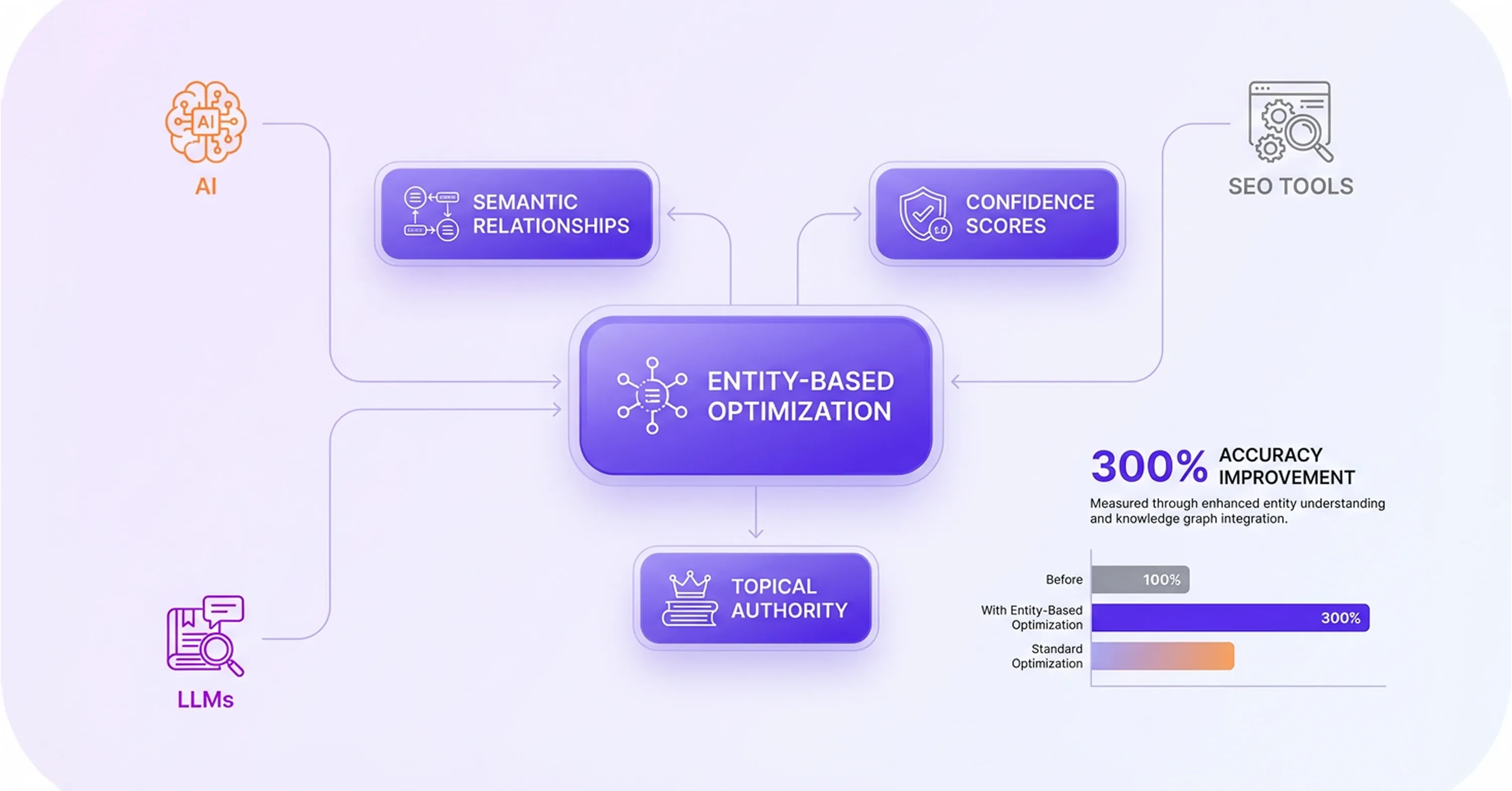

Entity Optimization and Semantic Tools

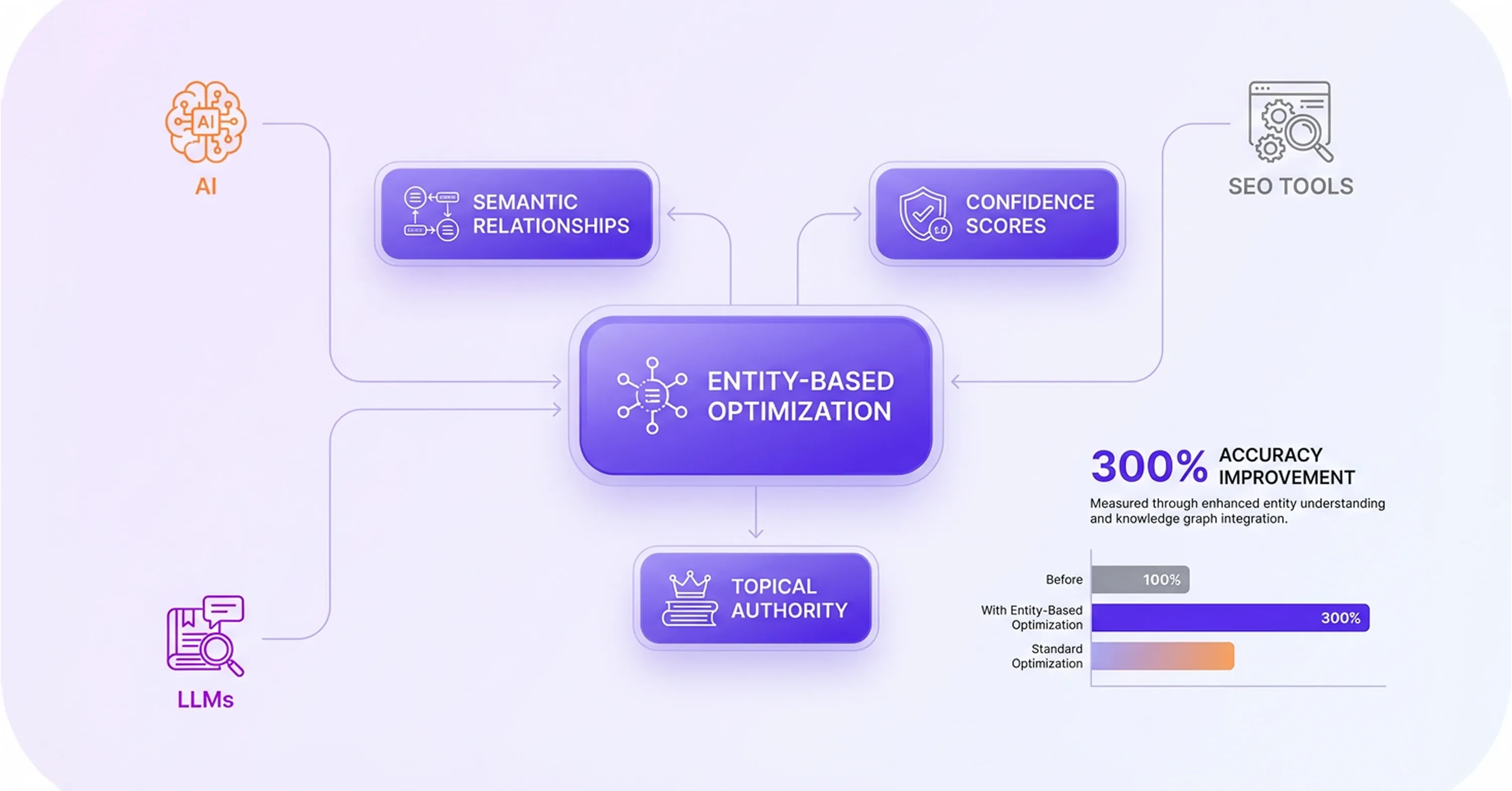

After these technical adjustments make content accessible to conversational interfaces, semantic analysis platforms focus on entity-based optimization rather than exact match phrases. These platforms map relationships between different subjects to build a thorough knowledge network. Specialized SEO software tools identify the precise entities that define an industry. Interconnected concepts establish topical authority and secure citations in generative engines. Isolated keywords fail to provide the deep context that models require. Modern search optimization strategies prioritize original research and thorough topic coverage.

Entities act as the foundational building blocks that artificial intelligence uses to verify facts. Research demonstrates that large language models backed by knowledge graphs achieve 300% higher accuracy than approaches built on unstructured data. This finding indicates that models prefer sources that clearly define relationships between concepts. Platforms like InLinks help structure content around these semantic relationships. These platforms analyze the strength of entity connections and recommend improvements for better machine comprehension.

Models construct their answers based on confidence scores assigned to different information sources. Consistent association with relevant industry concepts increases these confidence scores. Connected ideas signal expertise to systems like ChatGPT and Gemini, which scan the web for authoritative sources that answer user queries thoroughly. Semantic optimization helps algorithms recognize a brand as a trusted entity within its specific niche, improving both machine comprehension and citation potential.
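One rough proxy for how closely entities are connected on a page is how often they appear together in the same paragraph. The sketch below counts those co-occurrences. It is an assumption-laden illustration, not how InLinks or any other platform works: the entity list is supplied by hand, and matching is naive case-insensitive substring search.

```python
from collections import Counter
from itertools import combinations


def entity_cooccurrence(paragraphs, entities):
    """Count how often each pair of entities shares a paragraph."""
    counts = Counter()
    for paragraph in paragraphs:
        text = paragraph.lower()
        # Sort so each pair is always stored in the same order.
        present = sorted(e for e in entities if e.lower() in text)
        counts.update(combinations(present, 2))
    return counts


# Hypothetical content and entity list.
paragraphs = [
    "Schema markup helps a knowledge graph link related pages.",
    "A knowledge graph relies on schema markup and earns citations.",
    "Original research attracts citations.",
]
entities = ["schema markup", "knowledge graph", "citations"]

for pair, count in entity_cooccurrence(paragraphs, entities).most_common():
    print(pair, count)
```

Pairs that never co-occur do not appear in the result, which can point to concepts a page mentions only in isolation.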

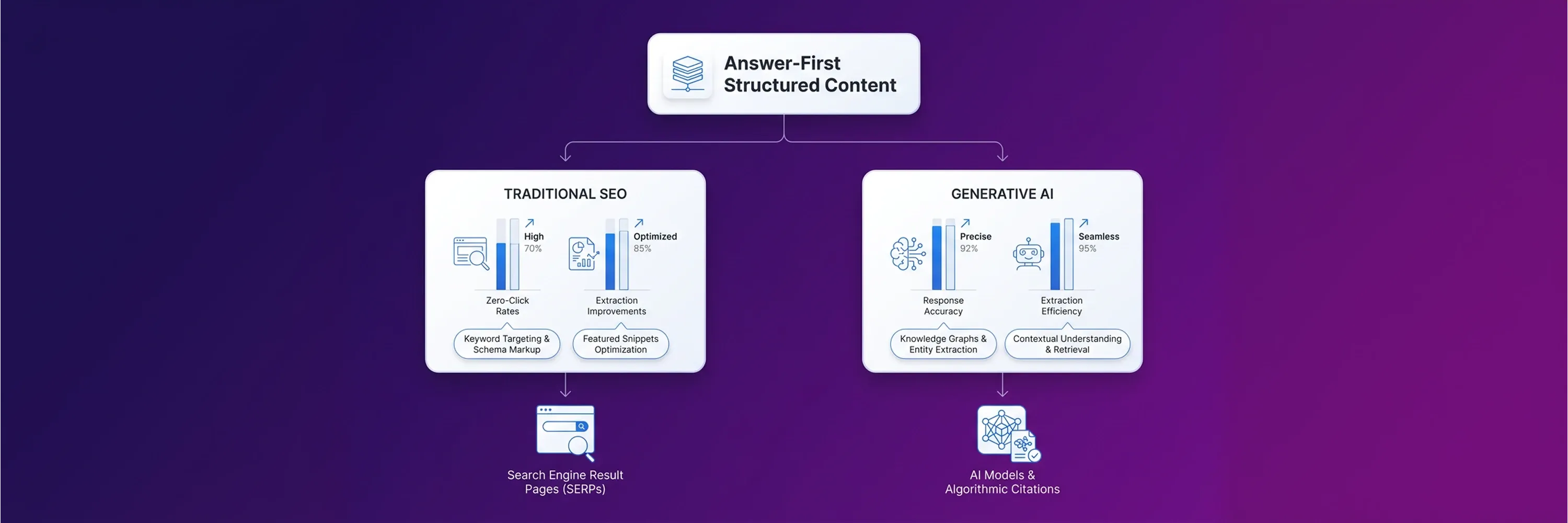

Content Optimization for AI Answers

Historically, authors have focused on achieving conventional search rankings rather than direct extraction. However, this approach is ineffective in the modern digital environment. If content creators want to secure citations in conversational interfaces, they must format their text for direct extraction. This shift requires authors to abandon keyword stuffing and prioritize factual density. Modern on-page SEO tools guide writers to structure their articles around direct answers rather than long-winded introductions. Large language models (LLMs) process text sequentially, so authors should place important facts at the top of an article to ensure better machine comprehension.

Writers adapt their writing processes to satisfy these new algorithmic preferences and maintain visibility in artificial intelligence search results. On-page SEO tools analyze top-ranking entities and suggest semantic structures that build topical authority. This guidance helps creators eliminate filler text and focus on delivering immediate value. The software acts as an editor that enforces machine-friendly formatting rules across the entire website. Data shows that users of specific platforms increase their visibility when they adjust their formatting habits. Recent internal data reveals that Surfer SEO users grew their search and artificial intelligence visibility by an average of 423% in early 2026. This growth happens because the software forces writers to organize ideas logically and concisely. The following subsections detail specific content structures that make information highly extractable.

Content Structure for Direct Extraction

Clear paragraph and heading structure makes information easier for language models to extract. Content creators achieve this when they place their core arguments and data points at the beginning of their articles. This approach also aligns with how human readers prefer to consume information. When writers use specialized software, they learn to avoid burying the lede beneath lengthy background information. Algorithms prioritize the top sections of web pages when they construct their answers. For instance, researcher Kevin Indig analyzed 1.2 million ChatGPT interactions and found that 44.2% of citations originate from the first 30% of an article. Authors can increase their chances of being referenced as a source by placing their most valuable insights in the introduction and initial body paragraphs.
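A writer can check how front-loaded a draft is by measuring what share of its numeric data points fall within the first 30% of the text. The sketch below is one simple way to do that, assuming that "data point" means any number or percentage and that position is measured in characters; both choices, and the function name `front_loaded_share`, are illustrative assumptions.

```python
import re


def front_loaded_share(text, cutoff=0.30):
    """Share of numeric data points that start within the first `cutoff` of the text."""
    positions = [m.start() for m in re.finditer(r"\d+(?:\.\d+)?%?", text)]
    if not positions:
        return 0.0
    limit = len(text) * cutoff
    early = sum(1 for pos in positions if pos < limit)
    return early / len(positions)


# Hypothetical draft: one statistic up front, one buried near the end.
draft = "42% of users agree. " + "x" * 100 + " Later, 7 more."
print(front_loaded_share(draft))  # 0.5
```

A low score suggests that key figures sit deep in the article, where the cited research indicates they are less likely to be picked up.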

Platform Configuration for Writers

Once writers have placed their most valuable insights in the initial body paragraphs, software platforms can help them apply definitive language and concise formatting throughout their drafts. Modern platforms evaluate the assertiveness of the text and flag tentative phrases that confuse machine learning models. Words like "might" or "possibly" weaken the factual integrity of a sentence, which causes algorithms to look elsewhere for more confident sources. These evaluation platforms help authors develop stronger, more authoritative voices. Proper platform configuration trains content teams to state facts plainly. Recent research found that definitive language makes citations nearly twice as likely in artificial intelligence overviews. Algorithms tend to trust statements that leave no room for ambiguity. Publishers keep their web pages visible when they rely on software to enforce this linguistic standard.
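The flagging step described above can be approximated with a short word-list check. This is a minimal sketch, not the logic of any commercial platform: only "might" and "possibly" come from the text, while the other terms in `TENTATIVE` and the sentence-splitting rule are assumptions.

```python
import re

# "might" and "possibly" come from the article; the rest are assumed additions.
TENTATIVE = ("might", "possibly", "perhaps", "maybe")


def flag_tentative(text, terms=TENTATIVE):
    """Return (sentence, matched_terms) for each sentence containing a tentative term."""
    pattern = re.compile(
        r"\b(" + "|".join(map(re.escape, terms)) + r")\b", re.IGNORECASE
    )
    # Naive split on whitespace that follows sentence-ending punctuation.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        hits = [m.group(1).lower() for m in pattern.finditer(sentence)]
        if hits:
            flagged.append((sentence, hits))
    return flagged


draft = "Schema markup might help. It improves parsing. This is possibly useful."
for sentence, hits in flag_tentative(draft):
    print(hits, "->", sentence)
```

A word list like this will also flag legitimate uses, so its output is better treated as a prompt for review than as an automatic rewrite rule.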

Algorithm Readability Improvements

Beyond definitive language, writers increase the likelihood of model extraction when they incorporate specific data points and structured elements. Modern content marketing strategies emphasize empirical evidence because algorithms use statistics to verify the accuracy of their generated answers. When writers include numbers, percentages, and expert quotes in their paragraphs, they give artificial intelligence engines concrete material to reference. This shift toward data-driven writing rewards publishers who conduct original research. Evidence indicates that machine comprehension improves when text includes verifiable facts. Industry researchers demonstrated that adding statistics to content lifted artificial intelligence visibility by 37%, while including direct quotations improved visibility by 30%. Content creators should embed these empirical elements naturally within their sentences. This practice helps conversational interfaces recognize the website as a primary, authoritative source of information.
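To see how much empirical material a draft contains, a rough density measure can count percentages and quoted passages per 100 words. The sketch below assumes that a "statistic" is any percentage and that a "quote" is any text between straight double quotes; both definitions, and the function name `evidence_density`, are simplifications chosen for illustration.

```python
import re


def evidence_density(text):
    """Count percentages and quoted passages, normalized per 100 words."""
    words = len(text.split())
    stats = len(re.findall(r"\d+(?:\.\d+)?%", text))
    quotes = len(re.findall(r'"[^"]+"', text))
    per_100 = (stats + quotes) * 100 / words if words else 0.0
    return {
        "words": words,
        "statistics": stats,
        "quotes": quotes,
        "per_100_words": per_100,
    }


# Hypothetical paragraph with two percentages and one direct quote.
sample = 'Visibility rose 37% after the change. "It worked," said the editor. Quotes added 30%.'
print(evidence_density(sample))
```

Comparing this figure across drafts gives a quick, if crude, signal of whether a revision added verifiable material or only more words.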

Third-Party Citation Measurement

Even when conversational interfaces recognize a website as an authoritative source, the majority of brand mentions in artificial intelligence responses come from external pages rather than the official company website. Large language models prefer to pull information from community discussions, forums, and independent review platforms to provide unbiased answers. Because of this behavior, off-site mention monitoring serves as the new equivalent of backlink analysis. Publishers approach this work carefully because it reveals how consumers talk about their products in public spaces. Companies rely on SEO software tools to map where artificial intelligence engines find the sources they cite. For example, researchers discovered that Reddit accounts for 46.7% of citations in Perplexity's top ten results, a significantly larger share than in ChatGPT. Similarly, industry analysts revealed that Gartner, G2, and Capterra account for 88% of citations among review platforms in Google's artificial intelligence overviews. Marketing teams then monitor discussions on social platforms and review sites with specialized SEO tools. Companies follow a specific process to track these external signals accurately:

- Publishers identify the top three independent review platforms relevant to their specific industry.
- They configure mention tracking software to monitor the brand name across these identified platforms.
- They extract sentiment data from these mentions to understand public perception.
- They compare the frequency of these third-party mentions against direct competitors.

This step-by-step process helps companies capture the data points that feed generative algorithms. Brands maintain a clear picture of their digital footprint when they measure these external citations systematically.
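The counting and comparison steps of this process can be sketched in a few lines. The example below assumes mentions have already been collected as `(platform, text)` pairs; the brand names, platforms, and sample texts are hypothetical, matching is naive substring search, and the sentiment step is left out because it would require a separate model or service.

```python
from collections import Counter


def mention_counts(mentions, brands):
    """Count mentions of each brand per platform.

    `mentions` is an iterable of (platform, text) pairs.
    """
    counts = {brand: Counter() for brand in brands}
    for platform, text in mentions:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand][platform] += 1
    return counts


# Hypothetical mentions gathered from three review and discussion platforms.
mentions = [
    ("reddit", "I switched from Acme to Globex last year."),
    ("g2", "Acme has solid reporting."),
    ("reddit", "Globex support was slow."),
    ("capterra", "Neither tool fit our team."),
]

counts = mention_counts(mentions, ["Acme", "Globex"])
for brand, per_platform in counts.items():
    print(brand, dict(per_platform), "total:", sum(per_platform.values()))
```

Keeping the per-platform breakdown, rather than only a total, shows where a competitor is discussed more often, which is the comparison the final step calls for.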

ROI Measurement for AI Search

While brands measure their external citations, specialized visibility trackers monitor citation frequency and competitor placement across major generative engines. These platforms close the critical gap in measuring Answer Engine Optimization (AEO) success and provide clear performance indicators. Historically, companies struggled to calculate their return on investment (ROI) because traditional analytics software could not track conversational interfaces. Companies are now abandoning outdated reporting dashboards and adopting systems that specifically analyze large language model outputs. Modern SEO tools simulate user queries and record how often a brand appears in the generated responses. When publishers integrate these trackers into their search engine optimization workflows, they gain clear visibility into their market share. The data these platforms provide justifies the budget spent on semantic restructuring and entity relationship building. For example, enterprise tracking platform Profound reported that clients experience a 25% to 40% lift in artificial intelligence share-of-voice within 60 days of implementation.

SEO software tools also highlight the volatility of these new search environments. Because algorithms update continuously, brand visibility can fluctuate widely from month to month. Recent measurements indicate that citation drift ranges from 40% to 60% monthly across major artificial intelligence platforms. Companies monitor this volatility to understand their market position. Consistent measurement allows companies to adjust their content strategies rapidly and defend their digital territory against competitors.
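The two metrics discussed here, share-of-voice and citation drift, can be illustrated with simple ratios. The definitions below are assumptions made for this sketch, since the article does not specify how trackers compute them: share-of-voice is taken as the fraction of recorded responses that mention the brand, and drift as the fraction of last period's cited sources that no longer appear this period.

```python
def share_of_voice(responses, brand):
    """Fraction of recorded responses that mention the brand (assumed definition)."""
    if not responses:
        return 0.0
    hits = sum(1 for response in responses if brand.lower() in response.lower())
    return hits / len(responses)


def citation_drift(previous, current):
    """Fraction of previously cited sources that dropped out (assumed definition)."""
    previous, current = set(previous), set(current)
    if not previous:
        return 0.0
    return len(previous - current) / len(previous)


# Hypothetical responses recorded from simulated queries.
responses = [
    "Top picks include Acme and Globex.",
    "Globex is a popular option.",
    "Consider Acme for small teams.",
    "Initech leads this category.",
]
print(share_of_voice(responses, "Acme"))  # 0.5

# Hypothetical cited domains in two consecutive months.
last_month = ["a.com", "b.com", "c.com", "d.com", "e.com"]
this_month = ["a.com", "b.com", "c.com", "x.com"]
print(citation_drift(last_month, this_month))  # 0.4
```

Tracking both numbers on the same query set each month makes it possible to tell a genuine loss of visibility from ordinary churn in cited sources.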

Conclusion

Brands maintain visibility in AI-driven search by aligning technical structure, content clarity, and authority signals. Traditional ranking metrics will continue to evolve, and structured data and third-party validation will become the standard for AI discoverability. As generative models become the primary method for online research, companies that prioritize machine readability will gain market share and establish authority. Auditing existing SEO tools ensures that they measure entity authority and external citations instead of keyword positions. Adapting SEO workflows to entity strength, citation frequency, and extractability helps brands compete across search environments.