Introduction

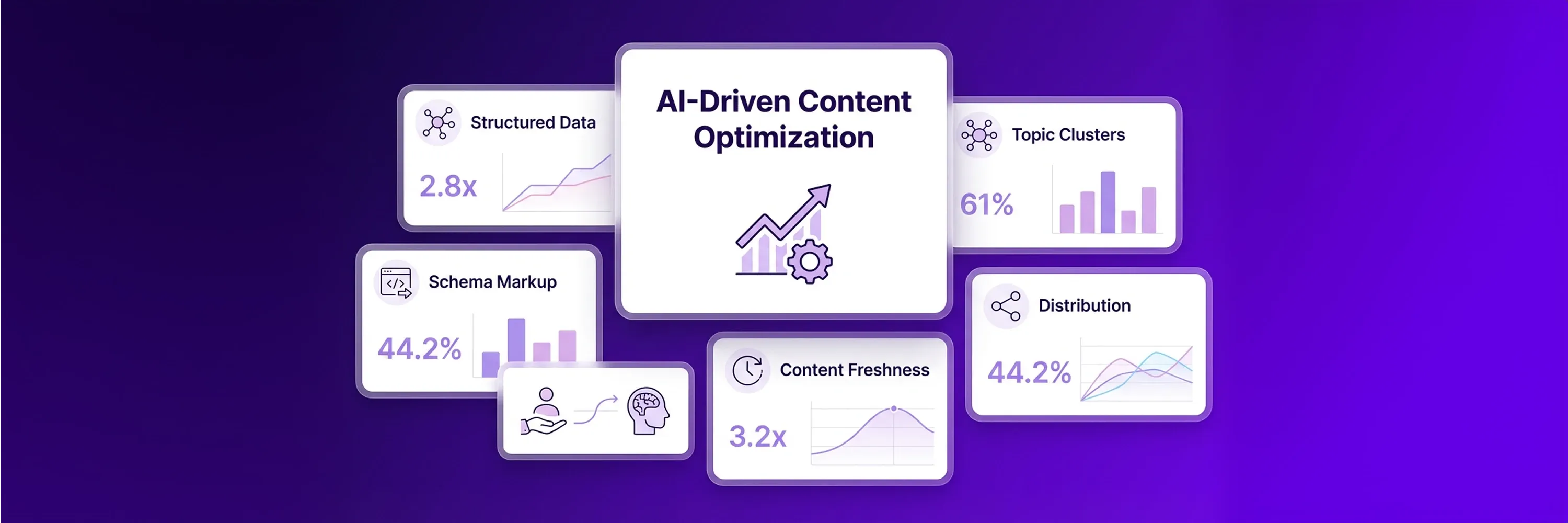

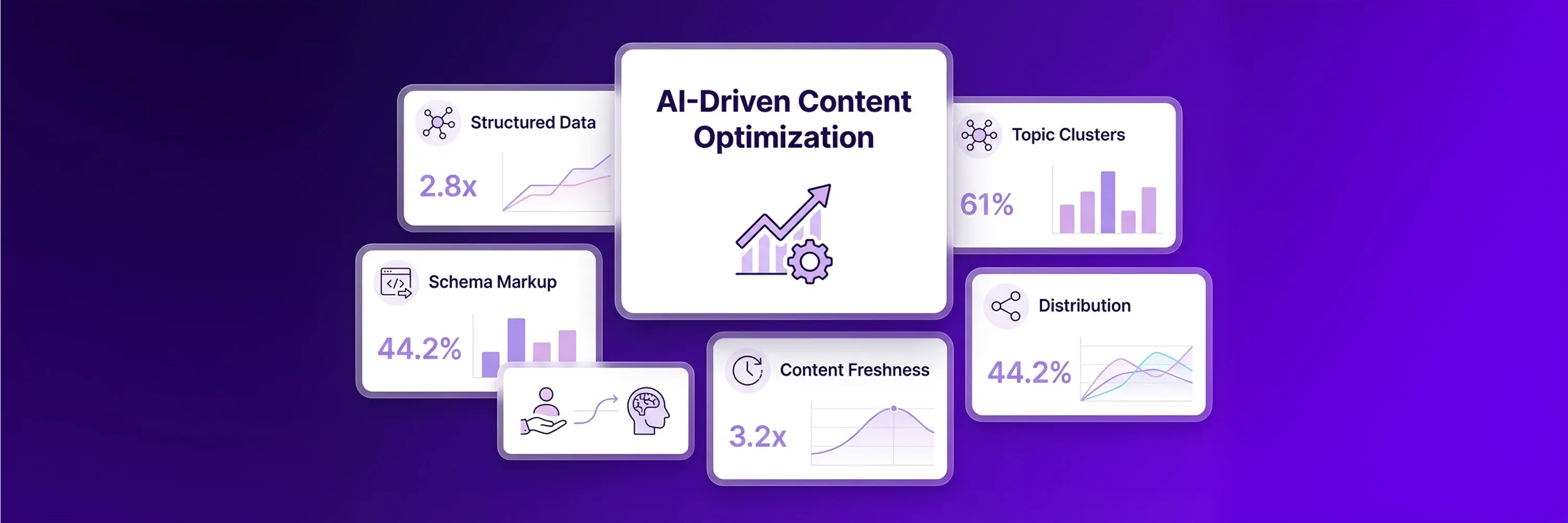

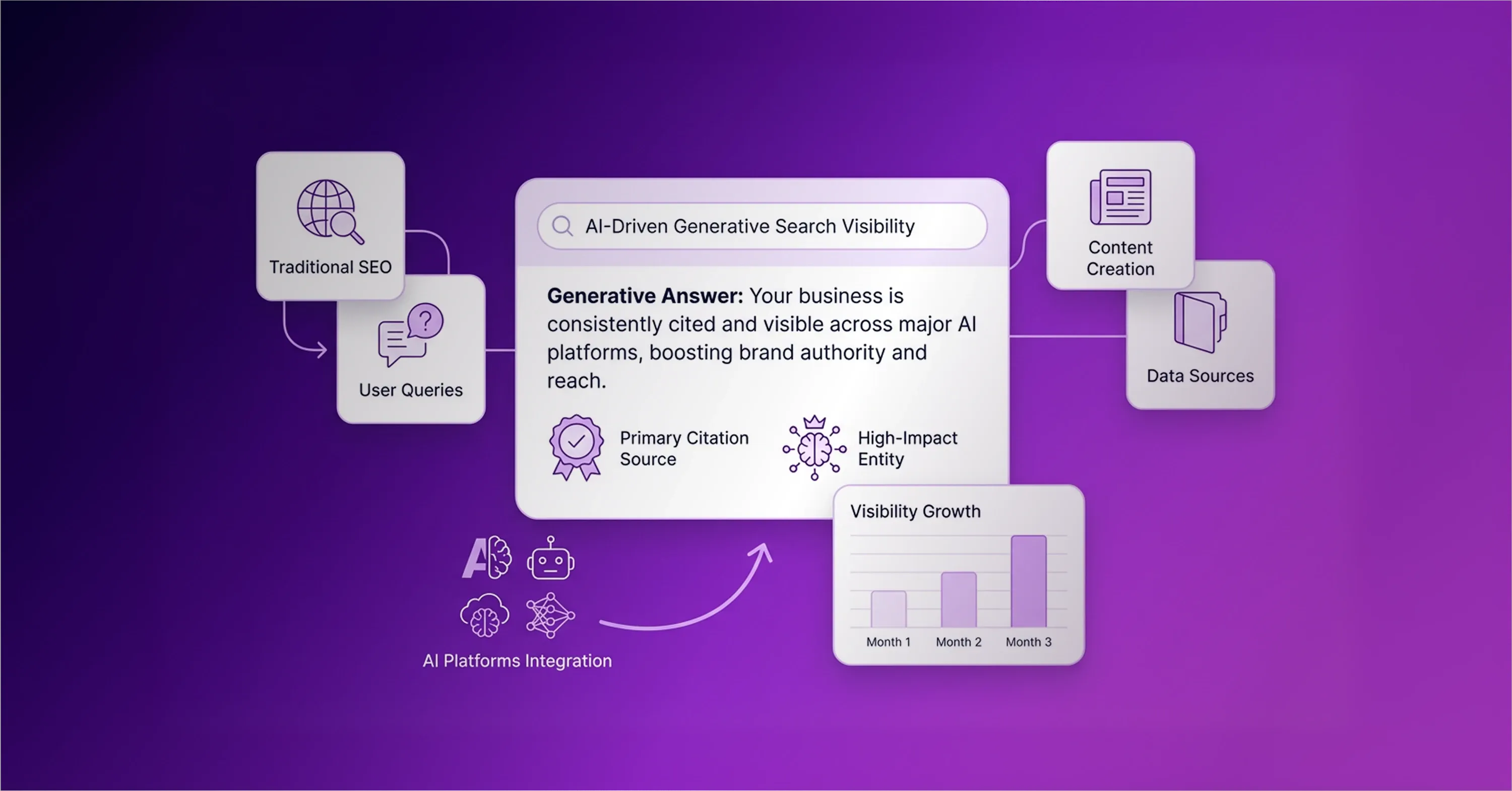

Search algorithms historically indexed content and returned directories of links. Today, generative artificial intelligence systems process data from across the web and answer queries directly. This structural shift requires publishers to optimize for AI model extraction if they want to maintain and scale digital visibility. Traditional keyword density fails because large language models evaluate the clarity, structure, and specificity of the information they extract. Organic clicks on queries that feature Google AI Overviews have fallen by 61% since mid-2024. Organizations navigate this transition by adopting specific, AI-friendly content structures alongside standard optimization efforts. They maintain modern search visibility by creating authoritative content and distributing it across trusted platforms. Publishers implement content generation with AI successfully when they integrate structured data, bulleted lists, and precise definitions into their workflows.

Shift From Traditional Search To AI Citations

The transition from standard search engine results to generative answers changes how websites approach visibility. For decades, websites relied on keyword density to climb traditional search rankings. They peppered exact-match phrases throughout their articles and built large backlink profiles. Now, large language models synthesize information differently. They read paragraphs for context and ignore repeated phrases. This shift means traditional search engine results pages and generative answers reward different content structures.

Data confirms this division. Rankings and artificial intelligence citations operate as separate outcomes, and success in both areas requires different optimization strategies. Professionals once believed that high search placement meant high visibility. Today, a top spot in traditional search does not secure a mention in a generative response. A recent industry analysis shows that only 38% of pages that appear in AI Overviews also rank in Google's top 10 for the same query.
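A team can measure this split on its own pages. The sketch below is only an illustration: the function name and the two input sets are hypothetical, and it assumes you already have one list of URLs cited in generative answers and one list of URLs ranking in the top 10 for the same queries.

```python
def overlap_share(cited: set, top_ranked: set) -> float:
    """Share of AI-cited pages that also appear in the top-ranked set."""
    if not cited:
        # No cited pages means there is nothing to compare.
        return 0.0
    return len(cited & top_ranked) / len(cited)
```

A low share suggests that ranking work and citation work need separate tracking.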

Companies recover visibility by using automated software to reformat their existing articles. They strip away filler words and replace them with factual statements. This structural adjustment helps extraction models parse the text reliably. Proper content generation with AI focuses on building machine-readable data blocks instead of generic text. Once writers understand this shift toward algorithmic extraction, they adjust how they format their long-form assets.

Bridge Gap Between Human And Machine Readability

Long-form articles require a balance between human-readable depth and machine-readable structure. Human readers enjoy storytelling, historical context, and smooth narratives that build understanding slowly. Artificial intelligence models struggle to extract facts from dense paragraphs. These models scan documents to find concrete answers to user queries. If an extraction algorithm encounters a wall of text, it may abandon the page and evaluate a more structured source.

Writers solve this problem by designing web pages specifically for algorithmic extraction. They place the most important definitions at the top of the page because extraction models prioritize early information. Research indicates that the first 30% of a page's text accounts for 44.2% of all LLM citations. Professionals use automated content creation to reorganize their existing articles for these models. An SEO checker for ChatGPT then evaluates how well extraction algorithms can process the reorganized text. Writers bridge the readability gap by applying precise formatting rules:

- Start sections with direct definitions instead of lengthy introductions.
- Break complex concepts into factual statements.
- Format questions and answers as distinct text blocks.
- Remove lengthy transitions that dilute factual density.
- Bold key terminology to signal importance to parsing algorithms.

Text with these elements creates a solid foundation for a broader organizational framework.
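One of these rules is easy to check automatically: whether a key definition appears early in the page. The following is a minimal sketch, not a tool the article describes. The function names are hypothetical, and the 30% window simply reuses the figure quoted above as a default.

```python
from typing import Optional


def first_position(text: str, phrase: str) -> Optional[float]:
    """Relative position (0.0 to 1.0) of the first match, or None if absent."""
    if not text:
        return None
    index = text.lower().find(phrase.lower())
    if index == -1:
        return None
    return index / len(text)


def in_leading_window(text: str, phrase: str, window: float = 0.30) -> bool:
    """True when the phrase first appears within the leading share of the text."""
    position = first_position(text, phrase)
    return position is not None and position <= window
```

Running `in_leading_window(page_text, "schema markup")` on each important term gives a quick list of definitions that sit too far down the page.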

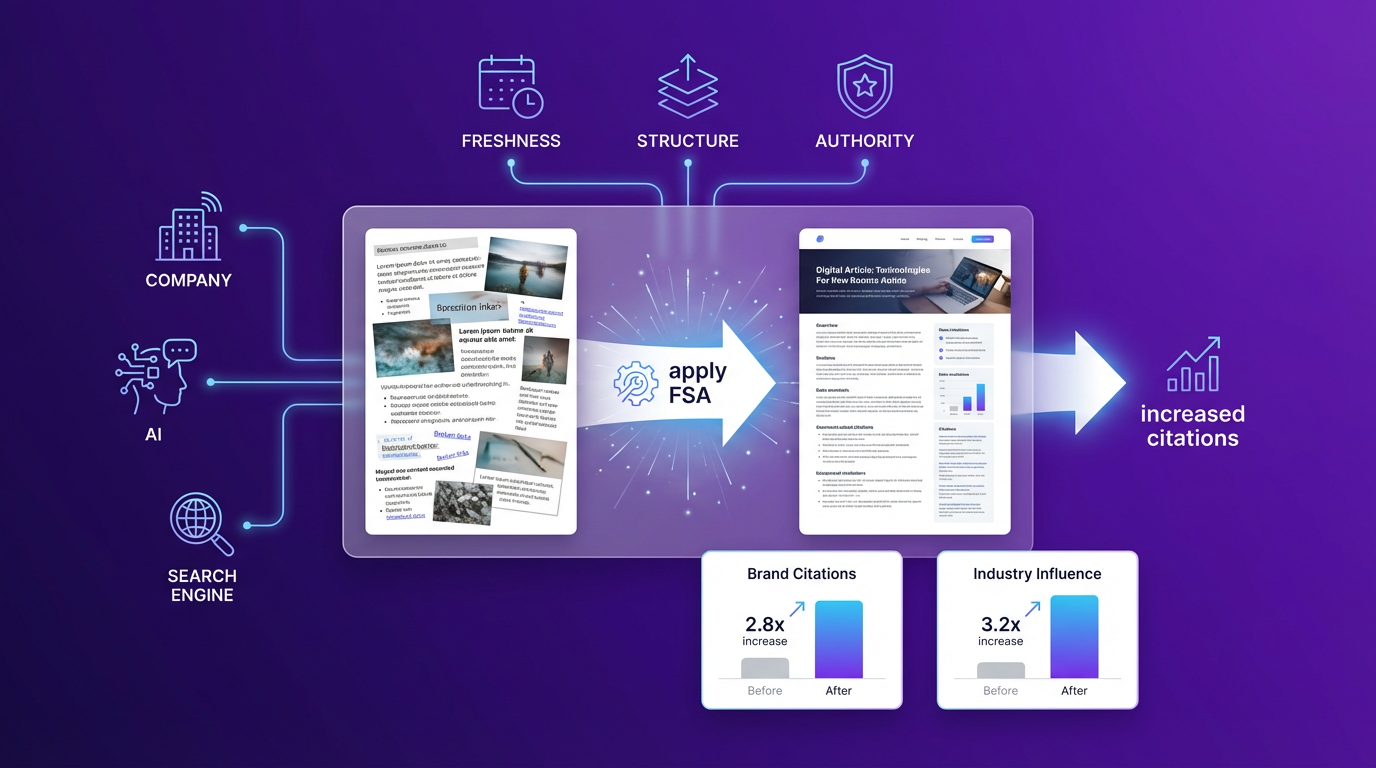

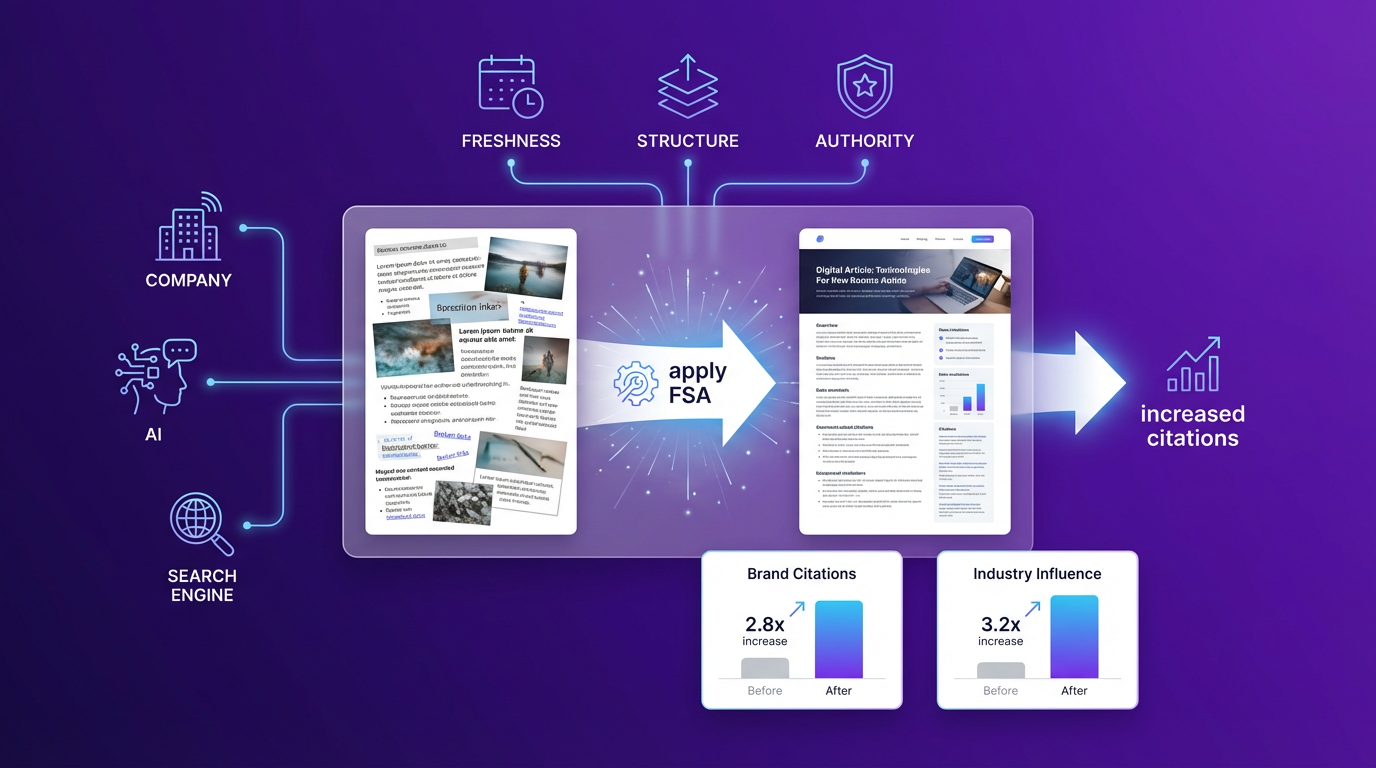

Restructure Assets With FSA Framework

The Freshness, Structure, and Authority (FSA) framework offers a systematic approach to formatting digital assets for machine extraction. Companies hold large volumes of human-generated content in their archives. However, generative engines ignore this established information if its format prevents easy parsing. This framework addresses that visibility problem. Teams apply this methodology to transform older articles into organized citation blocks. They evaluate their top-performing pages and restructure the text into clearly defined segments that answer specific user questions.

When teams implement this framework, pages with sequential headings and rich schema markup achieve 2.8x higher citation rates in generative answers. Content generation with AI accelerates this restructuring process. Teams feed their proprietary research into specialized SEO optimization tools for LLMs, which process the text into reliable data points. These tools preserve the original human expertise while upgrading formatting to meet strict algorithmic requirements. Businesses use artificial intelligence to adapt existing knowledge without creating entirely new drafts. The three core pillars of this framework help businesses earn generative citations.

Prioritize Content Freshness

Search engines prefer recently updated articles because language models seek current information to answer user queries. A five-year-old article loses value for extraction algorithms, even if the underlying concepts remain accurate. Artificial intelligence systems evaluate timestamps to determine relevance. Creators who maintain aggressive update schedules secure more visibility in generative responses. A recent data analysis reveals that content published within the last six months receives 3.2x more artificial intelligence citations than older pages. Companies use automated content creation to accelerate these update cycles. They review legacy posts, add recent statistics, and revise outdated terminology. This continuous refresh cycle signals active maintenance to search algorithms. When a model detects frequent updates, it increases trust in the source and cites it more often. Once the information is current, the algorithm evaluates how the page organizes that data.
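A refresh schedule can start from a simple age check. The sketch below assumes a hypothetical mapping of URLs to last-updated dates and approximates "six months" as 183 days. Both are illustrative choices, not values prescribed by any search engine.

```python
from datetime import date, timedelta

# Assumption: "six months" is approximated as 183 days.
SIX_MONTHS = timedelta(days=183)


def stale_pages(pages: dict, today: date, max_age: timedelta = SIX_MONTHS) -> list:
    """Return URLs whose last update is older than max_age, oldest first."""
    stale = [
        (updated, url)
        for url, updated in pages.items()
        if today - updated > max_age
    ]
    # Sorting the (date, url) tuples puts the oldest page first.
    return [url for _, url in sorted(stale)]
```

The oldest pages come first, so editors can work through the list in priority order.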

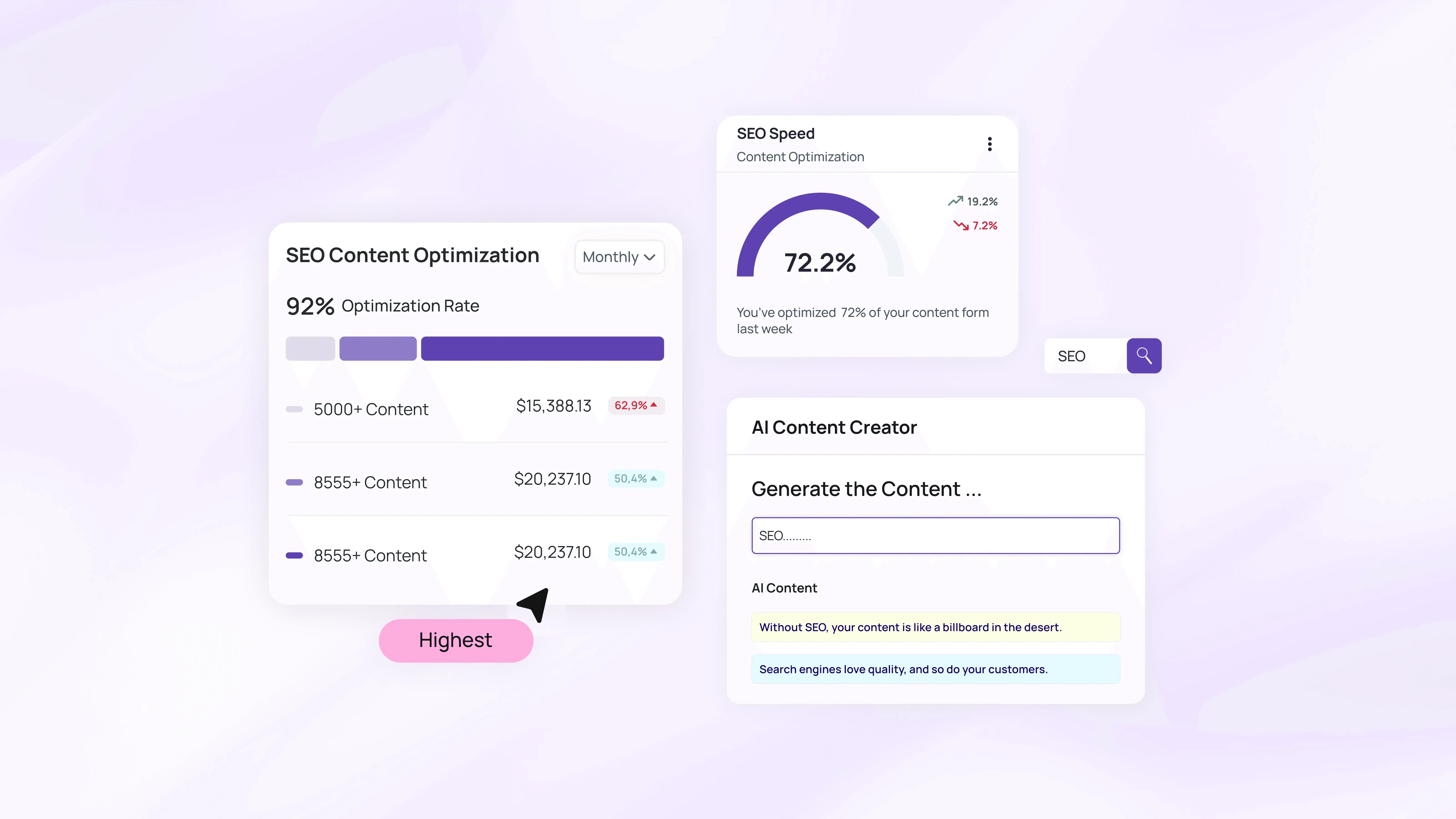

Optimize Structure With AI Content Tools

Extraction models rely on visible layout and underlying markup to identify factual answers within long web pages. A paragraph that answers a question directly provides more value to an algorithm than a wandering narrative. Writers improve machine readability by formatting text into strict question-and-answer blocks. These dedicated blocks help the algorithm isolate the specific sentence it needs. Markup operates behind the scenes to guide the extraction process. According to recent testing, Frequently Asked Questions (FAQ) schema, published as schema.org FAQPage markup, provides the most effective structure to increase citation probability and extraction rates. Professionals deploy this markup to label accurate information clearly and categorize complex topics. The algorithm reads these structural labels and pulls the corresponding text into generative responses. After structuring the page properly, writers can demonstrate their overall expertise on the subject.
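As an illustration of FAQ markup, the sketch below builds schema.org `FAQPage` JSON-LD from question-and-answer pairs using only the Python standard library. The function name and the sample pairs are hypothetical. The `FAQPage`, `Question`, `Answer`, `mainEntity`, and `acceptedAnswer` terms come from the schema.org vocabulary.

```python
import json


def faq_jsonld(pairs: list) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # ensure_ascii=False keeps non-ASCII characters readable in the output.
    return json.dumps(data, indent=2, ensure_ascii=False)
```

The resulting string is typically embedded in the page inside a `<script type="application/ld+json">` element, and the visible question-and-answer text on the page should match it.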

Establish Topic Authority

Generative search systems measure a website’s overall expertise before citing its individual pages. A single well-written article cannot overcome a weak domain reputation. Search algorithms evaluate the entire site to verify that the creator understands the industry. Writers signal this expertise by maintaining a dense concentration of verifiable facts across multiple related articles. They focus on building complete topic clusters instead of publishing scattered opinions. Industry research shows that domain authority remains the single strongest predictor of artificial intelligence citations, with a SHAP value of 0.63 in recent studies. Editors rely on AI content tools to map these topic clusters systematically. These applications analyze content databases and identify missing information. When writers fill these gaps with valid data, extraction models recognize the domain as a primary source. This authority supports long-term visibility, while software platforms help format human expertise for extraction.
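Gap analysis of this kind reduces to a set comparison. The sketch below assumes a hypothetical plan that maps each topic cluster to the subtopics it should cover, plus a set of subtopics that already have published articles. Real tools would derive both from a content database.

```python
def cluster_gaps(planned: dict, published: set) -> dict:
    """Map each cluster to its planned subtopics that have no published article."""
    gaps = {}
    for cluster, subtopics in planned.items():
        missing = sorted(set(subtopics) - published)
        if missing:
            # Only clusters with at least one missing subtopic are reported.
            gaps[cluster] = missing
    return gaps
```

Clusters that are fully covered are omitted from the result, so the output is a direct to-do list for writers.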

Optimize Content Generation With AI For Human Expertise

Software platforms are better at formatting data than at replacing human knowledge. Algorithms working alone produce low-value articles that fail to earn trust from readers and search engines. Generative models synthesize existing information, but they cannot conduct original research or form authentic opinions. Writers therefore reject generic mass-produced text and instead use automated content creation to structure proprietary research and unique insights into machine-readable blocks. This targeted approach separates valuable human expertise from repetitive web copy. A recent search engine study reveals that human-written content ranks in position 1 eight times more often than purely machine-generated text.

To secure these top positions, writers first supply original thoughts and fresh data points to the software. Effective content generation with AI requires the technology to act strictly as a formatting assistant. The algorithm aligns those original thoughts with schema requirements and extraction rules. This balance helps maintain content quality and makes expert insights accessible to large language models. The software handles structural formatting so human writers can focus on unique industry perspectives. Once writers have optimized these internal assets, they push their formatted knowledge into external communities.

Distribute Knowledge Across High-Trust Platforms

Machine learning models extract information from external community networks just as heavily as they scan proprietary websites. Company blogs do not guarantee that a generative engine will find and cite the information. Organizations build an integrated strategy for artificial intelligence visibility to push their core content into external networks. They use AI content tools to rewrite long-form articles into platform-specific posts for industry forums and professional social networks. These software applications automate the adaptation process and save hours of manual editing time.

External platforms possess high domain authority and heavily influence how language models learn about specific topics. When multiple external communities discuss the same verified data, the extraction algorithm treats that information as a validated fact rather than just a brand opinion. Data confirms this reliance on community platforms. Analysis of artificial intelligence responses shows that Reddit accounts for 40.2% of all citations across major generative engines. Furthermore, LinkedIn serves as the primary citation source for business-to-business professional content and technical queries. Content creators adapt formatted data blocks into conversational Reddit threads and structured LinkedIn articles. This widespread distribution trains language models to associate the source with specific industry answers. This distribution network requires a system for keeping all assets current.

Implement Hybrid Human-AI Audit Workflow

A systematic review process transforms outdated articles into fresh resources that receive citations from both traditional search algorithms and generative engines. Older pages often regain relevance when authors reformat them. Writers use AI-assisted tooling to organize these audits. Recent industry surveys report that 64% of professionals use human-led, AI-assisted workflows for their production processes. This hybrid approach combines human subject knowledge with software processing speed. Content generation with AI streamlines the revision phase, and human editors verify the accuracy of the newly formatted information. A specific sequence updates digital assets and captures lost search traffic:

- Writers identify web pages that lost organic traffic over the past twelve months.
- They extract core concepts and feed them into automated content creation platforms.
- Software prompts reformat dense paragraphs into strict question-and-answer blocks.
- Editors review the generated blocks to verify factual accuracy and maintain brand voice.
- Editors publish the revised assets with updated schema markup and current publication dates.

This sequence keeps historical knowledge accessible. This structured workflow helps businesses maintain search visibility as technologies continue to evolve.
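The first step of the sequence above, finding pages that lost organic traffic, can be sketched as follows. The input format, the function name, and the 20% threshold are all assumptions for illustration. In practice the click counts would come from an analytics export.

```python
def pages_losing_traffic(traffic: dict, min_drop: float = 0.2) -> list:
    """Rank pages by fractional traffic loss between two twelve-month periods.

    traffic maps URL -> (clicks in the prior period, clicks in the latest period).
    Returns (url, drop) pairs with drop >= min_drop, largest drop first.
    """
    results = []
    for url, (before, after) in traffic.items():
        if before <= 0:
            # Pages with no earlier traffic have no baseline to compare against.
            continue
        drop = (before - after) / before
        if drop >= min_drop:
            results.append((url, drop))
    return sorted(results, key=lambda item: item[1], reverse=True)
```

The ranked list gives editors a starting queue. The later steps still depend on human review for accuracy and brand voice.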

Conclusion

Generative search algorithms reward the distribution of authoritative content across trusted platforms. Artificial intelligence search engines prioritize synthesized direct answers over traditional ranked web pages. Content generation with AI requires experts to format proprietary human knowledge into machine-readable citation blocks instead of generic text. A generative engine optimization strategy incorporates proper schema markup and semantic relationships to sustain this visibility over time. A complete audit of top-performing long-form assets helps teams restructure content into highly extractable formats and capture a share of emerging AI citations.