Introduction

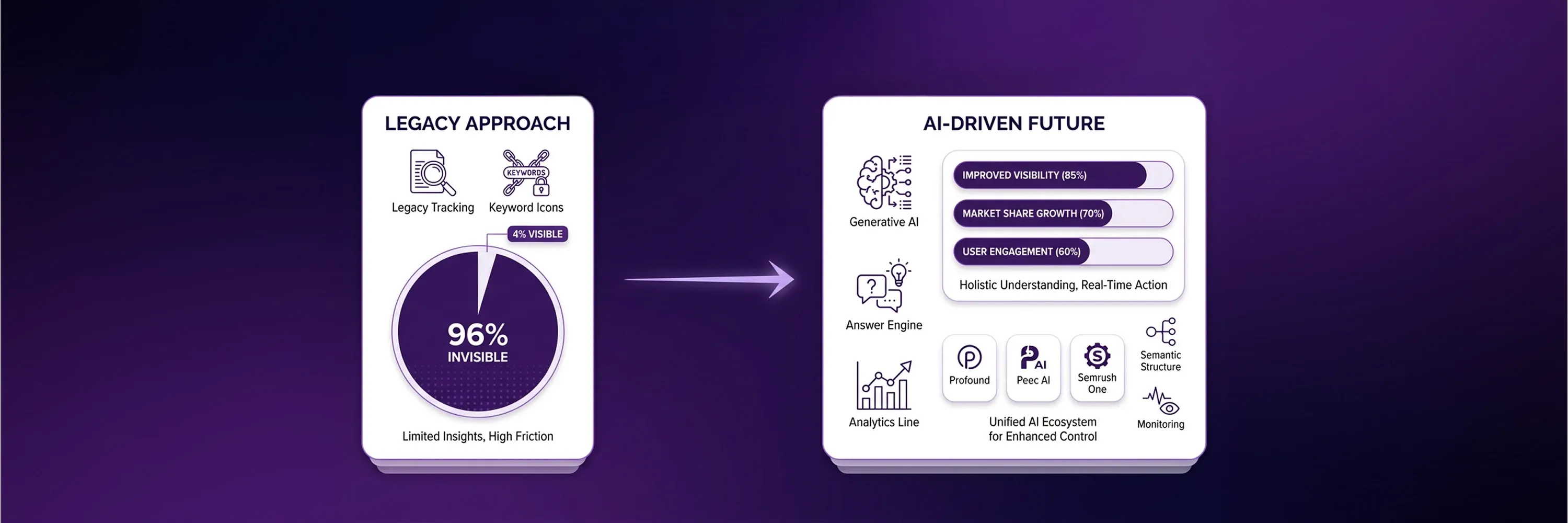

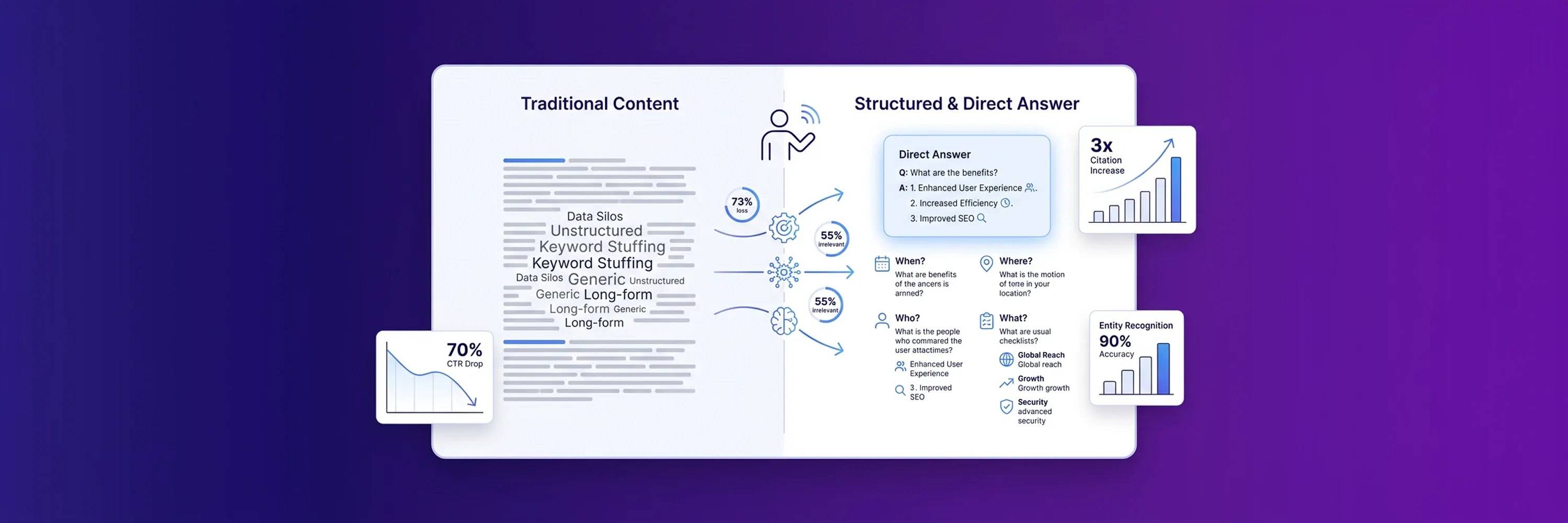

The search environment has shifted from predictable keyword rankings to AI-synthesized responses. Traditional search engines gave users a straightforward list of links, but generative engines now answer queries directly, often without requiring a visit to an external website. This shift makes traditional position tracking obsolete because users find the information they need on the results page itself. According to a September 2025 Seer Interactive study, organic click-through rates for queries with AI Overviews dropped by 61%, from 1.76% to 0.61%. As organic traffic declines, businesses struggle to measure their actual market presence with legacy analytics.

This zero-click reality requires companies to adopt a specialized mechanism that monitors brand citations and Share of Model within generative engines. Companies no longer chase a single top spot on a static results page. Instead, they must understand how frequently and how favorably large language models cite their domains. Measuring visibility across probabilistic systems demands new metrics, a different tracking architecture, and specialized workflows.

Evolution Of Measurement

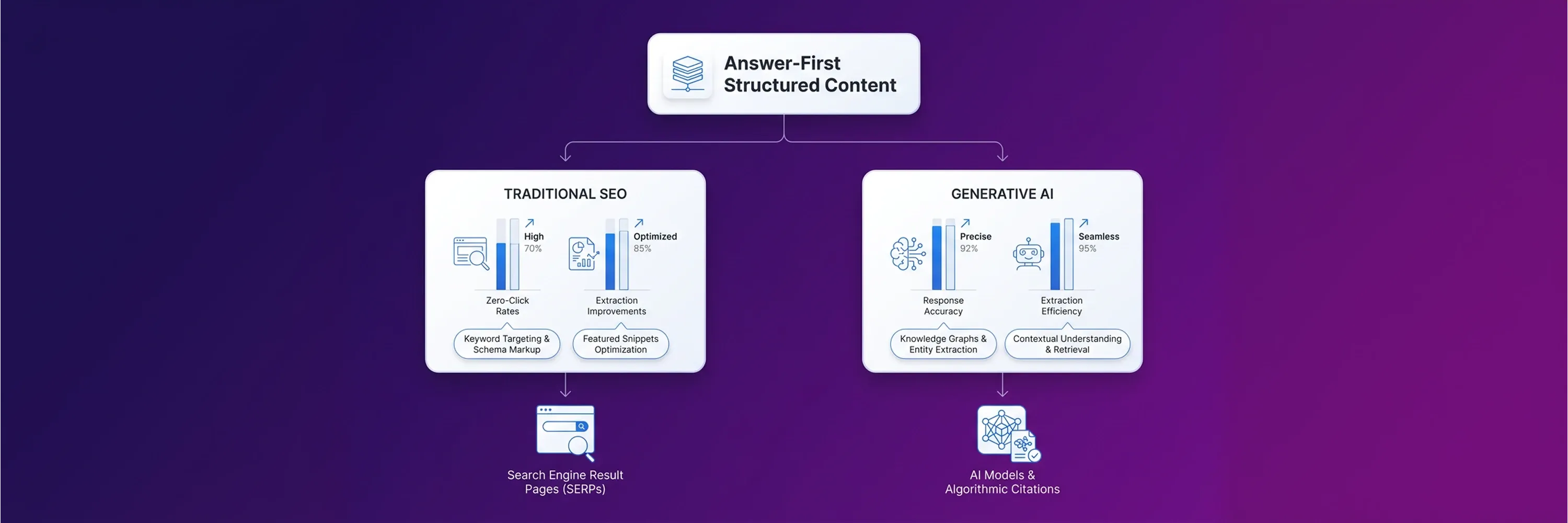

Companies now measure visibility through Share of Model, the percentage of AI responses that cite a brand or domain, instead of traditional position metrics. Generative search engines construct answers from multiple sources, so a citation inside an AI-generated response represents the new standard of visibility. Traditional keyword rank tracking fails here because it measures static positions on a Search Engine Results Page (SERP). Modern language models do not produce static pages. They synthesize answers dynamically based on conversational context.

As the landscape changed, the focus of the updated SEO ranking checker shifted toward retrieval frequency. Organizations need to know how often a language model recommends their brand, so they track citations across different platforms to establish their market presence. In 2026, visibility depends on retrieval rather than traditional ranking. This shift forces brands to look beyond the top ten blue links. A brand that wants to optimize its landing pages for buyers must answer specific user questions. A direct mention within an AI overview provides more value than the first organic position below the generative text.

Tracker Accuracy Claims

Direct mentions within AI overviews provide significant value, but industry experts debate whether precise measurement remains feasible in modern search environments. Hourly SERP volatility makes perfect accuracy impossible for any tracking software. Language models operate as probabilistic systems, so they generate different responses to the same prompt. If a user prompts ChatGPT one hundred times, the model has a less than one percent chance of generating an identical list twice.
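Because identical prompts yield different answers, a mention rate is better read as an estimate with an uncertainty range than as a single exact figure. The following is a minimal sketch of that idea, assuming the responses have already been collected as plain text. The brand names, the sample responses, and the helper names `mention_rate` and `wilson_interval` are illustrative, not part of any particular tracking product.

```python
import math

def mention_rate(responses, brand):
    """Count sampled responses that mention the brand (case-insensitive)."""
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits, len(responses)

def wilson_interval(hits, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is about 95%)."""
    if n == 0:
        return (0.0, 0.0)
    p = hits / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return (max(0.0, centre - half), min(1.0, centre + half))

# Hypothetical sample: the same prompt issued four times.
responses = [
    "Top picks: Acme, Globex, Initech",
    "Consider Globex or Initech",
    "Acme and Umbrella are popular",
    "Initech, Acme, Hooli",
]
hits, n = mention_rate(responses, "Acme")
low, high = wilson_interval(hits, n)
print(f"{hits}/{n} responses mention Acme; interval {low:.2f} to {high:.2f}")
```

With only a handful of samples the interval is wide, which is one way to see why a single run of a prompt says little about a brand's real presence.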

Legacy platforms fail because they assume deterministic rankings while modern systems monitor probabilistic outcomes. Traditional keyword rank tracking tools expect a single right answer for every query, but modern instruments require a different approach.

Companies observe the following metric patterns to measure their digital marketing return:

- Tracking tools log the frequency of brand mentions across thousands of conversational sessions.
- Analytics platforms calculate the percentage of total AI responses that cite a specific domain.
- Monitoring systems record sentiment shifts in how language models describe products.
- SERP monitoring dashboards capture the presence of generative overviews for targeted industry queries.

These reliable patterns provide a clear picture of market presence. Companies build accurate reporting structures when they stop chasing hourly position fluctuations and start analyzing long-term citation trends.
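The second pattern in the list above, the percentage of AI responses that cite a domain, can be sketched as follows. This is an illustration under stated assumptions: each response has already been reduced to a list of cited URLs, and the function names `domain_of` and `share_of_model` and the sample URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

def domain_of(url):
    """Lower-cased host of a URL, with a leading 'www.' stripped."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host

def share_of_model(sessions):
    """sessions: one list of cited URLs per AI response.
    Returns {domain: fraction of responses citing it at least once}."""
    counts = Counter()
    for cited_urls in sessions:
        domains = {domain_of(u) for u in cited_urls}
        domains.discard("")  # ignore strings that did not parse as URLs
        counts.update(domains)
    total = len(sessions)
    return {d: c / total for d, c in counts.items()} if total else {}

# Hypothetical log of four responses to the same query set.
sessions = [
    ["https://www.example.com/pricing", "https://example.com/blog"],
    ["https://other.example.org/review"],
    [],  # a response with no citations still counts toward the total
    ["https://example.com/docs"],
]
shares = share_of_model(sessions)
print(shares)
```

Counting each domain once per response, rather than once per link, keeps a single answer with many links to the same site from inflating the figure.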

Platform-Specific Retrieval Logic

Businesses analyze long-term citation trends to build accurate reporting structures, but a single tracking tool cannot measure these trends effectively because different AI platforms prioritize different data sources. Each language model applies a unique set of rules to determine which information deserves a citation. Organizations waste resources when they apply one measurement standard across all generative engines. A strong strategy recognizes these differences and adapts the tracking architecture accordingly.

Engineers build these models with specific objectives that influence their retrieval logic. One model might prioritize conversational forums, and another model might favor official databases. This variance explains why a domain might dominate ChatGPT citations but remain invisible in Google's generative answers. Precise measurement requires companies to understand the specific signals that trigger citations within each distinct platform. If companies understand these underlying preferences, they can implement the right SEO best practices. Tracking tools must align with these distinct data diets to capture meaningful visibility metrics.

Why ChatGPT Bypasses Traditional Metrics

OpenAI's language model serves as a prime example of a distinct data diet because it prioritizes User-Generated Content over optimized marketing pages. ChatGPT values organic discussions because human conversations provide authentic context and varied perspectives. Brands struggle to measure their presence here if they rely on traditional metrics. The model ignores structured sales pages and pulls information from community forums instead.

This preference forces tracking software to monitor discussion threads rather than corporate domains. Social platform citation analysis reveals that ninety-nine percent of Reddit citations in ChatGPT point to unique discussion threads rather than brand profiles. Brands therefore gain visibility in ChatGPT largely when people discuss their products online. Tracking tools must scrape and analyze these external forum mentions to calculate a brand's Share of Model.
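A tracker that wants to separate discussion threads from profile pages needs some way to classify cited URLs. The sketch below assumes Reddit's commonly seen path shapes (`/r/<subreddit>/comments/<id>/...` for threads, `/user/<name>` or `/u/<name>` for profiles). The function name and the sample URLs are hypothetical, and real logs may contain other URL forms that would fall into the `other` bucket.

```python
from urllib.parse import urlparse

def classify_reddit_url(url):
    """Return 'thread', 'profile', or 'other' for Reddit URLs, None otherwise.
    Path shapes are an assumption based on commonly seen Reddit links."""
    parsed = urlparse(url)
    host = parsed.netloc.lower()
    if host != "reddit.com" and not host.endswith(".reddit.com"):
        return None
    parts = [p for p in parsed.path.split("/") if p]
    if len(parts) >= 4 and parts[0] == "r" and parts[2] == "comments":
        return "thread"
    if len(parts) >= 2 and parts[0] in ("user", "u"):
        return "profile"
    return "other"

# Hypothetical citations extracted from logged responses.
cited = [
    "https://www.reddit.com/r/SEO/comments/abc123/best_rank_tracker/",
    "https://www.reddit.com/user/acme_official",
    "https://www.reddit.com/r/SEO/",
    "https://example.com/r/x/comments/1/y",
]
kinds = [classify_reddit_url(u) for u in cited]
reddit_kinds = [k for k in kinds if k is not None]
thread_share = reddit_kinds.count("thread") / len(reddit_kinds)
print(kinds, round(thread_share, 2))
```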

How Perplexity Values Recency

While ChatGPT relies on external forum mentions, Perplexity functions as an answer engine that favors fresh content and immediate answers. The platform prioritizes recent publications over established historical pages to provide users with the latest information. This logic requires distinct SERP monitoring capabilities compared to traditional search engines. Tracking tools must capture how quickly Perplexity indexes and cites new articles.

A strong tracking strategy accounts for this rapid content turnover. According to an analysis of freshness signals, Perplexity cites content older than 180 days at a rate of 37 percent, whereas ChatGPT shows a higher tolerance for older information. Because the engine moves on from outdated sources so quickly, brands cannot rely on legacy tracking metrics. Software needs to measure citation duration to show companies how long their fresh content stays visible before newer articles replace it.
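Both freshness measures described here, the share of citations older than a cutoff and the citation duration of a single page, reduce to simple date arithmetic. The sketch below assumes the tracker already stores a publication date per cited URL and the dates on which each URL was observed in an answer. The function names, the 180-day default, and the sample dates are illustrative.

```python
from datetime import date

def stale_share(citations, as_of, max_age_days=180):
    """citations: list of (url, published_date) pairs.
    Returns the fraction of citations older than max_age_days on as_of."""
    if not citations:
        return 0.0
    stale = sum(
        1 for _, published in citations
        if (as_of - published).days > max_age_days
    )
    return stale / len(citations)

def citation_duration(sightings):
    """sightings: dates on which one URL was seen cited.
    Returns the number of days between the first and last sighting."""
    if not sightings:
        return 0
    return (max(sightings) - min(sightings)).days

# Hypothetical data for one engine, evaluated on 1 January 2026.
citations = [
    ("https://example.com/new-guide", date(2025, 12, 1)),
    ("https://example.com/spring-post", date(2025, 3, 1)),
    ("https://example.com/autumn-post", date(2025, 10, 1)),
    ("https://example.com/old-report", date(2024, 12, 1)),
]
share = stale_share(citations, as_of=date(2026, 1, 1))
days_visible = citation_duration(
    [date(2026, 1, 3), date(2026, 1, 10), date(2026, 1, 24)]
)
print(share, days_visible)
```

Running the same calculation per engine makes the difference in freshness tolerance directly comparable.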

How Gemini Prefers Official Data

Unlike Perplexity, with its focus on fresh content, Google Gemini prefers official structured data and established domain authority when it generates its overviews. The system leans on verified entities, massive media properties, and Google's own ecosystem to construct reliable answers. It does not value independent community discussions the way ChatGPT does. This preference explains why tracking across traditional media and video platforms matters for Gemini visibility.

Companies need exact measurement tools that track citations across these large media properties. Recent citation pattern research shows that YouTube serves as the most-cited domain in Google AI Overviews at eighteen percent. Gemini pulls timestamps and transcripts directly from video content to answer user queries. Effective tracking architecture must monitor these multimedia citations to capture a complete picture of a brand's performance within Google's generative ecosystem.

Select SEO Ranking Checker for Software Stack

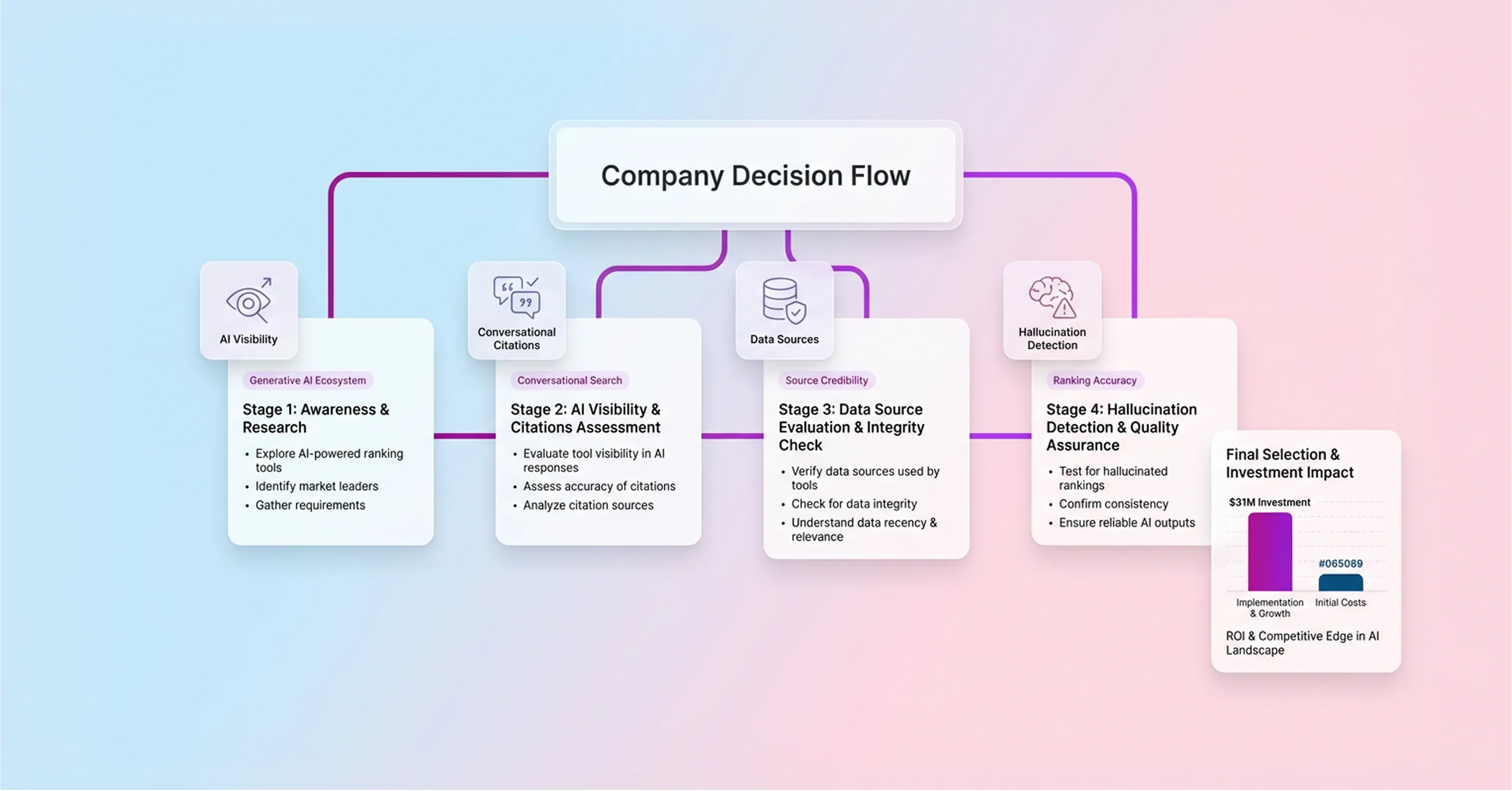

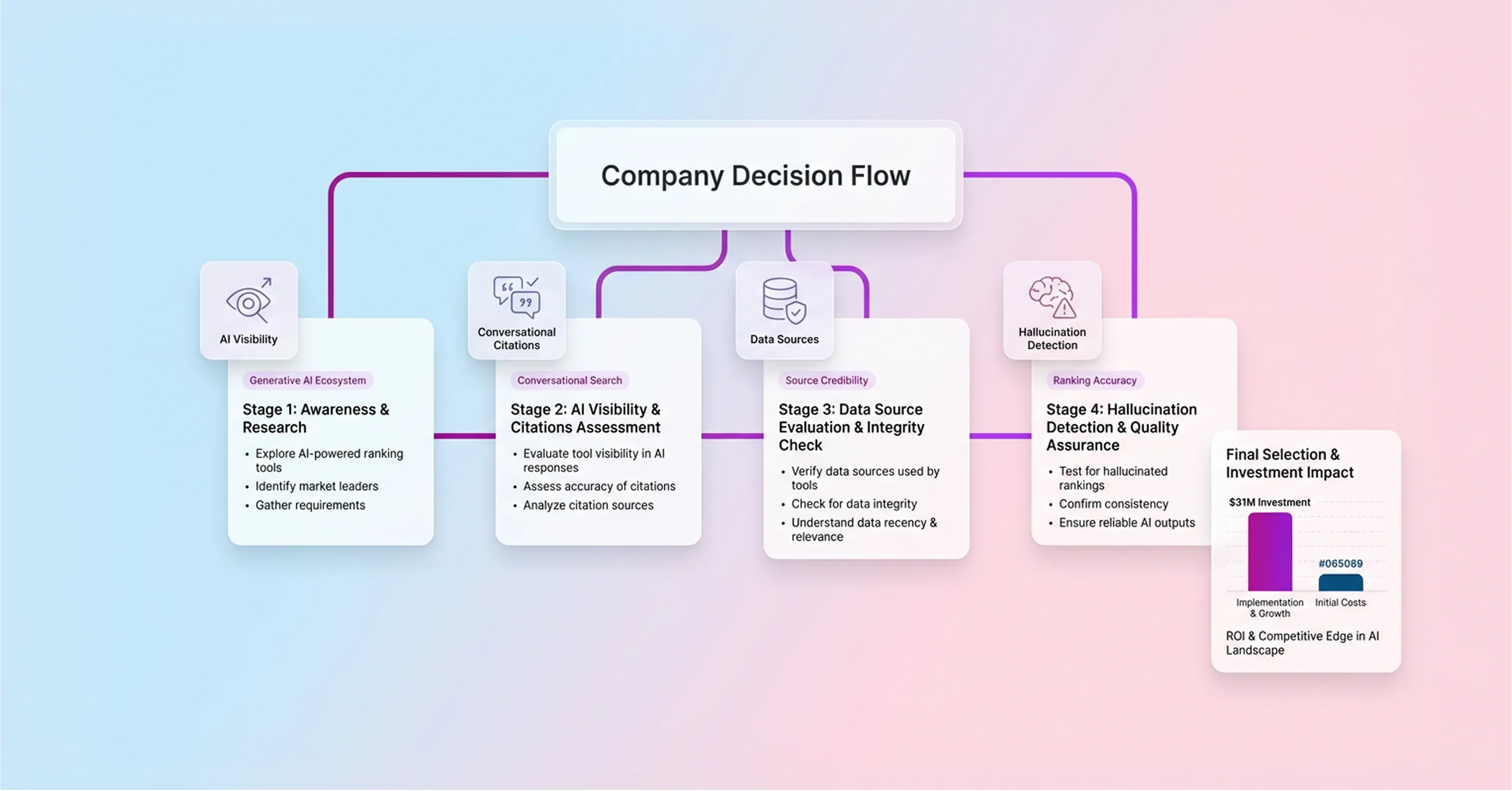

Companies need a complete picture of their brand's performance across these generative ecosystems, so they evaluate software options by matching tool capabilities with specific LLM search paradigms. Businesses use enterprise software suites for traditional SERP monitoring, but they often struggle to capture dynamic conversational citations with these legacy tools. In contrast, purpose-built AI visibility startups focus exclusively on monitoring language models and their unique retrieval patterns. Investors recognize this technological shift. Over the last two years, they poured more than $31 million into AI visibility tracking software, and this capital funded new infrastructure that parses probabilistic answers rather than static links.

Companies make software choices based on how their buyers search for information, so buyer behavior dictates tool selection. If consumers rely heavily on ChatGPT for product research, businesses need tools that scrape user-generated content and conversational threads. Legacy systems process structured data well. However, legacy Search Engine Optimization (SEO) trackers do not monitor generative text outputs, so they fail to detect hallucinations when ChatGPT invents pricing or features. Without this capability, brands risk losing the trust of customers who read inaccurate AI-generated information.

Organizations select a tracking stack by analyzing the specific data sources each platform uses to generate reports. Developers prioritize content recency in some software solutions. They program other tools to weigh historical domain authority more heavily. Business leaders evaluate these technical differences to ensure their chosen tool captures the right metrics for their specific industry. A business comparing different platforms must prioritize software that aligns with the specific generative engines its consumers prefer. If the tool matches the retrieval logic of the preferred AI engine, then the resulting data provides actionable insights for future campaigns.
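One way to make this matching concrete is to score each candidate tool by how much of the audience's AI usage it actually covers. The sketch below is only an illustration: the audience shares, the tool names, and the engines each tool monitors are invented placeholders, and a real evaluation would weigh more factors than engine coverage.

```python
def coverage_score(audience_share, tool_engines):
    """Sum the audience share of every engine the tool monitors."""
    return sum(
        share for engine, share in audience_share.items()
        if engine in tool_engines
    )

# Hypothetical split of where a company's buyers do product research.
audience_share = {"chatgpt": 0.5, "perplexity": 0.2, "gemini": 0.3}

# Hypothetical tools and the engines each one claims to monitor.
tools = {
    "tool_a": {"chatgpt", "perplexity"},
    "tool_b": {"gemini"},
    "tool_c": {"chatgpt", "gemini"},
}

ranked = sorted(
    tools,
    key=lambda name: coverage_score(audience_share, tools[name]),
    reverse=True,
)
for name in ranked:
    print(name, round(coverage_score(audience_share, tools[name]), 2))
```

In this made-up example the tool that covers the two engines most used by buyers ranks first, even though no tool covers all three.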

Create Unified Workflow

Once organizations have selected their tools, they prevent metric fragmentation across different analytics dashboards by combining legacy metrics with new citation data. A standard approach to measurement integrates traditional keyword rank tracking with modern AI visibility insights. Organizations achieve this integration by establishing clear reporting structures that capture both static link positions and dynamic generative mentions. The industry recognizes this dual necessity: 55% of surveyed companies have allocated funds to Generative Engine Optimization (GEO) within their 2026 budgets. This financial commitment enables businesses to use an SEO checking tool alongside their existing analytics platforms, so that historical data is not lost.

Businesses follow a systematic process to merge these different data streams effectively:

- Teams review existing performance dashboards to identify gaps in generative engine tracking.
- Organizations allocate specific financial resources for new visibility software, as Forrester recommends dedicating 15% of the content budget to AI search visibility.
- Companies integrate citation frequency metrics with legacy organic traffic data to map overall market presence.
- Brands adjust content strategies based on which language models retrieve the company most frequently.

Professionals improve campaign performance by translating this unified data into optimization strategies. When teams analyze combined metrics, they identify which content formats trigger citations in specific language models. This complete view helps organizations prepare for future performance marketing in AI ecosystems without abandoning their foundational search traffic. A unified workflow helps departments accurately measure their true digital footprint across all modern search interfaces.
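The gap review and the integration step in the process above can be sketched as a simple join of two per-query datasets. The example assumes legacy rank data and citation rates are both available as dictionaries keyed by query. The field names, the helper names `unify` and `tracking_gaps`, and the sample values are hypothetical.

```python
def unify(rank_by_query, citation_rate_by_query):
    """Outer-join two per-query metric dicts; a missing value becomes None."""
    rows = []
    for query in sorted(set(rank_by_query) | set(citation_rate_by_query)):
        rows.append({
            "query": query,
            "organic_rank": rank_by_query.get(query),
            "citation_rate": citation_rate_by_query.get(query),
        })
    return rows

def tracking_gaps(rows):
    """Queries that have a legacy rank but no generative citation data yet."""
    return [
        r["query"] for r in rows
        if r["organic_rank"] is not None and r["citation_rate"] is None
    ]

# Hypothetical exports from a rank tracker and an AI visibility tool.
rank_by_query = {"best crm": 3, "crm pricing": 7, "crm for startups": 12}
citation_rate_by_query = {"best crm": 0.42, "what is a crm": 0.10}

rows = unify(rank_by_query, citation_rate_by_query)
gaps = tracking_gaps(rows)
print(rows)
print(gaps)
```

Keeping both metrics in one row per query preserves the historical rank data while showing where generative tracking has not started.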

Conclusion

To summarize, organizations measure their true digital footprint and build an effective visibility strategy when they select platforms that align with their target audiences' specific AI search behaviors instead of searching for a single perfect tool. Companies achieve better results when they implement tracking solutions that capture both traditional metrics and generative citations. Accurate Share of Model measurement helps organizations translate complex retrieval data into clear optimization strategies.

Auditing current brand citations across major language models establishes a solid baseline for future market developments. A reliable SEO ranking checker captures these early insights. Exploring the guide on performance KPIs for AI search helps translate these visibility metrics directly into revenue and adjust reporting frameworks accordingly.