Introduction

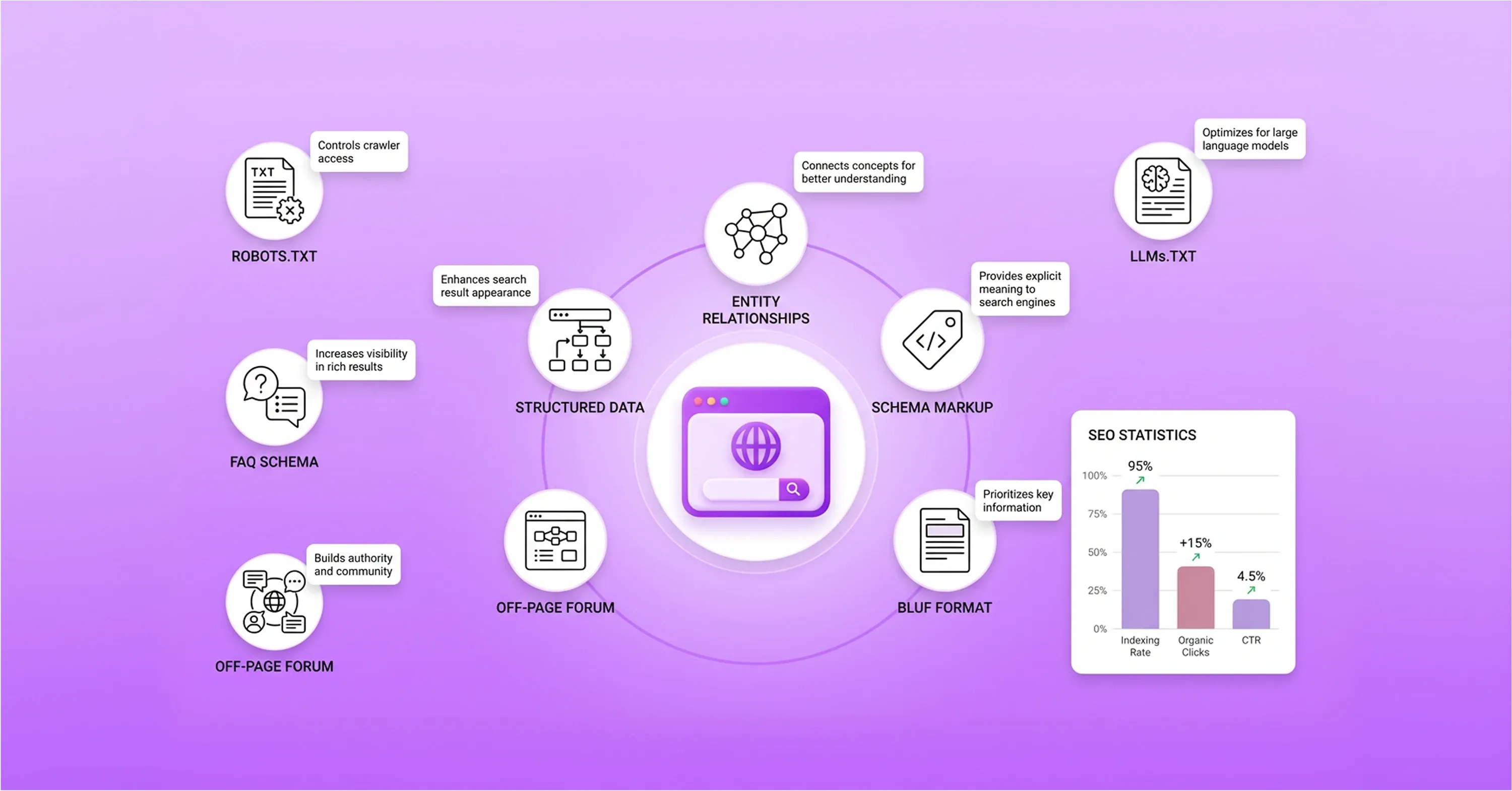

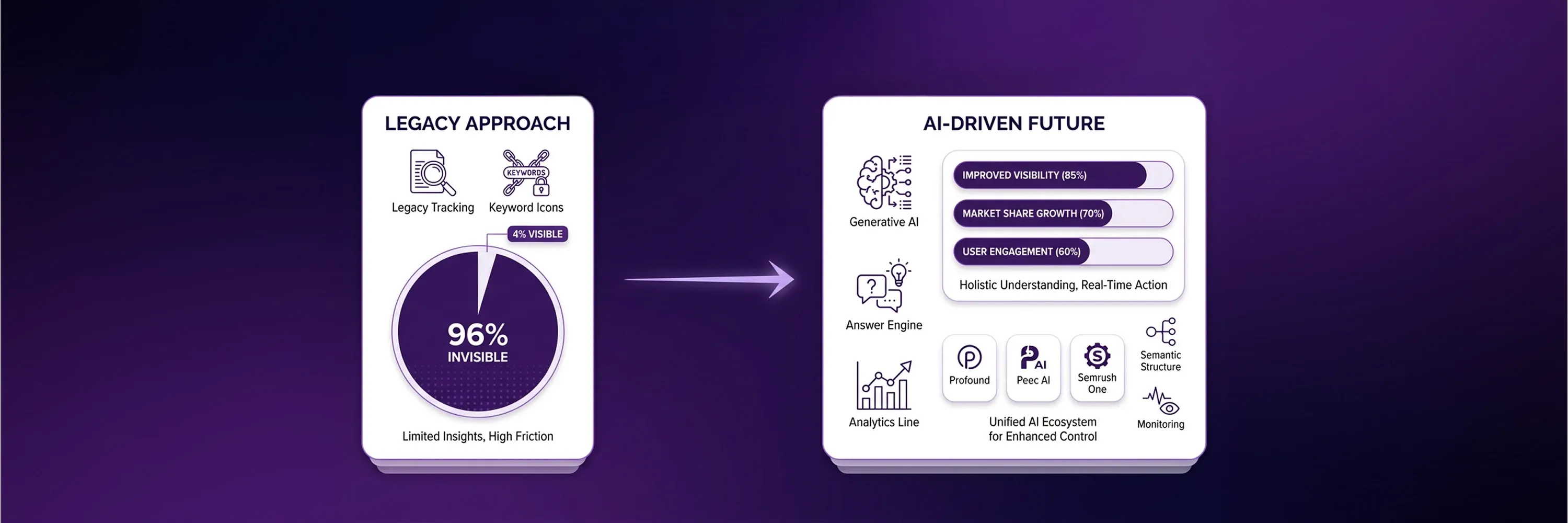

The digital search landscape is shifting toward zero-click environments. Tools like ChatGPT and Google’s AI Overviews generate answers directly in the search interface, so users often get what they need without visiting a website. Traditional search engine optimization relied on keyword density and backlink volume to rank web pages. Today, large language models prioritize machine-readable structure, entity signals, and robust data relationships. Consequently, a perfect technical score from a standard web page SEO checker means little if the underlying site architecture lacks the signals that generative engines require.

This change makes conventional ranking metrics insufficient on their own. Recent behavioral data indicates that 58.5% of US searches and 59.7% of EU searches end without a click to any website, keeping users on the search results page. This new reality requires specialized technical evaluations that look beyond human-readable content. The following sections outline the methodology for evaluating website architecture, configuring crawler accessibility, and adapting traditional audit workflows to meet the demands of Generative Engine Optimization (GEO).

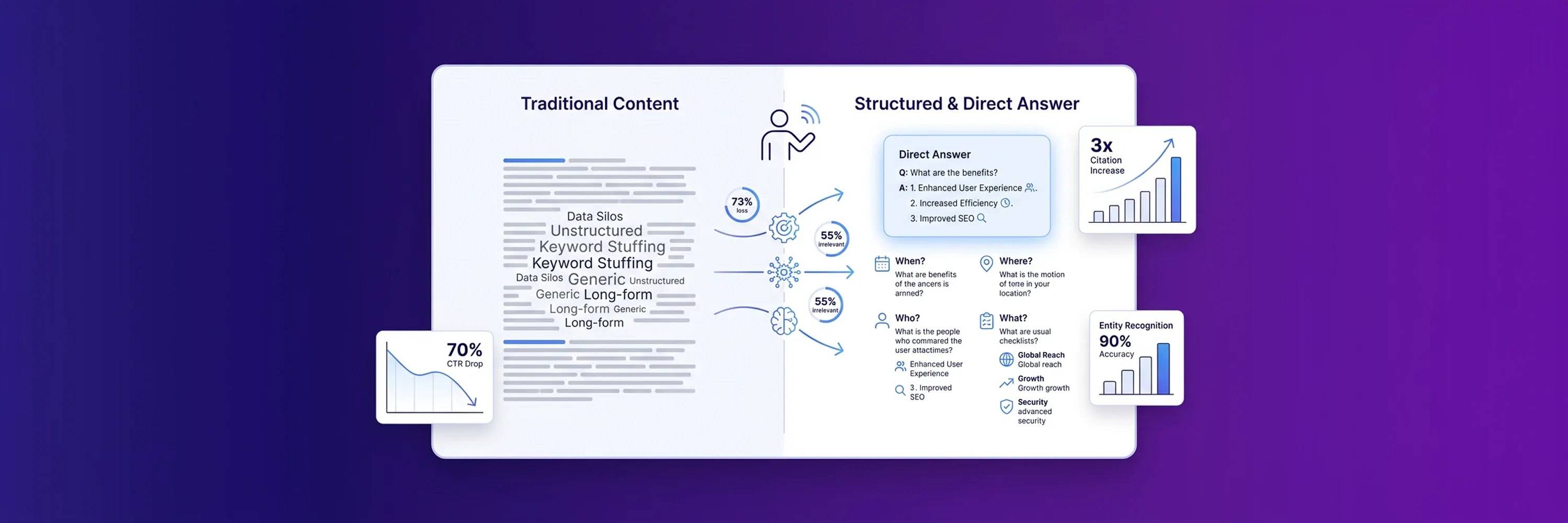

Divergence Between Traditional Scores and AI Citation Readiness

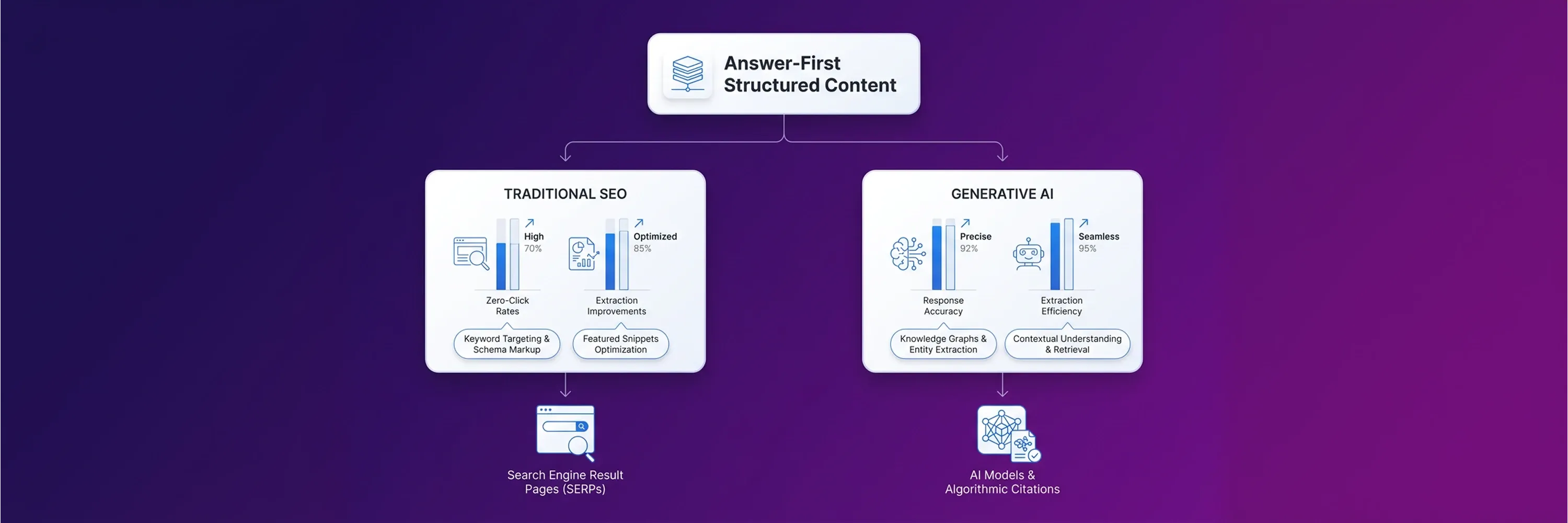

Traditional audit workflows rely on SEO tools that measure keyword density, backlink profiles, and page load speed to calculate a technical score. However, even a 100/100 score on these platforms does not guarantee visibility in modern artificial intelligence (AI) engines. AI systems do not evaluate pages the way legacy search algorithms do. Generative models extract entities, relationships, and facts to synthesize answers rather than simply matching keywords to user queries.

Data presented at Tech SEO Connect 2025 showed that traditional SEO metrics predict only 4–7% of AI citations. This gap shows why legacy auditing methods lack the precision the current search landscape requires. Large language models (LLMs) struggle to understand the context of web content without structured data and explicit entity connections, and a recent GEOReport audit analysis shows that more than 80% of indexed pages fail to appear in generative reasoning processes.

Website publishers can no longer rely on keyword matching alone. Earning AI citations requires building content architecture around fact density rather than search volume. When audits focus on machine-readable structures, brands gain the ability to compete in zero-click environments. Engineers are rebuilding their evaluation criteria to prioritize fact extraction over simple term frequency.

Technical Auditing Guidelines for AI Bots

With those evaluation criteria in place, system administrators lay the foundation for technical readiness by configuring site accessibility for AI crawlers. AI platforms deploy specialized bots to collect content for model training and real-time retrieval. If these bots cannot access the site, even the best entity relationships remain invisible to generative engines.

A modern technical SEO audit prioritizes crawler configurations that explicitly permit these new user agents. Engineers review server logs to confirm that AI crawlers actually reach the site, because a single misconfigured directive can block major AI platforms from reading it. Webmasters also verify that the website infrastructure supports the distinct fetching patterns of machine-learning crawlers.
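The log review can be sketched as a short script that counts AI crawler requests by HTTP status, assuming the server writes the common Apache/Nginx "combined" log format. The token list `AI_AGENT_TOKENS` and the function name `summarize_ai_hits` are hypothetical choices for this illustration; vendors rename and add bots, so the tokens should be checked against each vendor's documentation. Google-Extended is deliberately absent: Google documents it as a robots.txt control token rather than a separate crawler user agent, so it does not show up in access logs.

```python
import re
from collections import Counter

# Hypothetical audit list: user-agent substrings for a few AI crawlers.
# Vendors rename and add bots, so confirm tokens against their documentation.
AI_AGENT_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Combined log format: ... "request line" status bytes "referer" "user agent"
COMBINED_LOG = re.compile(r'"[^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"')


def summarize_ai_hits(log_lines):
    """Count requests per (AI crawler token, HTTP status) pair."""
    counts = Counter()
    for line in log_lines:
        match = COMBINED_LOG.search(line)
        if match is None:
            continue  # skip lines that are not in combined format
        agent = match.group("agent")
        for token in AI_AGENT_TOKENS:
            if token in agent:
                counts[(token, int(match.group("status")))] += 1
                break  # attribute each request to one crawler only
    return counts
```

Usage would look like `summarize_ai_hits(open("access.log"))`, where the file path is a placeholder. A cluster of 403 responses next to an AI crawler's token usually points to a firewall or CDN bot rule; a crawler that never appears at all may instead be stopped by robots.txt.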

Traditional crawlers prioritize human-readable Hypertext Markup Language (HTML), but AI bots look for machine-readable text formats and structured data payloads. Website administrators who integrate these accessibility checks into routine website evaluations strengthen their overall optimization strategy. They also prevent unexpected drops in organic visibility by identifying and resolving blockages early.

Robots.txt for LLM Accessibility

Website auditors identify these blockages by evaluating specific user agents in the robots.txt file to ensure AI crawlers can access the site properly. Many websites inadvertently block bots like GPTBot or Google-Extended because developers apply blanket restrictions to unfamiliar agents. A thorough on-page analysis requires checking these specific directives to confirm that generative engines have permission to access the content. Webmasters distinguish between scraping bots that steal content and search bots that generate citations. Allowing access to legitimate AI crawlers helps ensure that the underlying LLMs can ingest the website’s facts and entities. These robots.txt corrections serve as the first step in adapting site architecture for generative search visibility.
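A directive check like this can be automated with Python's standard-library `urllib.robotparser`. The sketch below assumes a hypothetical helper, `audit_robots`, and an illustrative list of agent tokens. Note that `urllib.robotparser` applies the first matching rule with simple prefix matching, so edge cases can resolve differently from crawlers that use longest-match rules (as Google documents for Googlebot).

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt tokens for AI crawlers; extend as vendors add bots.
AI_AGENTS = ("GPTBot", "Google-Extended", "PerplexityBot", "ClaudeBot")


def audit_robots(robots_txt, url, agents=AI_AGENTS):
    """Return {agent: True/False} showing whether each agent may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    parser.modified()  # record a fetch time so can_fetch() evaluates the rules
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# A common blanket pattern: only Googlebot is allowed, everything else is blocked.
BLANKET_BLOCK = """\
User-agent: Googlebot
Allow: /

User-agent: *
Disallow: /
"""

# One possible fix: an explicit group for the AI agents the site wants to admit.
WITH_AI_GROUP = "User-agent: GPTBot\nUser-agent: Google-Extended\nAllow: /\n\n" + BLANKET_BLOCK
```

Against `BLANKET_BLOCK`, every listed AI agent comes back `False`, even though the file never names them; adding the explicit group flips GPTBot and Google-Extended to `True` while the other agents stay blocked.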

llms.txt Standard

Beyond robots.txt corrections, the emerging llms.txt standard gives LLMs a machine-readable summary of a site. Like robots.txt, the file sits at the site root, but instead of crawl directives it presents content and site structure formatted for generative engines. The llms.txt file strips away heavy design elements and presents core information in clean Markdown, which AI bots can process more easily than full HTML pages. This streamlined file helps AI systems interpret the website’s entity relationships and factual claims without parsing complex code. Early adopters who deploy llms.txt create a direct pipeline to generative models, which may give their content an advantage in citation selection.
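The llms.txt proposal (published at llmstxt.org) describes a Markdown file with an H1 site name, a blockquote summary, and H2 sections of annotated links. The following is a minimal generator sketch following that layout; the function name `build_llms_txt`, the site name, and every URL are hypothetical placeholders, and the proposal itself should be consulted for the full format.

```python
def build_llms_txt(site_name, summary, sections):
    """Render an llms.txt body: H1 name, blockquote summary, H2 link sections.

    `sections` maps a section heading to a list of (title, url, note) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        for title, url, note in links:
            # Each entry is a Markdown link, optionally followed by ": note".
            lines.append(f"- [{title}]({url})" + (f": {note}" if note else ""))
        lines.append("")
    return "\n".join(lines)


# Hypothetical site and URLs, for illustration only.
llms_txt = build_llms_txt(
    "Example Co",
    "Example Co makes project-tracking software for small engineering teams.",
    {
        "Docs": [("Pricing", "https://example.com/pricing.md", "Plans, limits, and billing terms")],
        "Company": [("Company history", "https://example.com/about.md", "")],
    },
)
print(llms_txt)
```

The generated text would be served as `/llms.txt`; linking to Markdown versions of key pages keeps the whole path free of HTML parsing.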

JavaScript Rendering Issues

Even with an llms.txt file in place, AI bots still need critical content to be present in the HTML they receive. Many modern websites rely heavily on JavaScript to display critical information, but generative engine crawlers often struggle to execute these scripts efficiently. If an AI bot abandons the rendering process before the script loads, the content remains invisible to the LLM. Website auditors include rendering tests in every technical SEO audit to simulate how generative bots view the page. Developers who implement server-side rendering or dynamic rendering ensure that the content is present in the initial HTML response and accessible to all fetching agents. Resolving these JavaScript dependencies lets AI models extract the necessary semantic signals without spending crawl budget on complex code execution.
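A basic rendering test can compare the raw HTML response against the facts a page must expose. The sketch below assumes the HTML was fetched without a browser (for example with curl or `urllib`) and approximates a crawler that skips JavaScript entirely; it does not reproduce any specific AI crawler's documented behavior. The names `_VisibleText` and `missing_without_js` are hypothetical.

```python
from html.parser import HTMLParser


class _VisibleText(HTMLParser):
    """Collects text nodes from raw HTML, skipping <script> and <style> bodies."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_without_js(raw_html, required_phrases):
    """Return the phrases that are absent from the HTML before any JavaScript runs."""
    parser = _VisibleText()
    parser.feed(raw_html)
    parser.close()
    # Normalize whitespace so line breaks in the markup do not hide a phrase.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [phrase for phrase in required_phrases if phrase.lower() not in text]


# Hypothetical pages: the same fact, injected by script versus rendered on the server.
CLIENT_RENDERED = '<div id="app"></div><script>app.innerHTML = "Plans start at $49";</script>'
SERVER_RENDERED = "<main><h1>Pricing</h1><p>Plans start at $49 per month.</p></main>"
```

For `CLIENT_RENDERED` the check reports the pricing phrase as missing, because it exists only inside the script; for `SERVER_RENDERED` it returns an empty list.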

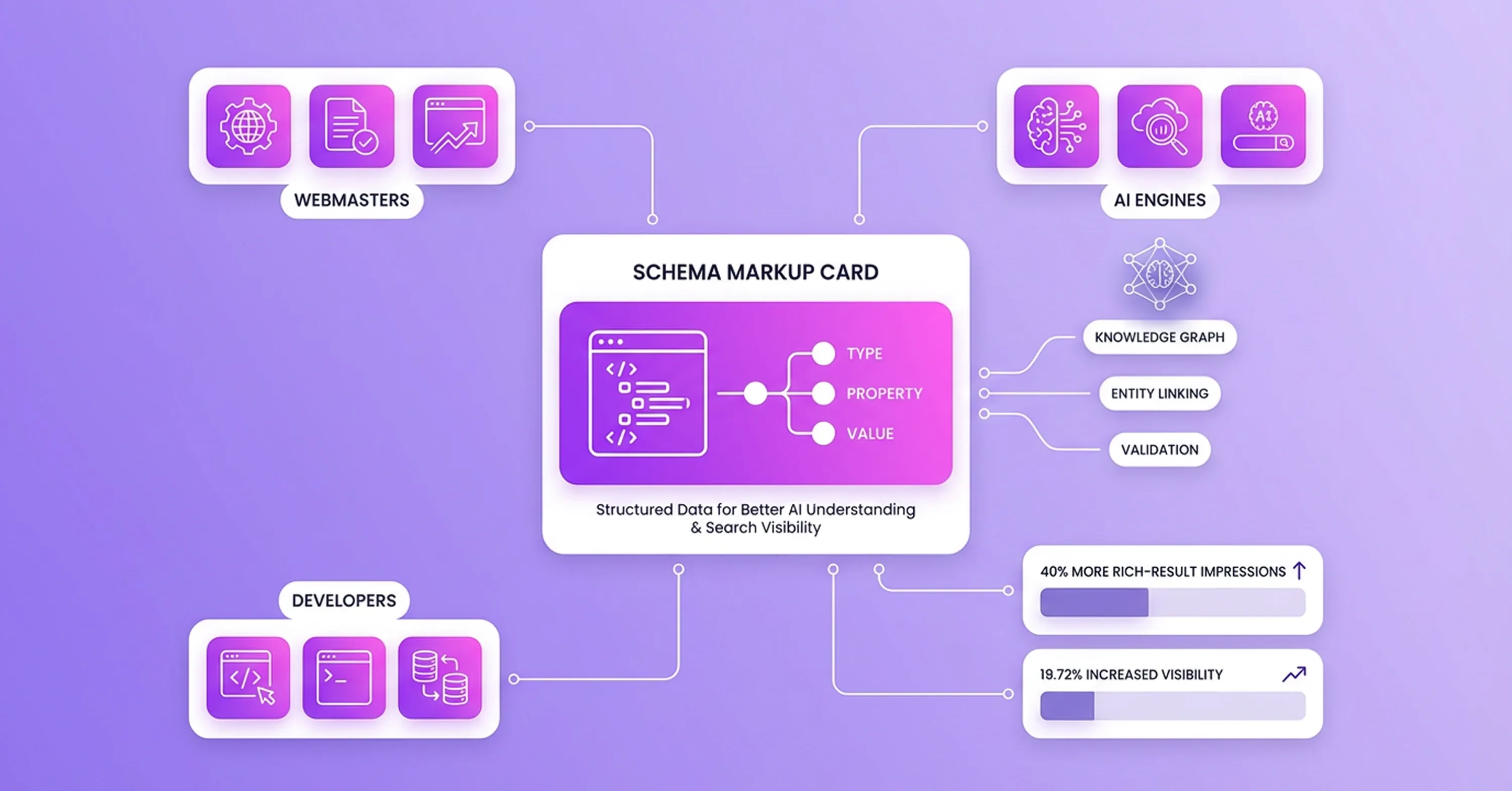

Auditing Semantic Triples with a Web Page SEO Checker

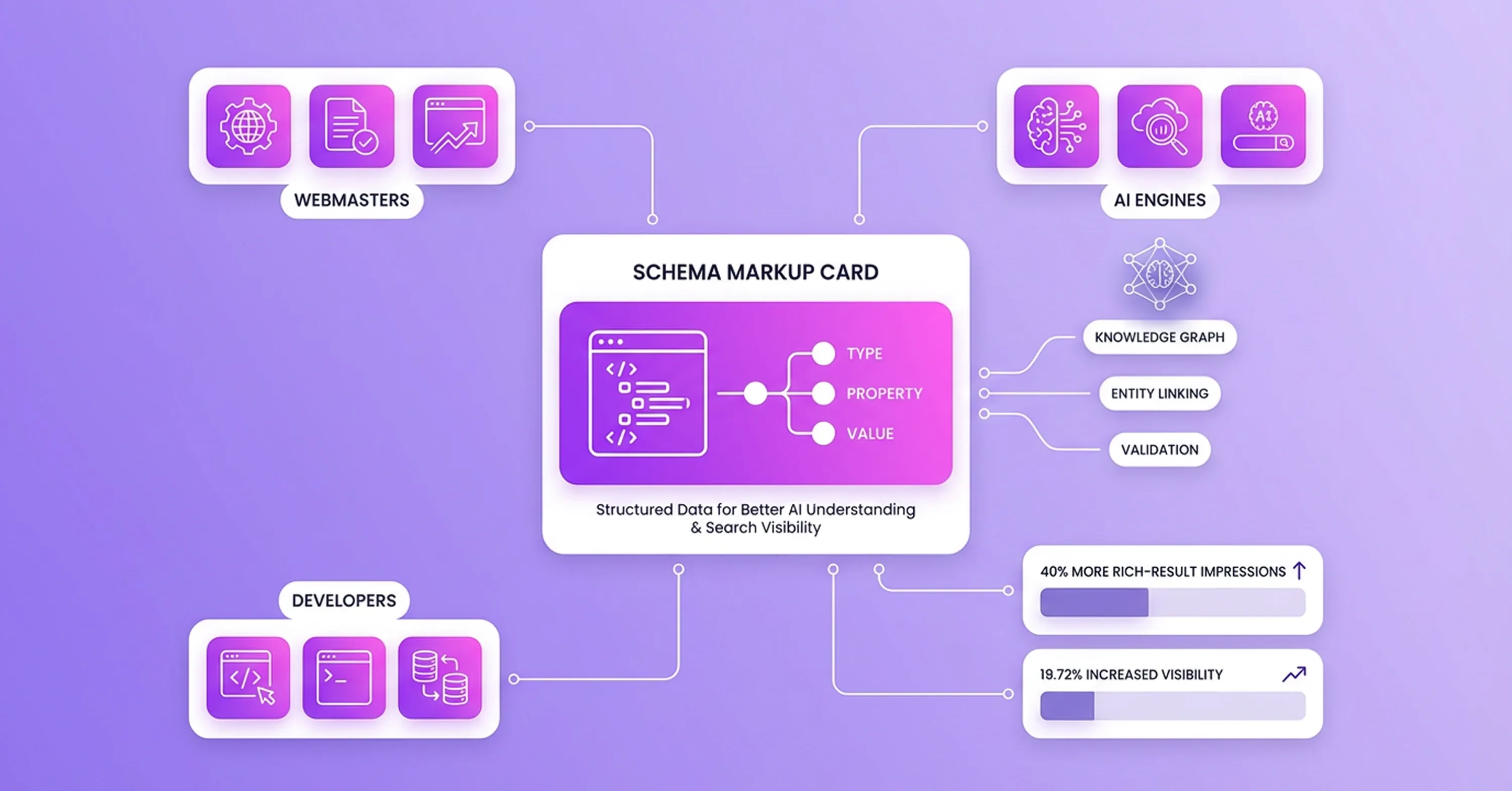

With JavaScript dependencies resolved, schema markup supplies the machine-readable structure that generative engines use to interpret semantic triples. A semantic triple consists of a subject, a predicate, and an object (for example, a company as the subject, "founder" as the predicate, and a named person as the object), and triples form the foundation of how AI models map relationships between entities. Webmasters evaluate these structured data signals when auditing a website’s Knowledge Graph anchors. A structured data validation tool can serve as a web page SEO checker by identifying missing schema properties and validating existing code against current search engine guidelines.
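To make the subject-predicate-object idea concrete, this sketch flattens a JSON-LD node into triples. It is deliberately simplified: it does not expand terms against `@context` the way a compliant JSON-LD processor would, and it labels nodes by `@id` or `name`. The function names and the Organization markup (including the `sameAs` URL) are hypothetical.

```python
import json


def _node_id(node):
    # Prefer an explicit @id, then the name, then a generic blank-node label.
    return node.get("@id") or node.get("name") or "_:blank"


def jsonld_to_triples(node):
    """Flatten one JSON-LD node into (subject, predicate, object) tuples."""
    subject = _node_id(node)
    triples = []
    for key, value in node.items():
        if key == "@type":
            triples.append((subject, "type", value))
            continue
        if key.startswith("@"):
            continue  # @context and @id are metadata, not relationships
        items = value if isinstance(value, list) else [value]
        for item in items:
            if isinstance(item, dict):
                # A nested node becomes the object, and its own facts are added too.
                triples.append((subject, key, _node_id(item)))
                triples.extend(jsonld_to_triples(item))
            else:
                triples.append((subject, key, item))
    return triples


# Hypothetical Organization markup, as it might appear in a JSON-LD <script> tag.
ORG_MARKUP = json.loads("""
{
  "@context": "https://schema.org",
  "@type": "Organization",
  "@id": "https://example.com/#org",
  "name": "Example Co",
  "founder": {"@type": "Person", "name": "Jane Doe"},
  "sameAs": ["https://example.org/profiles/example-co"]
}
""")

for triple in jsonld_to_triples(ORG_MARKUP):
    print(triple)
```

The `founder` property yields the triple `("https://example.com/#org", "founder", "Jane Doe")`, which is exactly the kind of explicit entity connection the section describes.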

Comprehensive schema markup appears to influence how often LLMs cite a website, although the strongest evidence so far measures search visibility rather than AI citations directly. For example, a 2023 Milestone Research study found that pages with schema markup earn 40% more rich-result impressions, and a Schema App case study showed that implementing entity linking increased visibility by 19.72%.

During the evaluation process, developers verify the accuracy and validity of several specific markup types:

- Product: Details about price, availability, and reviews help AI engines answer transactional queries.
- Frequently Asked Questions (FAQ): Questions and answers provide high-density facts that generative models extract for direct responses.
- Organization: Corporate details establish the brand as a recognized entity within the overarching Knowledge Graph.
- LocalBusiness: Geographic and operational data anchor the brand to specific physical locations.

These validated markup categories ensure that the website communicates its entity relationships clearly to machine-learning algorithms.
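A missing-property check for the markup types listed above could be sketched as follows. The `EXPECTED_PROPERTIES` sets are illustrative picks that mirror the descriptions in the list; they are not the official required-property lists from schema.org or any search engine, and a real audit should still run a dedicated validator. The sketch also matches `@type` exactly, so it ignores schema.org inheritance (for instance, LocalBusiness being a subtype of Organization).

```python
import json

# Illustrative expectations that mirror the list above. These are NOT the official
# required-property lists from schema.org or any search engine's guidelines.
EXPECTED_PROPERTIES = {
    "Product": {"name", "offers", "aggregateRating"},
    "FAQPage": {"mainEntity"},
    "Organization": {"name", "url", "logo", "sameAs"},
    "LocalBusiness": {"name", "address", "geo", "openingHoursSpecification"},
}


def missing_properties(jsonld_text):
    """Map each audited @type found in a JSON-LD block to its missing properties."""
    data = json.loads(jsonld_text)
    if isinstance(data, list):
        nodes = data
    else:
        nodes = data.get("@graph", [data])
    report = {}
    for node in nodes:
        types = node.get("@type", [])
        type_list = types if isinstance(types, list) else [types]
        for node_type in type_list:
            expected = EXPECTED_PROPERTIES.get(node_type)
            if expected is not None:
                report[node_type] = sorted(expected - set(node))
    return report


# Hypothetical Product snippet that lists an offer but no review data.
PRODUCT_SNIPPET = """{"@context": "https://schema.org", "@type": "Product",
  "name": "Trail Shoe", "offers": {"@type": "Offer", "price": "89.00"}}"""
print(missing_properties(PRODUCT_SNIPPET))
```

The Product snippet is reported as missing only `aggregateRating`, which flags the review data that helps AI engines answer transactional queries.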

Content Architecture for Fact Density Adjustments

To secure AI citations, organizations also shift their content architecture from keyword density to fact density. Legacy algorithms counted keywords, but generative models extract dense clusters of factual information. An effective on-page analysis reveals whether the text contains enough explicit entities for an AI bot to trust it. Kevin Indig’s citation study found that heavily cited text has an entity density of 20.6%, compared with 5–8% for typical text. Publishers build this density by structuring content in the Bottom Line Up Front (BLUF) format. This three-layer format places the main answer at the beginning of the article, follows it with supporting evidence, and concludes with granular details.
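The method behind the cited entity-density figures is not described here, so the following sketch assumes a simple definition: the share of word tokens that fall inside a known entity name, using a hand-supplied entity list in place of a named-entity recognizer. The function names and the entity list are hypothetical, and results from this sketch are not directly comparable to the study's numbers.

```python
import re


def _words(text):
    return re.findall(r"\w+", text.lower())


def entity_density(text, entity_names):
    """Fraction of word tokens in `text` that fall inside a listed entity name."""
    words = _words(text)
    covered = [False] * len(words)
    for name in entity_names:
        phrase = _words(name)
        size = len(phrase)
        if size == 0:
            continue
        # Mark every token covered by an exact, case-insensitive phrase match.
        for start in range(len(words) - size + 1):
            if words[start:start + size] == phrase:
                for i in range(start, start + size):
                    covered[i] = True
    return sum(covered) / len(words) if words else 0.0


ENTITIES = ["Example Co", "Berlin", "Jane Doe"]  # hypothetical entity list
DENSE = "Example Co, founded by Jane Doe, opened its Berlin office in 2021."
VAGUE = "We help businesses grow with innovative, best-in-class solutions."
print(f"{entity_density(DENSE, ENTITIES):.0%} vs {entity_density(VAGUE, ENTITIES):.0%}")
```

The dense sentence scores 5 entity tokens out of 12 words, while the vague marketing sentence scores zero; a production version would swap the fixed list for an NER model so dates and unlisted names also count.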

AI bots read pages from top to bottom and appear to give the opening of a page disproportionate weight. An analysis of 1.2 million search results found that 44.2% of ChatGPT citations come from the first 30% of the content. When a web page SEO checker confirms that the primary facts appear near the top of the document, organizations can be more confident that generative models will find their core message. This structured approach also helps publishers avoid outdated keyword stuffing. Organizations that adopt these strategies turn long paragraphs into concise, machine-readable answers.
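A front-loading check of this kind can be sketched by measuring where a key fact first appears as a fraction of the text. This assumes character offsets are an acceptable proxy for "the first 30% of the content"; the cited analysis may have measured position differently. The function names and sample page are hypothetical.

```python
def first_position(text, fact):
    """Where `fact` first appears, as a fraction of the text length (None if absent)."""
    if not text:
        return None
    index = text.lower().find(fact.lower())
    return None if index == -1 else index / len(text)


def fact_is_front_loaded(text, fact, cutoff=0.30):
    """True when the fact starts within the first `cutoff` share of the text."""
    position = first_position(text, fact)
    return position is not None and position < cutoff


# Hypothetical BLUF-style page: the answer first, supporting detail later.
PAGE = (
    "The Pro plan costs $49 per month. "
    + "Supporting detail. " * 40
    + "A free trial lasts 14 days."
)
print(fact_is_front_loaded(PAGE, "costs $49"))                 # answer sits near the top
print(fact_is_front_loaded(PAGE, "free trial lasts 14 days"))  # buried at the end
```

The pricing answer passes the check, while the trial length, placed at the very end, does not; an audit would flag it for promotion into the opening layer of the BLUF structure.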

Content Value Through Structured Formats

These machine-readable answers rely on structured content blocks that improve how efficiently machine-learning algorithms process and extract information. Generative models look for specific formatting patterns to identify relationships between concepts. An initial on-page analysis often shows that dense paragraphs do not clearly present valuable answers to these bots. Publishers create clarity by breaking down complex ideas into organized formats.

Frequently Asked Questions (FAQ) blocks serve as an effective structure for AI extraction because they mirror the exact question-and-answer format that language models use. Recent research from Frase shows that FAQ schema earns the highest AI citation rate among all available schema types. A standard web page SEO checker helps website owners identify missing markup opportunities within these text blocks.

Developers prepare FAQ sections for generative engines by following a specific implementation sequence:

- They extract the most common user questions from previous content analysis reports.
- They draft concise answers that include relevant entities.
- They wrap the question and answer pairs in proper schema code.
- They test the implemented code with a validation tool to verify the formatting.

This sequence ensures that language models recognize the structured data and serve the answers to users.
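Steps 3 and 4 of that sequence can be sketched with schema.org's `FAQPage`, `Question`, and `Answer` types. The helper names `faq_jsonld` and `faq_problems` and the question-and-answer pairs are hypothetical; the structural check covers only obvious gaps and is meant to run before, not instead of, a full validator such as Google's Rich Results Test or the Schema Markup Validator.

```python
import json


def faq_jsonld(pairs):
    """Step 3: wrap (question, answer) pairs in schema.org FAQPage markup."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def faq_problems(markup):
    """Step 4 (partial): basic structural checks before running a full validator."""
    problems = []
    if markup.get("@type") != "FAQPage":
        problems.append("top-level @type is not FAQPage")
    for i, item in enumerate(markup.get("mainEntity", [])):
        if not item.get("name", "").strip():
            problems.append(f"question {i} has no text")
        if not item.get("acceptedAnswer", {}).get("text", "").strip():
            problems.append(f"question {i} has no answer")
    return problems


# Hypothetical pairs; steps 1 and 2 (choosing and drafting them) are editorial work.
pairs = [
    ("How long is the free trial?", "The free trial lasts 14 days."),
    ("Can teams export reports?", "Yes, every report can be exported as a CSV file."),
]
markup = faq_jsonld(pairs)
print(f'<script type="application/ld+json">\n{json.dumps(markup, indent=2)}\n</script>')
print(faq_problems(markup))
```

The printed `<script>` tag is what a page would embed; an empty problem list means each question has text and an answer, after which the markup goes to a dedicated validator.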

Measurement Workflows for Off-Page Trust Signals

Beyond on-site structured data, modern search visibility also requires tracking off-page trust signals and adopting new performance metrics. Legacy auditing focused mainly on a brand’s own domain, but generative engines build knowledge and synthesize information from across the web. AI models look for external validation on community platforms to confirm factual claims, so brands map their presence on forums like Reddit and Quora to understand how these models perceive their authority. A recent Amsive Digital analysis found that Perplexity cites Reddit in 46.7% of its responses, more than any other platform. A traditional web page SEO checker cannot measure these external signals, so organizations add social listening tools to their regular workflow.

Organizations also change how they measure success to account for off-page mentions. Traditional search engines provided relatively stable ranking positions, but AI systems produce probabilistic answers that vary with user context. Because these answers have no fixed ranking position, content analysis and technical SEO audits alone cannot provide the full picture. The industry has therefore begun measuring Share of Model (SoM), which calculates how often a brand appears in AI-generated answers for specific topic clusters. Tracking this metric alongside traditional data gives brands a more reliable view of performance and a clearer sense of how generative engines perceive and cite their content.
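Following the definition above, Share of Model can be computed as the fraction of sampled AI answers in each topic cluster that mention the brand. This sketch assumes the answers have already been collected (collection is out of scope) and uses naive case-insensitive substring matching, which would also count partial names such as a brand inside a longer word. The function name, brand, and answers are hypothetical.

```python
from collections import defaultdict


def share_of_model(samples, brand):
    """Per topic cluster, the fraction of sampled AI answers that mention `brand`."""
    totals = defaultdict(int)
    mentions = defaultdict(int)
    for cluster, answer in samples:
        totals[cluster] += 1
        if brand.lower() in answer.lower():
            mentions[cluster] += 1
    return {cluster: mentions[cluster] / totals[cluster] for cluster in totals}


# Hypothetical answers collected by re-running each prompt several times,
# since generative answers vary from run to run.
samples = [
    ("project tracking", "Popular picks include Example Co and Globex Boards."),
    ("project tracking", "Consider Globex Boards or Initech Plan."),
    ("project tracking", "Example Co is a common choice for small teams."),
    ("time tracking", "Initech Clock leads this category."),
]
print(share_of_model(samples, "Example Co"))
```

Here the brand appears in two of three project-tracking answers and none of the time-tracking answers. Because answers are probabilistic, sampling each prompt repeatedly and tracking the share over time gives a steadier signal than any single response.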

Conclusion

Search platforms have evolved into answer engines, and that shift fundamentally changes how technical audits evaluate website performance. Although traditional metrics remain useful, modern audit workflows require web page checkers that identify machine-readable structures rather than relying solely on keyword-rich text. Websites that fail to adapt to these new parameters risk a rapid decline in organic visibility. As AI models increasingly mediate how users find information, prioritizing entity relationships and fact density provides a clear advantage. Website owners and developers respond to these changes by evaluating schema markup and crawler accessibility. The next step is implementing the optimization measures needed to keep a brand visible in tomorrow’s AI citation landscape.