Introduction

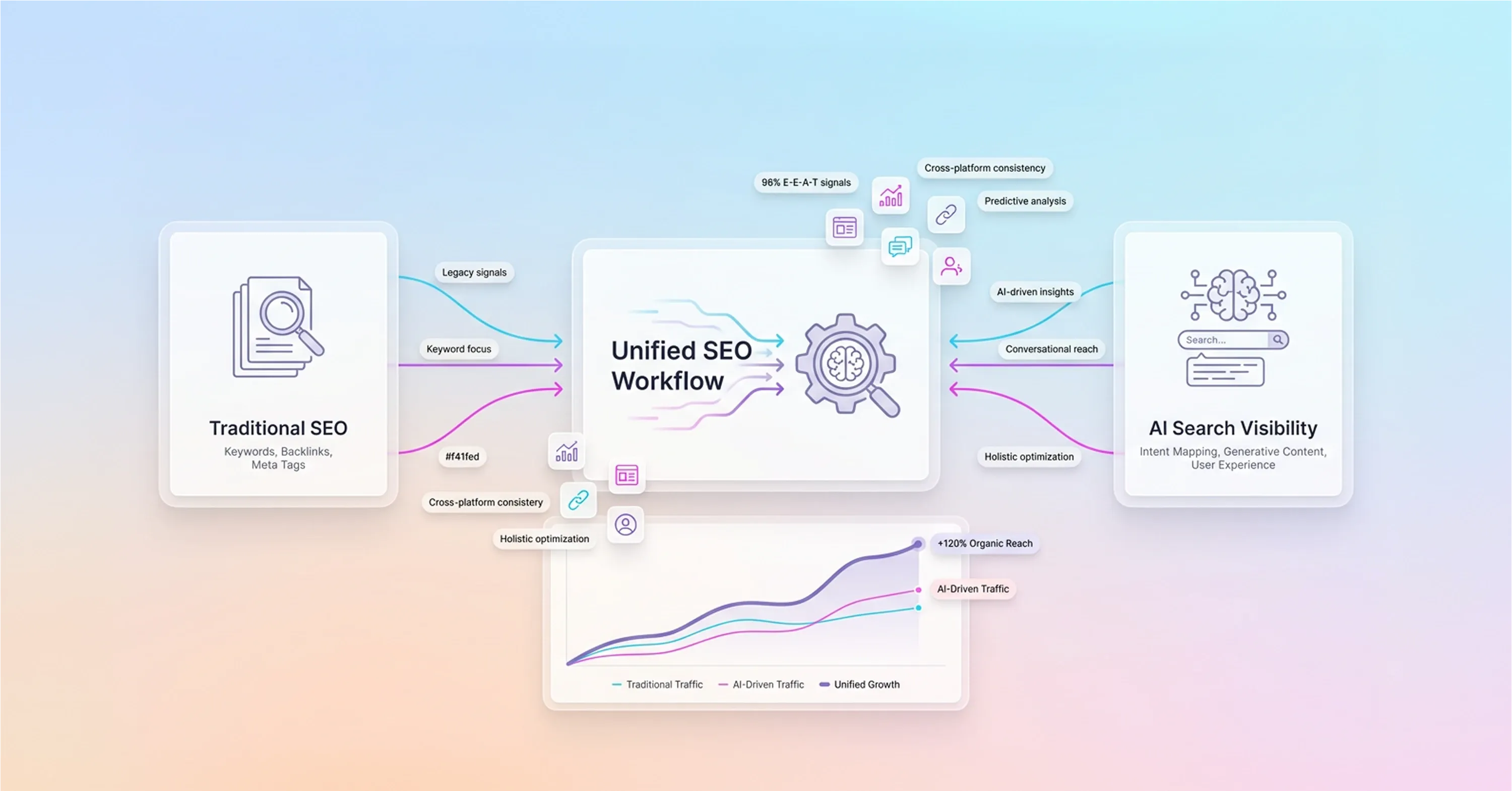

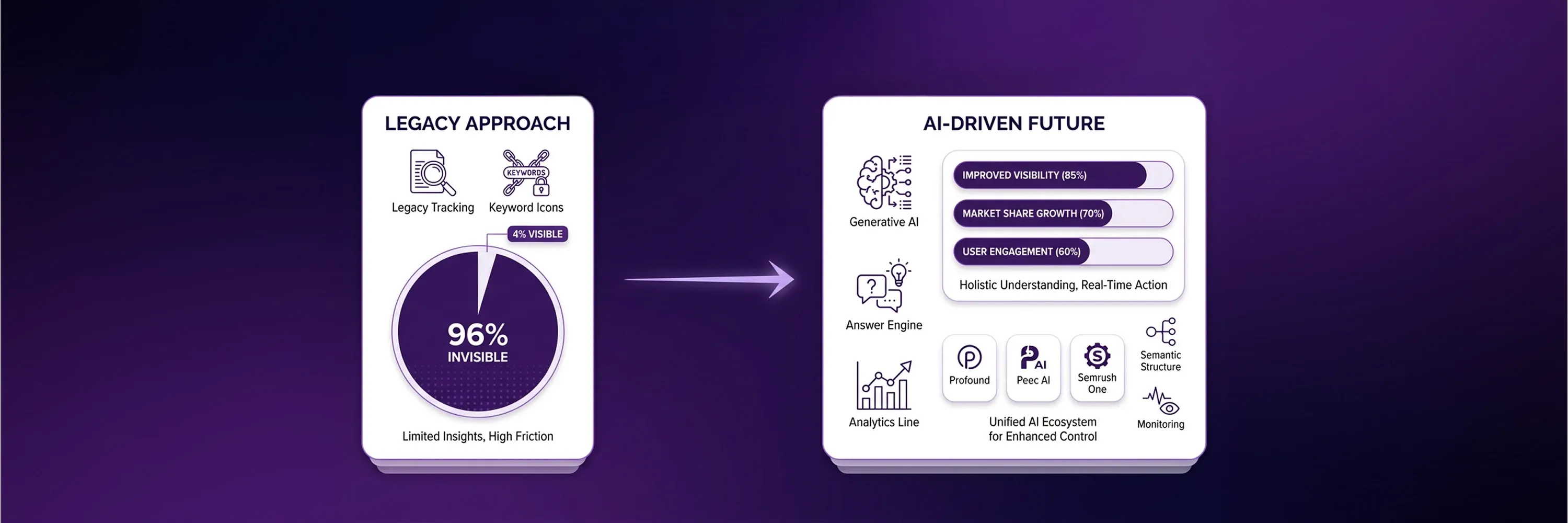

Search engine optimization is the foundation of digital visibility. For more than two decades, optimization has served human intent through traditional search algorithms that return lists of links. Today, artificial intelligence has split search behavior into two distinct tracks: conventional blue-link exploration and generative engine extraction. However, many organizations treat these tracks as separate initiatives, building isolated teams and redundant workflows. Fragmented data, unclear ownership, and weak collaboration undermine optimization strategies, and organizations that separate traditional search from AI optimization waste resources and risk strategic paralysis. A unified strategy for SEO helps brands maintain sustained visibility across all interfaces without duplicating operational effort. By restructuring content creation and measurement, organizations can satisfy conventional search systems and large language models at the same time. In this article, we explore how to build a dual-engine approach that secures a comprehensive digital presence.

Dual-Search Paradigm And The Cost Of Isolation

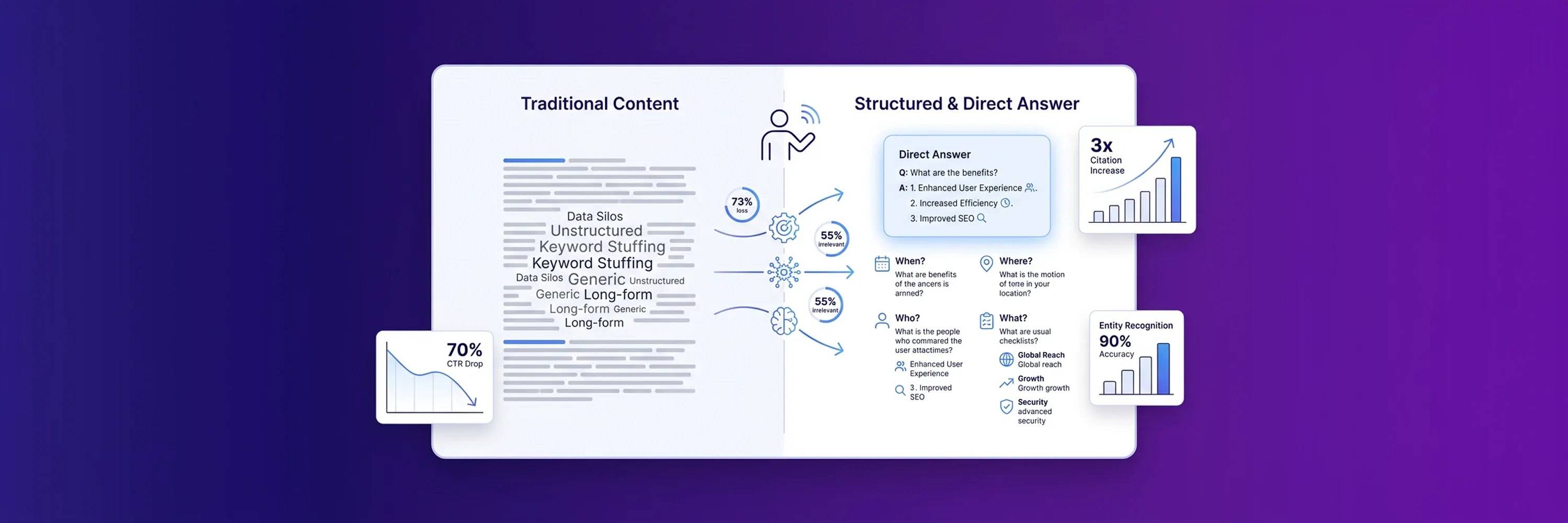

Organizations must understand the cost of isolation before they build this dual-engine approach. When organizations isolate traditional search efforts from generative engine optimization, they create strategic paralysis and waste valuable company resources. Many companies build separate teams to handle conventional rankings and new AI interfaces. This division creates a gap between how systems index content and how organizations produce it. A unified architecture bridges this implementation gap by aligning traditional algorithms with large language models. When organizations combine their efforts, they build a single, systematic workflow that satisfies both systems simultaneously. This approach forms the foundation of a modern strategy for SEO.

Search engines increasingly blend traditional results with generative answers. A BrightEdge study found that the overlap between AI Overview citations and organic rankings grew from 32% to 54.5% over 16 months. Because the two systems share so much ground, separate initiatives duplicate organizational effort. A comprehensive SEO roadmap must account for this growing overlap to prevent wasted resources. Companies that maintain isolated teams often publish redundant content that competes against itself in search results. Organizations should instead run a single pipeline that optimizes for both text extraction and traditional indexing, because traditional Google search engine optimization practices still provide the structural foundation for generative visibility. Aligned initiatives reduce operational costs and improve overall brand reach across digital platforms.
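The sketch below makes the overlap figure concrete by computing one possible version of it for a single query. It assumes that "overlap" means the share of URLs cited in an AI answer that also appear in the organic results for the same query. The BrightEdge methodology may differ, and the function names and sample URLs are hypothetical.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce a URL to host + path so scheme, "www.", query strings, and trailing slashes don't matter.

    Lowercasing the whole URL is a simplification: paths can be case-sensitive.
    """
    parts = urlsplit(url.lower())
    host = parts.netloc.removeprefix("www.")
    return host + parts.path.rstrip("/")


def citation_overlap(cited_urls: list[str], organic_urls: list[str]) -> float:
    """Share of URLs cited in an AI answer that also appear in the organic results for the same query."""
    cited = {normalize(u) for u in cited_urls}
    organic = {normalize(u) for u in organic_urls}
    if not cited:
        return 0.0
    return len(cited & organic) / len(cited)


cited = [
    "https://www.example.com/guide/",
    "https://reddit.com/r/seo/comments/abc",
    "https://example.org/faq",
]
organic = [
    "https://example.com/guide",
    "https://example.org/faq?ref=nav",
    "https://example.net/page",
]
print(citation_overlap(cited, organic))  # 2 of the 3 cited URLs also rank organically
```

Averaging this value across a fixed set of tracked queries, and repeating the measurement over time, would give a trend comparable in spirit to the study's figure.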

Content Architecture For Dual Visibility

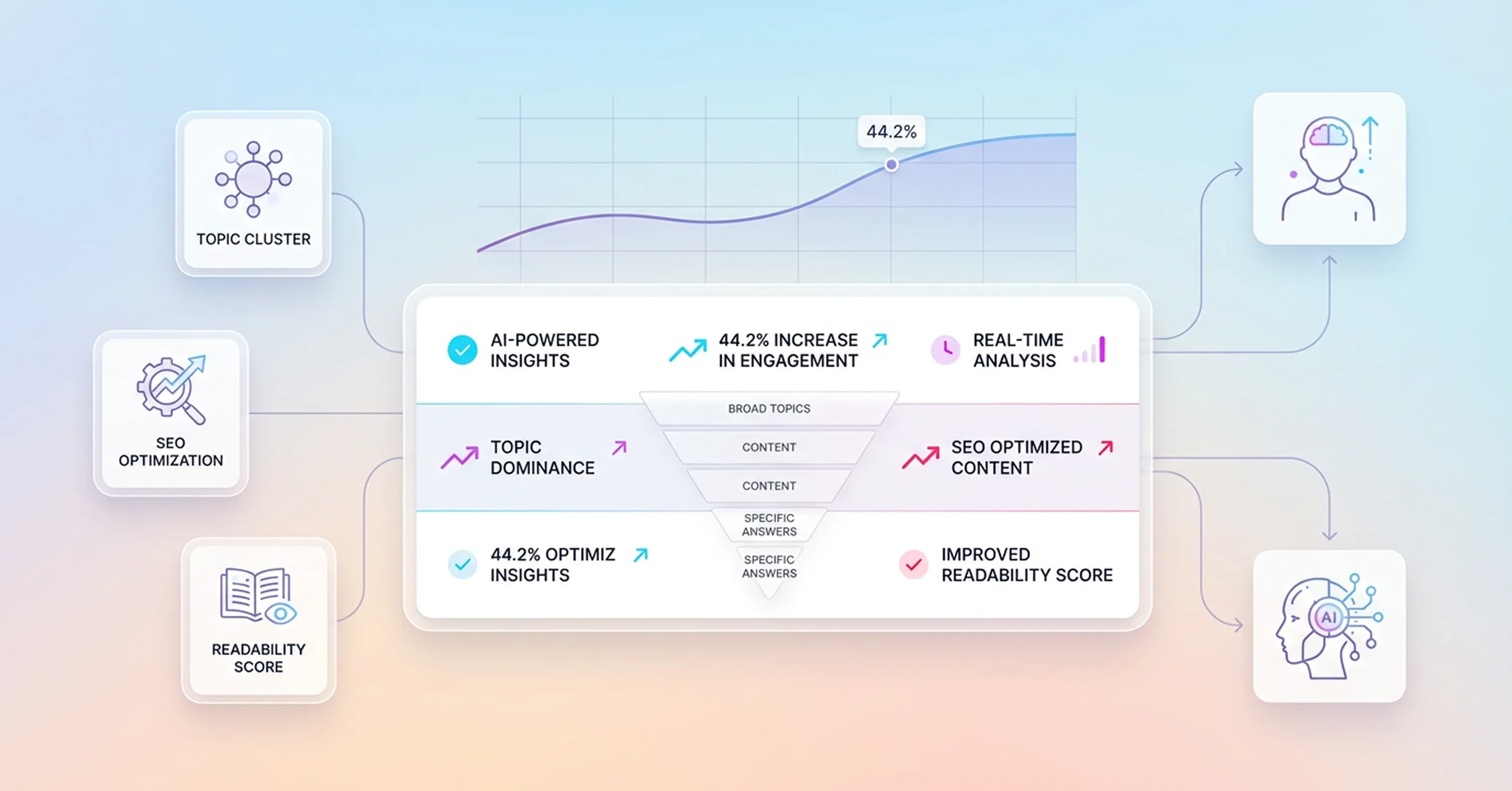

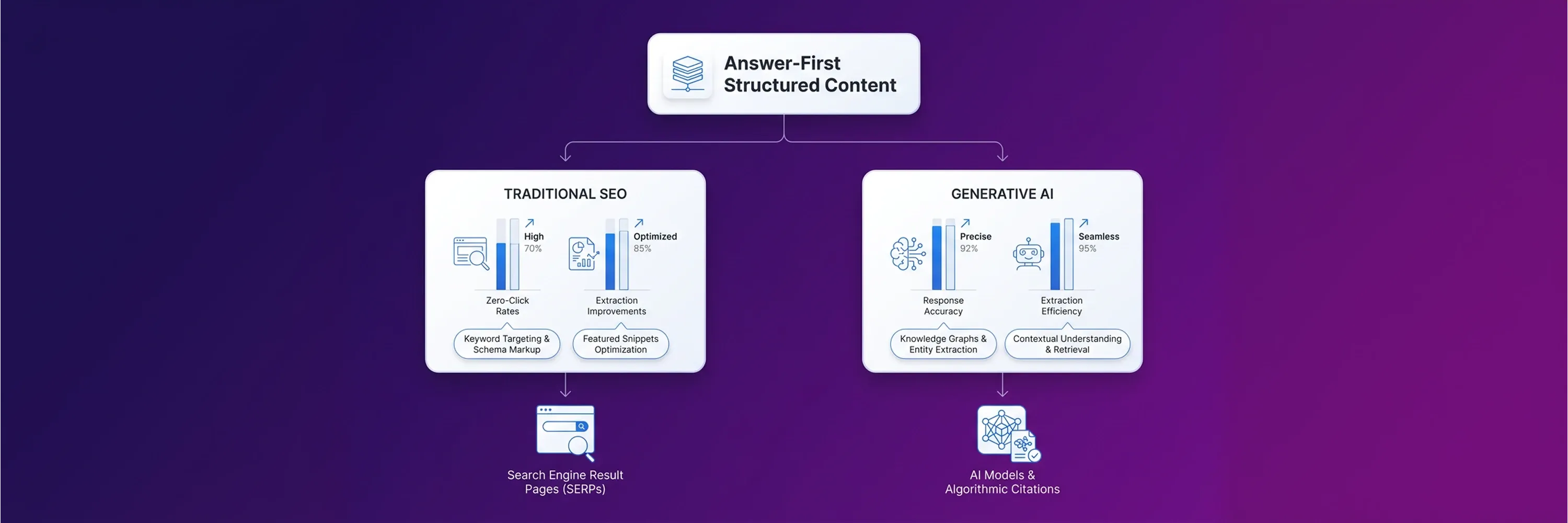

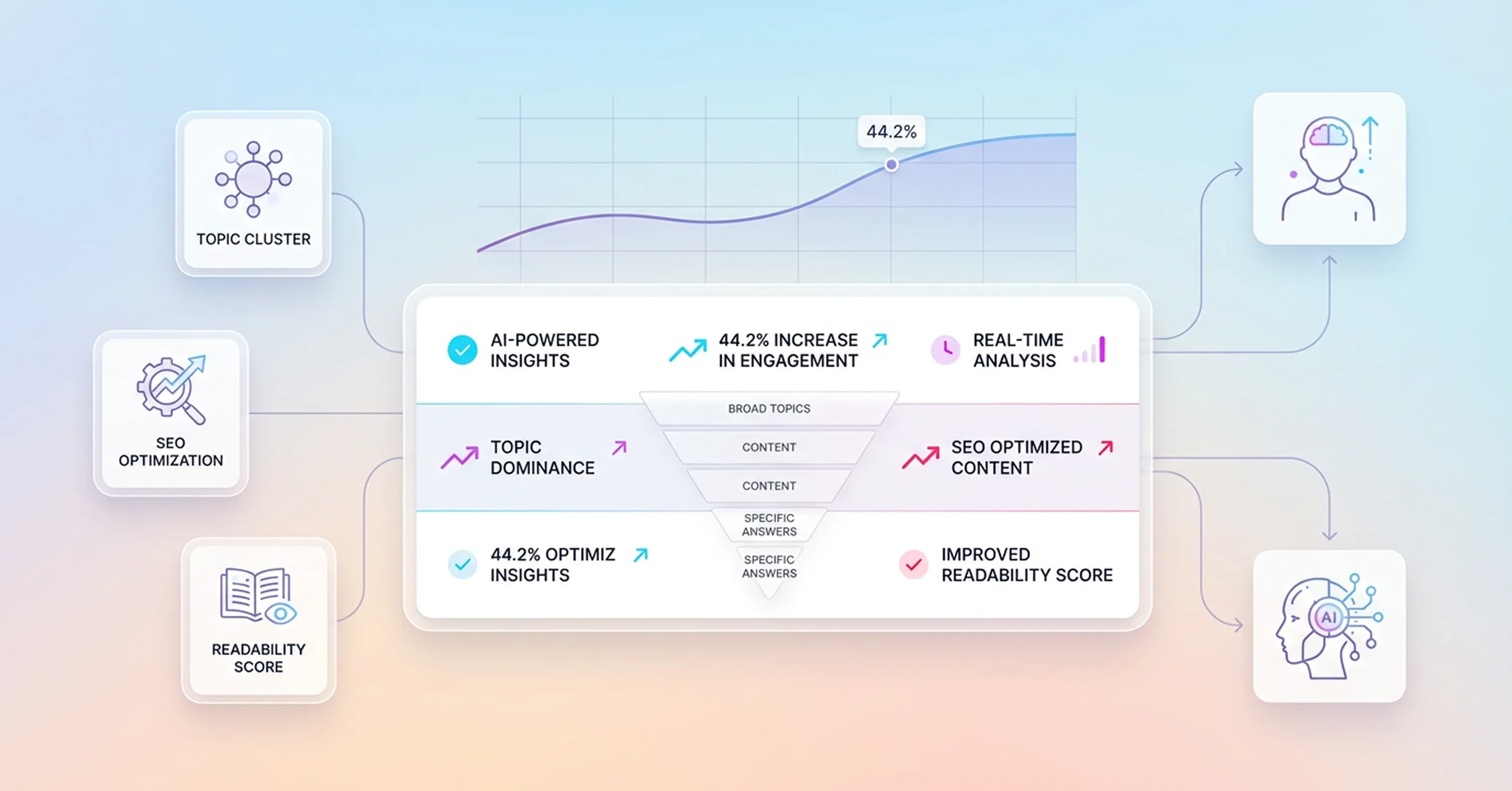

Organizations improve brand reach across digital platforms when they build a deliberate content architecture. Within this architecture, restructured topic clusters satisfy both human skimming and machine chunk-level extraction, so properly formatted text serves two distinct algorithmic needs while preserving the reading experience. A methodical approach ensures that paragraphs flow naturally for readers and remain easy for artificial intelligence systems to parse. If writers do not organize information logically, generative models struggle to extract the core message.

Structured content bridges the gap between traditional indexing and machine extraction. Organizations implement several formatting techniques to achieve this dual visibility:

- Short paragraphs with clear subjects and action verbs
- The most important conclusions at the beginning of the article
- Descriptive subheadings that define the exact topic of each section
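Parts of this checklist can be automated. The minimal sketch below flags paragraphs that may be too long to work as self-contained chunks. The 80-word threshold and the blank-line paragraph convention are assumptions, not published limits.

```python
import re

MAX_WORDS = 80  # hypothetical threshold; tune it to your own style guide


def long_paragraphs(text: str, max_words: int = MAX_WORDS) -> list[tuple[int, int]]:
    """Return (paragraph_index, word_count) for every paragraph longer than max_words.

    Paragraphs are assumed to be separated by one or more blank lines.
    """
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    return [
        (i, len(p.split()))
        for i, p in enumerate(paragraphs)
        if len(p.split()) > max_words
    ]


doc = "Short intro.\n\n" + "word " * 120 + "\n\nAnother short paragraph."
print(long_paragraphs(doc))  # [(1, 120)]
```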

Search Engine Land reports that 44.2% of ChatGPT citations come from the first 30% of content, which shows the importance of front-loading information. One plausible explanation is that generative engines weight early content heavily when they formulate answers. When organizations place critical definitions near the top of the page, they increase the likelihood of appearing in AI search results. This placement also helps human readers who want immediate answers before reading the rest of the text.
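One quick way to check front-loading is to measure where a key definition first appears in a page's text. This sketch measures position by character count and uses the 30% cutoff from the Search Engine Land figure. The character-based measure and the function names are illustrative assumptions.

```python
from typing import Optional


def first_mention_position(text: str, phrase: str) -> Optional[float]:
    """Where `phrase` first appears, as a fraction of the text's length in characters (0.0 = start).

    Returns None if either string is empty or the phrase is absent. Matching is case-insensitive.
    """
    if not text or not phrase:
        return None
    lowered = text.lower()
    idx = lowered.find(phrase.lower())
    if idx == -1:
        return None
    return idx / len(lowered)


def is_front_loaded(text: str, phrase: str, cutoff: float = 0.30) -> bool:
    """True if the phrase first appears within the first `cutoff` share of the text."""
    pos = first_mention_position(text, phrase)
    return pos is not None and pos < cutoff


page = "a" * 90 + "key fact" + "b" * 2  # 100 characters, phrase starts at index 90
print(first_mention_position(page, "Key Fact"))  # 0.9
print(is_front_loaded(page, "key fact"))  # False
```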

SEO Planning With Atomic Facts

Article authors not only help readers but also improve machine extraction when they write standalone atomic facts: short, self-contained statements that remain accurate even when quoted out of context.

Generative models look for concise statements that they can easily pull and present to users. This extraction process relies on clear definitions rather than long explanations. When writers isolate specific facts, they feed information directly into LLMs.

Search Engine Land reports that highly cited content uses definitive language such as "X is" twice as often as vague framing. This finding suggests that algorithms favor direct answers. Direct articles avoid ambiguous language and make concrete claims. Short, factual sentences give artificial intelligence systems exactly what they need to build comprehensive answers, and this direct writing style removes friction and improves visibility across search interfaces.
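Writers can roughly audit their own framing by counting definitional patterns against hedging words. The word lists below are hypothetical proxies, not the classification Search Engine Land used, and regular expressions will miss many phrasings.

```python
import re

# Hypothetical proxies: "is/are + article" for definitional framing, a short list of hedge words for vague framing.
DEFINITIVE = re.compile(r"\b(?:is|are)\s+(?:a|an|the)\b", re.IGNORECASE)
VAGUE = re.compile(r"\b(?:might|may|could|perhaps|possibly|arguably|somewhat)\b", re.IGNORECASE)


def framing_counts(text: str) -> dict[str, int]:
    """Count definitional patterns and hedge words in a piece of text."""
    return {
        "definitive": len(DEFINITIVE.findall(text)),
        "vague": len(VAGUE.findall(text)),
    }


sample = (
    "A sitemap is a file that lists a site's URLs. It might help crawlers. "
    "Schema.org is the shared vocabulary for structured data."
)
print(framing_counts(sample))  # {'definitive': 2, 'vague': 1}
```

A ratio well below 2:1 on a key page would be a prompt to review its wording, not proof that the page underperforms.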

Front-Loading Information For LLM Extraction

Writers also improve visibility in modern search systems when they place critical information in the top third of pages, because algorithms appear to prioritize early content when they scan documents for answers. If writers bury important definitions at the bottom of an article, large language models are less likely to cite them.

An organized page structure puts the core message in the introduction. Writers state their main claims immediately, then provide detailed reasoning and evidence. This structural hierarchy mirrors how human readers scan articles for relevance. When a page starts with a strong summary, the extraction engine can quickly verify the value of the content. Organizations that adopt this inverted pyramid structure tend to see higher citation rates, likely because the structure reduces the computational effort required to understand their pages.

Traditional Readability

Even though the inverted pyramid structure reduces computational effort for algorithms, this machine optimization should never compromise user engagement or narrative flow. While artificial intelligence prefers short facts, human readers still need context and storytelling to understand complex topics. Writers face the challenge of blending these two requirements into a single piece of content.

A coordinated approach allows organizations to maintain a natural narrative flow while supporting algorithmic structures. Writers use formatting tools to highlight facts without sacrificing the reading experience: bullet points and bold text help algorithms identify key information, while transitional sentences guide human readers through the broader argument. If content feels robotic or disjointed, human visitors leave the page quickly, and weak engagement can signal low quality to traditional search algorithms. Therefore, writing for humans remains the ultimate safeguard for long-term digital visibility.

Platform-Specific Citation Logic Integration

Organizations build on this long-term digital visibility when they balance varying ranking signals across different platforms. Every search system uses its own logic to evaluate and rank content. Google continues to rely on traditional backlinks and user experience metrics, while Perplexity prioritizes niche community discussions. Meanwhile, an AI search engine like ChatGPT favors authoritative media outlets and established encyclopedias.

A successful strategy for SEO requires a method for satisfying these diverse citation preferences within a single approach. Magazine Manager reports that citations are consolidating around a few sources, with Reddit, Wikipedia, and TechRadar together accounting for one out of every five citations. This data suggests that LLMs trust established community consensus and credentialed media more than standard corporate blogs. At the same time, companies cannot afford to create separate content for each platform.
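A team could check whether a similar concentration holds for its own topics by logging the URLs cited in AI answers and computing the combined share of a few domains. This sketch assumes such a log already exists. The domain list mirrors the sources named above, and the function is hypothetical.

```python
from urllib.parse import urlsplit


def combined_share(cited_urls: list[str], domains: set[str]) -> float:
    """Fraction of cited URLs whose host is one of `domains` or a subdomain of one."""
    if not cited_urls:
        return 0.0

    def matches(url: str) -> bool:
        host = urlsplit(url).hostname or ""  # .hostname is already lowercased, port removed
        return any(host == d or host.endswith("." + d) for d in domains)

    return sum(matches(u) for u in cited_urls) / len(cited_urls)


log = [
    "https://www.reddit.com/r/seo/comments/xyz",
    "https://en.wikipedia.org/wiki/Search_engine_optimization",
    "https://example.com/a",
    "https://example.com/b",
    "https://notreddit.com/c",  # must not count as reddit.com
]
print(combined_share(log, {"reddit.com", "wikipedia.org", "techradar.com"}))  # 0.4
```

Matching on the dotted suffix, rather than a plain `endswith(d)`, keeps look-alike hosts such as `notreddit.com` out of the count.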

Instead, companies should rely on a unified framework that addresses all these signals simultaneously. This integration happens when organizations blend expert quotes, structured data, and community perspectives into their core content. For instance, when a writer cites a Reddit discussion within a well-researched article, the citation adds the community validation that generative engines seek. At the same time, proper schema markup helps traditional algorithms categorize the page correctly. A strong SEO roadmap plans for this cross-platform compatibility from the very beginning, and organizations build a resilient digital presence when they satisfy multiple algorithmic preferences at once.

Unified SEO Strategy For E-E-A-T

A resilient digital presence also requires strong digital authority, and teams build this authority when they project expertise uniformly across the entire digital landscape. Search algorithms and generative models both prioritize content from trusted creators and recognized industry leaders. When organizations isolate their authority-building efforts, they waste resources and create inconsistent brand messaging. Effective SEO planning aligns digital public relations with authentic forum participation to build trust across all platforms. This alignment ensures that AI content marketing initiatives perform well in both traditional rankings and machine-generated answers.

According to Wellows, generative engines now treat Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) signals as mandatory filtering criteria, and these signals appear in 96% of AI Overview citations. Because search engines rely so heavily on these trust signals, teams should integrate them directly into daily operations. A comprehensive SEO roadmap guides teams in building this authority systematically so that it never becomes an afterthought. Typical authority-building tactics include the following:

- Organizations participate in niche industry forums to answer complex user questions directly.
- Companies publish original research that authoritative media outlets want to reference.
- Teams feature subject matter experts in all technical articles to establish credibility.
- Departments distribute press releases that highlight real organizational achievements.

When organizations combine these authority-building tactics, they create a unified strategy that satisfies both human readers and extraction algorithms.

Trust Through Interconnected Knowledge Graphs

Organizations support this unified strategy technically when developers use nested schema markup to create a systematic network of data that extraction engines can easily understand. Although large language models can read unstructured text, they benefit from clearly defined entity relationships that remove ambiguity. Schema markup translates human-readable authority into machine-readable formats, which helps algorithms connect specific authors, organizations, and concepts into a detailed knowledge graph. Proper SEO planning helps teams embed this structured data across digital assets to establish clear entity authority.

According to Am I Cited, pages with proper schema markup are three times more likely to receive citations from artificial intelligence systems than unmarked content. Because generative models need to verify facts quickly, they prioritize pages that present information in standardized formats. A unified strategy for SEO relies on this technical foundation to bridge the gap between traditional indexing and modern extraction. If companies ignore structured data, they force algorithms to guess the context of their articles, and this guesswork often leads to lower visibility.

Effective SEO turns isolated web pages into an interconnected data network. Organizations can no longer rely on text alone to demonstrate their expertise. They must encode their credentials, authors, and primary topics in structured data that search engines can digest instantly. When these data points are clearly connected, organizations secure their position as authoritative entities for both traditional algorithms and generative models.
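The sketch below illustrates nested, interconnected markup. It builds a schema.org JSON-LD graph in which an Article points to its author and publisher by `@id`, and the author points to the organization through `worksFor`. The types and properties are standard schema.org vocabulary. The helper functions, names, and example.com URLs are placeholders.

```python
import json


def build_graph(headline: str, topic: str, author_name: str, author_url: str,
                org_name: str, org_url: str) -> dict:
    """Return a schema.org JSON-LD graph linking an article, its author, and the publisher."""
    org_id = org_url + "#organization"
    person_id = author_url + "#person"
    organization = {"@type": "Organization", "@id": org_id, "name": org_name, "url": org_url}
    person = {
        "@type": "Person",
        "@id": person_id,
        "name": author_name,
        "url": author_url,
        "worksFor": {"@id": org_id},  # reference by @id instead of duplicating the node
    }
    article = {
        "@type": "Article",
        "headline": headline,
        "author": {"@id": person_id},
        "publisher": {"@id": org_id},
        "about": {"@type": "Thing", "name": topic},
    }
    return {"@context": "https://schema.org", "@graph": [organization, person, article]}


def to_script_tag(graph: dict) -> str:
    """Serialize the graph into the <script> element that goes in the page's HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(graph, indent=2) + "\n</script>"


graph = build_graph(
    headline="Unified SEO Strategy",
    topic="Search engine optimization",
    author_name="Jane Doe",
    author_url="https://example.com/authors/jane-doe",
    org_name="Example Co",
    org_url="https://example.com",
)
print(to_script_tag(graph))
```

The sketch references nodes by `@id` instead of repeating the full Organization object inside every property. This lets one entity definition serve many pages, which is the "interconnected network" the paragraph above describes.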

Hybrid Measurement Dashboard For Iteration

Once organizations have established themselves as authoritative entities, they need to measure how well that position holds up in modern search. This measurement requires an analytical approach that tracks both traditional website traffic and machine-generated citations. For many years, data analysts measured success exclusively through organic clicks and keyword rankings. However, this click-only model fails to capture how users interact with generative answers: when users get their questions answered directly on the search results page, they do not always click through to the source website. A comprehensive SEO roadmap therefore includes hybrid measurement dashboards that monitor traditional traffic alongside citation frequency.

According to ZipTie, traditional metrics explain only 17% of citation variance in artificial intelligence systems, which makes new measurement infrastructure necessary. Because old metrics do not reflect modern visibility, companies need reporting tools that capture citations as well as clicks. This measurement approach involves tracking metrics such as Citation Share of Voice and AI Answer Inclusion Rate, which show how often large language models draw on a specific brand.
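The article names these metrics without defining them. The sketch below uses one plausible pair of definitions:

- Citation Share of Voice is the brand's fraction of all citations across a set of tracked prompts.
- AI Answer Inclusion Rate is the fraction of tracked answers that cite the brand at least once.

The data model and brand names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class TrackedAnswer:
    prompt: str
    cited_brands: list[str] = field(default_factory=list)  # one entry per citation in the AI answer


def citation_share_of_voice(answers: list[TrackedAnswer], brand: str) -> float:
    """Brand's citations divided by all citations across the tracked answers."""
    total = sum(len(a.cited_brands) for a in answers)
    ours = sum(a.cited_brands.count(brand) for a in answers)
    return ours / total if total else 0.0


def answer_inclusion_rate(answers: list[TrackedAnswer], brand: str) -> float:
    """Fraction of tracked answers that cite the brand at least once."""
    if not answers:
        return 0.0
    return sum(brand in a.cited_brands for a in answers) / len(answers)


answers = [
    TrackedAnswer("best crm for startups", ["acme", "rival", "wikipedia"]),
    TrackedAnswer("crm pricing", ["rival"]),
    TrackedAnswer("what is a crm", ["acme"]),
    TrackedAnswer("crm migration", []),
]
print(citation_share_of_voice(answers, "acme"))  # 0.4 (2 of 5 citations)
print(answer_inclusion_rate(answers, "acme"))  # 0.5 (2 of 4 answers)
```

The two numbers answer different questions. Share of voice measures how much of the citation space a brand occupies. Inclusion rate measures how many answers mention it at all.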

A successful strategy for SEO uses this hybrid data to establish a continuous iteration loop. When organizations see which facts generative engines extract most often, they update their content structures accordingly. When companies track both conventional clicks and machine citations, they adapt quickly to evolving search interfaces and maintain their competitive advantage.
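One way to close the loop is to join both signals per page and queue the pages that already earn organic clicks but are rarely cited. These pages are natural candidates for front-loading and atomic-fact rewrites. The thresholds, field names, and the prioritization rule itself are assumptions to adapt, not a standard.

```python
from dataclasses import dataclass


@dataclass
class PageMetrics:
    url: str
    organic_clicks: int     # e.g. from a search analytics export
    inclusion_rate: float   # share of tracked prompts whose AI answer cites this page


def revision_queue(pages: list[PageMetrics], min_clicks: int = 100,
                   max_inclusion: float = 0.10) -> list[PageMetrics]:
    """Pages that already earn organic clicks but are rarely cited, busiest first."""
    flagged = [p for p in pages
               if p.organic_clicks >= min_clicks and p.inclusion_rate <= max_inclusion]
    return sorted(flagged, key=lambda p: p.organic_clicks, reverse=True)


pages = [
    PageMetrics("/pricing", 500, 0.05),
    PageMetrics("/blog/old-post", 50, 0.0),
    PageMetrics("/guide", 900, 0.40),
    PageMetrics("/faq", 200, 0.10),
]
print([p.url for p in revision_queue(pages)])  # ['/pricing', '/faq']
```

After each revision, re-measuring the same pages shows whether the restructuring moved citation inclusion without costing organic clicks.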

Conclusion

Companies maintain their competitive advantage when they use integrated search initiatives to ensure sustained visibility across digital interfaces. A unified strategy prevents wasted resources and maximizes overall brand reach when companies break down operational silos between click-based and citation-based optimization teams. As search engines continue to evolve into conversational agents, a hybrid measurement framework ensures that an organization remains resilient against algorithmic shifts. This process requires teams to evaluate current SEO best practices and implement a continuous iteration loop that aligns traditional content structures with artificial intelligence extraction requirements.